11 Software Essays That Transformed My Engineering Mindset


Over the years, a selection of software essays has fundamentally reshaped my engineering mindset. Each piece offered a unique lesson, shifting my perspective on technology choices, architecture, coding practices, and even career development.

This article shares the most impactful essays and the key lessons I gleaned from each. These insights span from pragmatic engineering advice to philosophical understandings, all profoundly influencing my work as an engineer and leader. My hope is that by reflecting on these learnings, you may also find them valuable, and perhaps be inspired to read the original essays.

Here they are, presented in no particular order:

- Choose Boring Technology (2015) by Dan McKinley

- Parse, Don’t Validate (2019) by Alexis King

- Things You Should Never Do, Part I (2000) by Joel Spolsky

- The Majestic Monolith (2016) by David Heinemeier Hansson (DHH)

- The Joel Test (2000) by Joel Spolsky

- How to Design a Good API and Why It Matters (2007) by Joshua Bloch

- The Rise of “Worse is Better” (1989) by Richard P. Gabriel

- The Grug-Brained Developer (2022) by Carson Gross

- Software Quality at Top Speed (1996) by Steve McConnell

- Don’t Call Yourself a Programmer (2011) by Patrick McKenzie

- How To Become a Better Programmer by Not Programming (2007) by Jeff Atwood

1. Choose Boring Technology (2015) by Dan McKinley

Early in my career, I often succumbed to the "shiny object syndrome" – being drawn to the latest frameworks, databases, or tools. Dan McKinley’s essay, "Choose Boring Technology," was a revelation, effectively granting me permission to disregard the hype cycle.

McKinley argues that every team possesses a limited number of "innovation tokens" to spend on new technology. Therefore, these tokens must be allocated wisely. Innovation is a scarce resource; it should be reserved for areas that genuinely differentiate your product, while proven, reliable technology should be utilized for everything else.

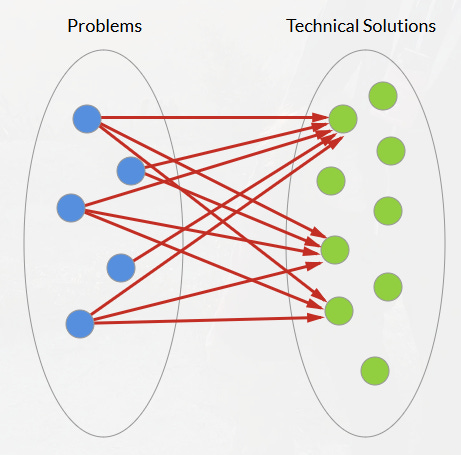

His advice favors technologies with well-understood failure modes and decades of documentation—the "boring" choices like MySQL, Postgres, and Cron—precisely because they function so reliably. McKinley suggests that when selecting a tool, "the best tool is the one that occupies the ‘least worst’ position for as many of your problems as possible," emphasizing that long-term system reliability far outweighs any short-term development convenience.

Choosing the best tool for the job (Source: Dan McKinley’s Choose Boring Technology)
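One possible reading of the "least worst" idea, sketched in Python: rate each candidate tool against each problem, then pick the tool whose weakest rating is the highest. The tool names, problem names, and scores below are invented for illustration, and the max-of-min rule is my interpretation rather than a formula from McKinley's essay.

```python
# Hypothetical fit scores (0 = unusable, 10 = ideal) per problem.
# All names and numbers here are made up for illustration.
FIT_SCORES = {
    "boring_sql_db": {"orders": 8, "job_queue": 6, "search": 5},
    "shiny_graph_db": {"orders": 3, "job_queue": 2, "search": 9},
    "shiny_queue": {"orders": 2, "job_queue": 9, "search": 3},
}


def least_worst_tool(fit_scores):
    """Return the tool whose weakest score across all problems is highest."""
    return max(fit_scores, key=lambda tool: min(fit_scores[tool].values()))


print(least_worst_tool(FIT_SCORES))
```

With these made-up numbers the boring database wins: it is never the best at anything, but it is never terrible either, which is the trade the essay argues for.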

After reading McKinley’s message, my perspective on new tech stacks transformed. I shed the guilt associated with using "boring" languages and frameworks. Instead of experimenting with every new JavaScript library or cloud service, I now commit to a few stable, well-understood choices. This shift has made me a faster and more effective engineer. My projects encounter fewer unexpected issues, new team members are onboarded more easily due to a common and well-documented stack, and less time is spent grappling with tools.

"Choose Boring Technology" taught me that innovation is a budget; it should be spent where it genuinely counts. There’s no need to reinvent the wheel. By using stable technology for most aspects, teams can reserve their innovative efforts for what truly matters: their product’s unique domain. It is a pragmatic lesson that has enhanced both my individual productivity and my team’s collective efficiency.

2. Parse, Don’t Validate (2019) by Alexis King

This essay explores how to leverage static types for building more robust software. Alexis King’s "Parse, Don’t Validate" fundamentally altered my approach to handling input data, regardless of the programming language.

The core idea is simple yet profound: whenever you validate data, instead of merely returning an error or a boolean flag, write a function that parses the data into a richer, more specific type. By doing so, you make invalid states unrepresentable within your program.

Before encountering this essay, my approach to input checking was primarily defensive. For instance, consider a reports endpoint with query parameters like GET /reports?from=2025-01-01&to=2025-12-31&limit=500000. In a "validate" style, I would implement helper functions like validateRange(from, to) and validateLimit(limit), then continue passing raw string/int types deeper into the system. A single missed guard could lead to an unbounded limit hitting production, resulting in a massive and potentially destructive database query.

King describes this pattern as pushing invalid data forward and relying on repetitive checks, or "shotgun parsing." The solution lies in flipping the flow: parse the data once at the system boundary into a richer, more expressive type, then operate the rest of the system on that validated type. Thus, instead of "validate + keep primitives," I now parse inputs into a ReportQuery value object:

- A DateRange type guarantees from ≤ to and enforces a maximum span.

- A PageSize type guarantees a valid range, e.g., 1...1000.

- The core code only accepts ReportQuery objects, never raw parameters.

With this approach, invalid states cannot enter the domain model, eliminating the possibility of forgotten validations. There is no longer a path for raw limit values or raw dates to flow into critical logic. Instead of scattering checks throughout the codebase, I now design data types that enforce these constraints at the point of construction.
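Here is a minimal Python sketch of that ReportQuery idea. The field names, the parse_report_query and run_report helpers, and the 366-day maximum span are assumptions made for illustration; only the 1 to 1000 page-size bound comes from the example above. Python does not enforce type hints at runtime, so the "core code only accepts ReportQuery" guarantee relies on a static type checker in this sketch.

```python
from dataclasses import dataclass
from datetime import date, timedelta

MAX_SPAN = timedelta(days=366)  # assumed maximum report span
MAX_PAGE_SIZE = 1000            # upper bound from the 1...1000 example


@dataclass(frozen=True)
class DateRange:
    start: date
    end: date

    def __post_init__(self):
        # Constraints are checked once, at construction time.
        if self.start > self.end:
            raise ValueError("'from' must not be after 'to'")
        if self.end - self.start > MAX_SPAN:
            raise ValueError("date range exceeds the maximum span")


@dataclass(frozen=True)
class PageSize:
    value: int

    def __post_init__(self):
        if not 1 <= self.value <= MAX_PAGE_SIZE:
            raise ValueError(f"limit must be between 1 and {MAX_PAGE_SIZE}")


@dataclass(frozen=True)
class ReportQuery:
    date_range: DateRange
    page_size: PageSize


def parse_report_query(params: dict[str, str]) -> ReportQuery:
    """Parse raw query parameters once, at the system boundary.

    Raises ValueError on invalid values and KeyError on missing ones.
    """
    date_range = DateRange(
        start=date.fromisoformat(params["from"]),
        end=date.fromisoformat(params["to"]),
    )
    return ReportQuery(date_range, PageSize(int(params["limit"])))


def run_report(query: ReportQuery) -> str:
    # Core logic receives an already-parsed value, so it has no guards.
    return (
        f"rows {query.date_range.start}..{query.date_range.end}, "
        f"limit {query.page_size.value}"
    )
```

Calling parse_report_query with limit=500000 raises a ValueError at the boundary, so the oversized limit from the earlier example never reaches run_report.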

3. Things You Should Never Do, Part I (2000) by Joel Spolsky

Early in my career, I frequently fell into the trap Joel Spolsky describes in "Things You Should Never Do, Part I": I wanted to rewrite a messy legacy codebase from scratch. Spolsky’s essay stopped me, likely saving me from disastrous rewrites.

His key lesson is clear: never discard working code and start over. While old code might appear unsightly, it embodies years of bug fixes and hard-earned knowledge. Rewriting from scratch means abandoning all that accumulated wisdom and inevitably reintroducing bugs that were resolved long ago.

Joel Spolsky, Co-founder of Stack Overflow and Trello

Spolsky famously uses Netscape as an example; they chose to rewrite Navigator from the ground up, and during the years it took, Internet Explorer swiftly gained market dominance. Joel explains that old code often appears messy to newcomers because "it’s harder to read code than to write it." Every experienced engineer, upon viewing a large codebase, encounters complex sections and believes they could improve them. However, these complexities often exist for very valid reasons: each seemingly odd piece might be a fix for a specific user bug, a workaround for an operating system quirk, or a performance optimization.

Joel’s point, in paraphrase: that two-page function that grew warts is full of bug fixes, and each one took weeks of real-world usage to discover and days to fix. If you throw away the code and start over, you throw away all that knowledge and all those fixes. I realized I had been significantly underestimating the value of legacy code. Fundamentally, legacy code generates revenue, and that truth cannot be overlooked.

After reading this essay, my approach changed. When confronting a legacy module, I resist the urge for sweeping, "big bang" changes. Instead, I refactor iteratively, improving it piece by piece, writing tests around existing functionality, and slowly modernizing it.
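"Writing tests around existing functionality" is often done with characterization tests: record what the legacy code returns today, then require the refactored version to match. The sketch below assumes a made-up legacy_shipping_cost function; in a real project the recorded outputs would usually be stored in a file rather than recomputed in-process.

```python
def legacy_shipping_cost(weight_kg, country):
    # Hypothetical legacy function: odd branches left exactly as found.
    cost = 5.0 + 1.2 * weight_kg
    if country == "CH":
        cost += 7.5        # customs handling, reason long forgotten
    if weight_kg > 30:
        cost = cost * 1.1  # surcharge added after some past incident
    return round(cost, 2)


# Characterization cases: inputs paired with whatever the code returns today.
CASES = [(1, "DE"), (10, "CH"), (31, "DE"), (40, "CH")]
RECORDED = {case: legacy_shipping_cost(*case) for case in CASES}


def refactored_shipping_cost(weight_kg, country):
    # Same arithmetic, restructured so each rule has a name.
    base = 5.0 + 1.2 * weight_kg
    customs = 7.5 if country == "CH" else 0.0
    surcharge = 1.1 if weight_kg > 30 else 1.0
    return round((base + customs) * surcharge, 2)


def test_refactor_preserves_behavior():
    for case, expected in RECORDED.items():
        assert refactored_shipping_cost(*case) == expected


test_refactor_preserves_behavior()
```

The test does not claim the old behavior is right, only that the refactoring did not change it. That keeps every strange-looking branch, and the bug fix it may represent, in place until someone deliberately decides otherwise.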

In this context, it's also worth recalling Fred Brooks' wisdom from his 1975 book, "The Mythical Man-Month":

"An architect’s first work is apt to be spare and clean. He knows he doesn’t know what he’s doing, so he does it carefully and with great restraint. As he designs the first work, frill after frill and embellishment after embellishment occur to him. These get stored away to be used ‘next time.’ Sooner or later the first system is finished, and the architect, with firm confidence and a demonstrated mastery of that class of systems, is ready to build a second system. This second is the most dangerous system a man ever designs. When he does his third and later ones, his prior experiences will confirm each other as to the general characteristics of such systems, and their differences will identify those parts of his experience that are particular and not generalizable. The general tendency is to over-design the second system, using all the ideas and frills that were cautiously sidetracked on the first one."

The Mythical Man-Month by Fred Brooks

4. The Majestic Monolith (2016) by David Heinemeier Hansson (DHH)

Around 2015-2016, microservices were immensely popular. Companies were eagerly dismantling monolithic applications into dozens of smaller services. I, too, was initially caught up in this "microservices mania," until I read DHH’s "The Majestic Monolith."

This essay provided a refreshing, contrarian perspective at a time when it seemed every system had to emulate the distributed architectures of giants like Netflix or Amazon. DHH’s core message was clear: microservices are not a solution for every problem; in fact, most problems don’t require them. For the majority of products, especially those managed by smaller teams, a well-structured monolith is not only sufficient but often a superior choice compared to a chaotic jumble of distributed services.

Monolith at the Swiss National Expo in 2002, built by Jean Nouvel

One line that particularly resonated was DHH’s reference to the fundamental rule of distributed computing: "Don’t distribute your computing if you can avoid it!" Every time a process is split into separate services, a new world of complexity is introduced, encompassing network calls, fallible connections, synchronization challenges, and significant deployment and DevOps overhead.

DHH persuasively argues that microservice architectures are appropriate for massive companies with thousands of engineers working on independent components, not for an average product team of 5, 10, or even 50 developers. Copying the architectures of Google or Amazon when your organization isn’t operating at their scale is essentially "cargo culting."

DHH in Ruby on Rails: The Documentary

"The Majestic Monolith" essay gave me the confidence to challenge the reflexive adoption of microservices. DHH not only defends monoliths but celebrates them, urging developers to embrace them with pride and make them "majestic." A well-designed monolith, featuring clear modular boundaries but deployed as a single integrated system, offers tremendous advantages for small teams. It’s simpler to develop, test, deploy, and understand. Typically, there’s one database, one deployable unit, and a single place to investigate if issues arise.

The essay introduced me to the mantra "You are not Amazon" as a gentle reminder to design systems for my specific scale and complexity, rather than blindly imitating others.

5. The Joel Test (2000) by Joel Spolsky

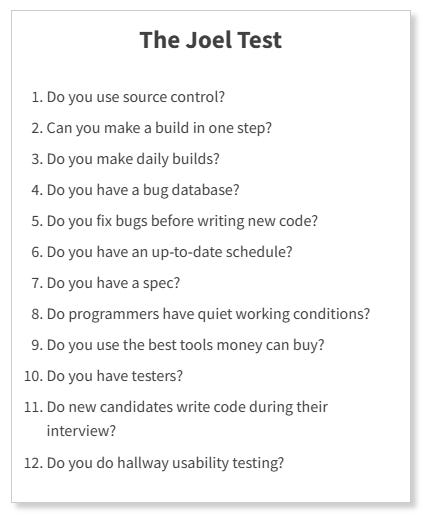

Sometimes profound insights are delivered in simple forms. "The Joel Test" is one such example. In a concise blog post, Joel Spolsky presented 12 yes-or-no questions designed to quickly assess the health of a software team and its development process. Essentially, it’s a quick checklist:

This brief test, taking about three minutes, provides an immediate sense of a team’s effectiveness. And it works. I’ve utilized The Joel Test as an initial diagnostic tool in every team I’ve joined or led, and it has never failed to quickly highlight gaps or dysfunctions.

After repeatedly using this test, I’ve internalized it as a guiding principle for team practices. When I became a team lead, I introduced my team to The Joel Test during a retrospective. We scored approximately 8 out of 12 at the time; we identified the four "No" answers and dedicated the following quarter to addressing them. The improvements were evident in our output: fewer bugs, faster onboarding for new developers, and a greater sense of control.

Joel suggests that a score of 12 is perfect, 11 is tolerable, and 10 or lower indicates serious underlying problems. It serves as a quick litmus test. While not exhaustive (it doesn’t cover aspects like code quality or detailed architecture), it effectively addresses the essentials of software engineering hygiene.
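For a retrospective, the scoring is simple enough to sketch in a few lines of Python. The twelve questions are Joel's; the joel_score helper and the sample answers are hypothetical.

```python
JOEL_TEST = [
    "Do you use source control?",
    "Can you make a build in one step?",
    "Do you make daily builds?",
    "Do you have a bug database?",
    "Do you fix bugs before writing new code?",
    "Do you have an up-to-date schedule?",
    "Do you have a spec?",
    "Do programmers have quiet working conditions?",
    "Do you use the best tools money can buy?",
    "Do you have testers?",
    "Do new candidates write code during their interview?",
    "Do you do hallway usability testing?",
]


def joel_score(answers):
    """Return (score, gaps) for a list of 12 yes/no answers in question order."""
    if len(answers) != len(JOEL_TEST):
        raise ValueError("expected one answer per question")
    score = sum(1 for yes in answers if yes)
    gaps = [question for question, yes in zip(JOEL_TEST, answers) if not yes]
    return score, gaps


# Hypothetical team answers: four "No" entries, so the score is 8.
answers = [True, True, False, True, True, False, False, True, True, True, True, False]
score, gaps = joel_score(answers)
print(f"{score} out of {len(JOEL_TEST)}")
for question in gaps:
    print("No:", question)
```

The list of gaps is the useful output: it becomes the improvement backlog for the next quarter.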

6. How to Design a Good API and Why It Matters (2007) by Joshua Bloch

As I transitioned from writing small scripts to designing libraries and services for others to consume, I recognized the critical yet complex nature of API design. Joshua Bloch’s talk/essay, "How to Design a Good API and Why it Matters," became my definitive guide for API design. It fundamentally reshaped how I design and evaluate interfaces.

Bloch’s core messages can be distilled into a few key rules:

- Public APIs, like diamonds, are forever. You typically get one opportunity to design an API correctly, so significant upfront effort is crucial. This taught me that once an API—whether a library method or a service endpoint—is published, altering it becomes challenging because users inevitably build dependencies on it.

- APIs should be easy to use and hard to misuse. A good API simplifies common tasks while making incorrect usage difficult or impossible. For instance, if a class shouldn’t be directly instantiated, provide a factory or builder pattern to guide proper usage instead of leaving potential "foot-guns" for developers.

- Good naming and documentation are integral to API design. Bloch emphasized that "names matter" and that every API forms a small language of its own. If I struggle to devise a clear name for a method, it often signals that the method’s purpose is ambiguous or that it’s attempting to do too much.
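As a small illustration of "easy to use and hard to misuse," here is a Python sketch in which a timeout can only be obtained through named factories that state the unit. The Timeout type and the fetch function are hypothetical, not taken from Bloch's talk. Python has no private constructors, so the leading underscore on the field is a convention rather than an enforced barrier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Timeout:
    """Hypothetical value type; construct it via the named factories below."""

    _millis: int

    @classmethod
    def from_seconds(cls, seconds: float) -> "Timeout":
        return cls._checked(round(seconds * 1000))

    @classmethod
    def from_millis(cls, millis: int) -> "Timeout":
        return cls._checked(millis)

    @classmethod
    def _checked(cls, millis: int) -> "Timeout":
        # A single place enforces the invariant for every factory.
        if millis <= 0:
            raise ValueError("timeout must be positive")
        return cls(millis)

    @property
    def millis(self) -> int:
        return self._millis


def fetch(url: str, *, timeout: Timeout) -> str:
    # Keyword-only and typed: a bare number cannot stand in for a Timeout
    # under a type checker, so the seconds-or-milliseconds guess never arises.
    return f"GET {url} (timeout {timeout.millis} ms)"


print(fetch("https://example.com", timeout=Timeout.from_seconds(5)))
```

The call site reads unambiguously, and the most likely mistake (passing 5 and meaning seconds where milliseconds are expected) has nowhere to hide.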

After internalizing Bloch’s guidance, I instituted a new practice: API reviews. Whenever we design a new module or service interface, I gather a few engineers (sometimes from other teams) to simulate API usage before implementation. We essentially role-play as API consumers: Is instantiation obvious? Does the workflow make sense? Are we exposing too much functionality that could be misused? This practice has proactively identified numerous issues.

Bloch also highlighted the importance of evolution: a good API should be extensible in the future, where feasible. This influenced me to design with "future-proofing" in mind. For example, I now use interface types instead of concrete classes if I anticipate needing to change the underlying implementation later, or I reserve unused enum values for future expansion. Crucially, it taught me to avoid breaking changes. If an API requires modification, the preference is to introduce new methods or endpoints rather than altering the contracts of existing ones, as breaking changes cause significant pain for dependent users.

7. The Rise of “Worse is Better” (1989) by Richard P. Gabriel

Originating from the Lisp community in the late 1980s, Richard Gabriel’s "The Rise of Worse is Better" presents a timeless software philosophy that significantly influenced my approach to architecture and product design. The premise is that "worse" solutions often prevail over "better" ones in real-world scenarios, where "worse" and "better" carry specific definitions.

In Gabriel’s terminology, the "MIT approach" to design (also known as "the Right Thing") prioritizes completeness, consistency, and correctness, aiming for an ideal system. Conversely, the "New Jersey approach" ("worse is better") values simplicity and practicality, even if the solution is incomplete or slightly less elegant.

Gabriel’s compelling argument is that the New Jersey style (exemplified by Unix and C) tends to dominate in the marketplace over the MIT style (classic Lisp machines) because simpler systems achieve adoption and can evolve, while perfect ones often never leave the lab. This was eye-opening, explaining many historical outcomes: why C and Unix, despite their flaws, became dominant, while more "perfect" systems faded away. Unix and C were simple enough to be ported to smaller machines, relatively easy to understand, and met just enough requirements to be useful. Once widely adopted, they could be gradually improved. As Gabriel articulated, a software system that’s 50% ideal but widely used will spread and, over time, potentially evolve to 90% of the perfect vision, whereas a system striving for 100% ideal from the outset often never reaches that goal, or does so too late, after the "worse" solution has already taken over the world.

Understanding "worse is better" altered my pursuit of perfection. By nature, I appreciate elegant and perfect solutions. However, my experience has shown that a simple solution that is good enough today consistently outperforms a perfect solution delivered next year. "The Rise of Worse is Better" taught me that sometimes, "good enough" is not merely adequate, but actually the optimal strategy for long-term success. Simplicity possesses its own inherent quality. In a world of finite time and resources, a straightforward system that people can readily use and adapt will often outcompete a "perfect" system perpetually stuck in development.

8. The Grug-Brained Developer (2022) by Carson Gross

This essay is unique, featuring a caveman-style character named "Grug" who offers straightforward yet surprisingly insightful programming advice. "The Grug-Brained Developer," though written in a playfully sarcastic tone, delivered profound truths about over-engineering and complexity.

The central theme is that modern developers frequently make things unnecessarily complex, and that a "grug brain"—simple, fundamental thinking—can often yield superior outcomes.

The Grug-Brained Developer

One of the most humorous and insightful parts is where Grug identifies the "Eternal Enemy": complexity. In Grug’s words: "complexity bad. say again: complexity very bad." This refrain is both amusing and accurate, explaining in simple terms what many developers learn the hard way: every unit of complexity in a codebase or system creates opportunities for errors and developer confusion.

Grug’s advice often boils down to saying "no" to unnecessary changes, features, or abstractions. His blunt phrasing, "No, grug not build that abstraction," is a caricature, but it genuinely made me more comfortable pushing back against premature or excessive complexity. As I’ve advanced into senior roles, I’ve found that protecting the codebase from undue complexity—whether by resisting a trendy new microframework or avoiding a premature microservice split (referencing "Majestic Monolith")—is one of the most valuable contributions one can make.

Another aspect the Grug essay validated is the importance of iteration and emergent design. Grug discusses avoiding early factoring or abstraction. Instead, build things simply until the problem is truly understood, then refactor as needed. The "grug" approach prioritizes creating something tangible first, even if not perfectly abstract, and then evolving it as clear pain points emerge.

9. Software Quality at Top Speed (1996) by Steve McConnell

Steve McConnell’s article, "Software Quality at Top Speed," transformed my professional approach by demonstrating that clean code and rapid delivery are not conflicting goals. The conventional wisdom often suggests that to achieve speed, one must cut corners on quality, skip tests, bypass design, quickly produce code, and fix issues later.

McConnell, author of the acclaimed book "Code Complete," uses data to argue the opposite: higher quality actually leads to faster development. As he states, projects with the lowest defect rates also boast the shortest schedules. When I first encountered this, it seemed counterintuitive, but the more I reflected and observed it in real projects, the more it proved true.

Steve McConnell's book Code Complete

The article cites studies (from sources like IBM and Capers Jones) revealing that poor quality is a significant contributor to schedule overruns and even project cancellations. Essentially, every bug is a time thief. When development is rushed, and defects are introduced, teams inevitably repay that time (with interest) through debugging, retesting, and firefighting in production.

McConnell provides concrete examples: a team under pressure might reduce design and code review time to "save time," but they end up spending more time later in testing and fixing cycles, or even rewriting buggy components. One compelling anecdote from the article illustrated the true cost of a shortcut: a team hastily implemented a feature to meet a deadline, only for it to cause significant problems months later, necessitating a major rework. McConnell analyzed how the supposed shortcut ultimately consumed more overall time—first building the hack, then debugging its consequences, and finally removing it to implement the solution properly.

McConnell also highlights practices such as code reviews, design reviews, and automated testing as high-leverage activities. For instance, code inspections can eliminate a large percentage of defects early in the development process, dramatically reducing the burden of testing and debugging. As McConnell notes, projects that achieve over 95% bug removal before release tend to meet their schedules and maintain customer satisfaction. This became a personal quality benchmark: aiming to catch virtually everything before it reaches production.
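To see how several modest quality steps can add up to roughly 95% removal, here is a back-of-the-envelope Python calculation. It assumes each activity independently catches a fixed fraction of the defects that reach it. The per-stage rates are illustrative assumptions, not figures from McConnell's article.

```python
from math import prod

# Assumed, illustrative catch rates per activity (not from the article).
STAGE_EFFICIENCY = {
    "design review": 0.55,
    "code review": 0.60,
    "automated tests": 0.50,
    "system testing": 0.50,
}


def cumulative_removal(efficiencies):
    """Fraction of defects removed when stages are applied in sequence.

    Each stage lets (1 - e) of the remaining defects through, so the
    fraction escaping all stages is the product of those terms.
    """
    return 1 - prod(1 - e for e in efficiencies)


total = cumulative_removal(STAGE_EFFICIENCY.values())
print(f"cumulative defect removal: {total:.1%}")
```

With these assumed rates the pipeline removes about 95.5% of defects, while dropping the two review stages leaves only 75%. No single step has to be heroic, but skipping the early ones "to save time" lets far more defects through to the expensive stages.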

10. Don’t Call Yourself a Programmer (2011) by Patrick McKenzie

This essay by Patrick McKenzie (better known as patio11) focused less on coding itself and more on how to frame my career and perceived value as a software engineer. McKenzie’s central argument is that "programmer" as a job title can pigeonhole you as a commodity in the eyes of businesses.

It’s more advantageous to describe yourself in terms of the business value you create, rather than the specific technical activities you perform.

McKenzie wrote that "programmer" sounds, to business stakeholders, like an "anomalously high-cost peon who types some mumbo-jumbo into some other mumbo-jumbo": essentially, someone potentially replaceable or outsourceable. This hit home, and I realized he was right. Non-technical executives often don’t fully comprehend the act of coding; they only know it’s expensive and wish they needed less of it. Yet, engineers often proudly adopt the label "Programmer."

McKenzie suggests that instead, you present yourself as "someone who increases revenue or reduces costs"; the coding becomes incidental to achieving that outcome. This was a revelation, shifting my perspective from skills-centric ("I know X language, I can build Y") to value-centric ("I solve Z problem for businesses, which saves them $N or helps them gain M customers").

I began rewriting my resume, LinkedIn profile, and even how I communicated in interviews or performance reviews. For example, instead of stating, "Implemented a .NET service to process e-commerce transactions," I would now say, "Built an online payment solution that processed $10M in transactions in the first year, increasing our company’s revenue by 5%."

This essay also introduced me to the concept of profit centers vs. cost centers within a company, helping me understand the financial dynamics of the organizations I worked for. Programmers are typically viewed as cost centers (a necessary expense), while roles like sales or trading are profit centers (they directly generate revenue). This is particularly true for service companies. McKenzie’s point is that by aligning yourself with profit—working on projects that directly drive revenue or becoming the go-to person for cost-saving solutions—you secure a much stronger career position.

11. How To Become a Better Programmer by Not Programming (2007) by Jeff Atwood

When I first read the title of Jeff Atwood’s blog post, "How To Become a Better Programmer by Not Programming," I did a double-take. Not programming? Isn’t the common mantra "practice, practice, practice"? However, Atwood’s piece makes a crucial point: beyond a certain foundational level of coding skill, what distinguishes great developers isn’t simply more coding, but rather "the everything else."

In essence, to truly improve, you must broaden your skills beyond just writing code.

Jeff Atwood blog - aka Coding Horror

Bill Gates is famously quoted as saying that after three to four years of programming, one tends to plateau in pure coding ability. Additional years don’t necessarily make you much better at algorithmic coding. Atwood (and Gates) suggest that to continue improving thereafter, you need to understand the context surrounding the code: the users, the domain, collaboration with others, design principles, and so forth. Jeff writes, "the only way to become a better programmer is by not programming"—encouraging developers to put down the IDE and broaden their perspective.

I applied this advice in several concrete ways:

- I began learning more about the business domain I was working in. Instead of viewing this as someone else’s responsibility, I engaged more with product managers, attended customer feedback sessions, and learned the industry’s vocabulary, becoming more product-minded as an engineer.

- I invested more effort in communication and people skills. Jeff highlights that excellent engineers often excel at bridging engineering with other departments, such as marketing and customer service. This motivated me to volunteer to present engineering plans to various departments, mentor junior colleagues, and coordinate more effectively with QA and Operations.

- I diversified my technical interests. I started reading more about design, UX, and even fields like graphic design or systems thinking. Although primarily a backend engineer, I’ve dabbled in front-end work and studied some data science concepts, not to master them all, but to expand my horizons.

Atwood’s essay essentially urged me to "get out of the coding bubble." I can attest that following this advice has made me a much better developer in a holistic sense. I’ve observed developers who only code and disregard everything else; they often reach a ceiling. They might be proficient at churning out features, but those features might miss the mark, be difficult to integrate, or they might struggle within a team environment. Conversely, developers who dedicate time to non-coding activities—thinking, discussing, and learning about the broader world—end up making more impactful contributions when they do code.

Atwood's "The Best Code is No Code At All" (2007) also influenced me deeply, where he states what every experienced developer knows but often hates admitting: code is a liability, not an asset. Every line written creates a future maintenance burden, and every new feature multiplies the surface area for bugs. The essay opens with Rich Skrenta’s observation that code rots, requires maintenance, and inevitably contains bugs. But Atwood delves deeper: the problem isn’t just the code, it’s developers' instinct to solve every problem with more code. This instinct, he argues, is our adversary. Wil Shipley’s framework makes the tradeoffs explicit: every coding decision balances brevity, features, speed, time spent, robustness, and flexibility. These dimensions often oppose each other. The solution? Start with brevity, and increase other dimensions only as testing dictates.

A discussion on X (formerly Twitter) about Wil Shipley's framework

Conclusion

These essays, each in its own unique way, have profoundly shaped my philosophy and approach to software development. For those who haven’t yet encountered them, I highly recommend a read; they might just transform your perspective on software, too. Ultimately, the common thread weaving through all of them is a wisdom that extends beyond mere code: whether it's about steering clear of hype, designing systems that prevent invalid states, appreciating the lessons embedded in legacy code, right-sizing architectural decisions, mastering complexity, investing in quality, or stepping back to grasp the larger picture. They collectively speak to the craft and culture essential for creating excellent software. Each essay provided an insight that I carry into my daily work, and together, they form a toolkit of principles I wish I had understood from the very beginning. I hope that sharing them, and what I’ve learned, provides something valuable for you to take away. Happy coding, and happy not-coding!