Addressing a Common Pitfall for LangGraph Users: The Power of Dynamic Guidelines

Many LangGraph users struggle with single-route supervisor agents in multi-topic conversations. Discover dynamic guidelines and the Parlant framework for more coherent, versatile AI interactions.

Every LangGraph user we encounter seems to be making the same mistake!

LangGraph users often leverage the popular "supervisor pattern" to construct conversational agents. This pattern involves a central supervisor agent that analyzes incoming queries and routes them to specialized sub-agents. Each sub-agent is designed to handle a specific domain, such as returns, billing, or technical support, using its own distinct system prompt.

This approach works well when the user's intent has a single, clear focus. The fundamental problem is that the supervisor always selects exactly one route.

Consider a scenario where a customer asks, “I need to return this laptop. Also, what’s your warranty on replacements?” The supervisor would typically route this to the "Returns Agent," which is highly proficient in handling returns but completely unaware of warranty policies. Consequently, the agent might ignore the warranty question, admit its inability to help, or, even worse, hallucinate an answer – none of which provides a satisfactory user experience.
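To make the failure mode concrete, here is a minimal sketch of single-route selection in plain Python. It is not LangGraph code: the route names and keyword lists are hypothetical, and the keyword count only stands in for whatever intent classifier a real supervisor would use.

```python
# Toy illustration of a single-route supervisor (not LangGraph code).
# Route names and keyword lists are hypothetical.
ROUTES = {
    "returns_agent": ["return", "refund"],
    "warranty_agent": ["warranty", "guarantee"],
    "billing_agent": ["invoice", "charge", "billing"],
}


def score_routes(query: str) -> dict[str, int]:
    """Count keyword hits per route; a stand-in for an LLM intent classifier."""
    text = query.lower()
    return {name: sum(kw in text for kw in kws) for name, kws in ROUTES.items()}


def supervisor_route(query: str) -> str:
    """Pick exactly one route, which is what a single-route supervisor does."""
    scores = score_routes(query)
    return max(scores, key=scores.get)


def relevant_routes(query: str) -> list[str]:
    """List every route the query actually touches."""
    return [name for name, hits in score_routes(query).items() if hits > 0]


query = "I need to return this laptop. Also, what's your warranty on replacements?"
chosen = supervisor_route(query)
dropped = [route for route in relevant_routes(query) if route != chosen]
print(chosen, dropped)  # prints: returns_agent ['warranty_agent']
```

The query touches two routes, but only one is selected, so the warranty half of the question never reaches an agent that knows about warranties.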

This issue worsens as conversations evolve, because real users don't think in categories. They naturally blend topics, switch contexts, and still expect the agent to stay coherent and responsive. This isn't a bug that can be patched; it's an inherent limitation of how router patterns operate.

A New Approach: Dynamic Guidelines

Instead of rigidly routing between specialized agents, we propose defining a set of Guidelines. Think of guidelines as modular instructional units, structured like this:

    agent.create_guideline(
        condition="Customer asks about refunds",
        action="Check order status first to see if eligible",
        tools=[check_order_status],
    )

Each guideline comprises two essential components:

- Condition: Specifies when the guideline should be activated.

- Action: Defines what the agent should do once the condition is met.

Based on the user's query, relevant guidelines are dynamically loaded into the agent's context. For instance, if a customer inquires about both returns and warranties, both corresponding guidelines are simultaneously loaded. This enables the agent to provide coherent responses across multiple topics without imposing artificial separations.
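The idea of loading every matching guideline into context can be sketched as follows. This is an illustration only, not Parlant's implementation: the `Guideline` class, the `keywords` field, and the warranty and billing actions are hypothetical, and simple keyword matching stands in for the condition matching a real framework would perform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guideline:
    condition: str
    action: str
    keywords: tuple[str, ...]  # hypothetical stand-in for real condition matching


GUIDELINES = [
    Guideline(
        "Customer asks about refunds",
        "Check order status first to see if eligible",
        ("return", "refund"),
    ),
    Guideline(
        "Customer asks about warranty",
        "Explain the replacement warranty terms",  # hypothetical action
        ("warranty",),
    ),
    Guideline(
        "Customer asks about billing",
        "Look up the latest invoice",  # hypothetical action
        ("invoice", "billing"),
    ),
]


def match_guidelines(query: str, guidelines: list[Guideline]) -> list[Guideline]:
    """Return every guideline whose condition applies, not just the best one."""
    text = query.lower()
    return [g for g in guidelines if any(kw in text for kw in g.keywords)]


def build_context(query: str, guidelines: list[Guideline]) -> str:
    """Render the matched guidelines as instructions for a single agent."""
    matched = match_guidelines(query, guidelines)
    return "\n".join(f"- When: {g.condition}. Then: {g.action}." for g in matched)


query = "I need to return this laptop. Also, what's your warranty on replacements?"
print(build_context(query, GUIDELINES))
```

For the laptop query, both the refund and the warranty guidelines end up in the same context, while the billing guideline is left out.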

This approach is implemented in Parlant, an open-source framework that has garnered over 15k stars on GitHub.

Parlant distinguishes itself by using dynamic guideline matching rather than routing between specialized agents. In every turn, it evaluates all defined guidelines and loads only the pertinent ones, ensuring a consistent and coherent conversational flow across diverse topics. You can explore the full implementation and try it out for yourself on the Parlant GitHub repository.

LangGraph and Parlant: Complementary Strengths

It's important to note that LangGraph and Parlant are not competitors; they are complementary tools.

- LangGraph excels in workflow automation, where precise control over execution flow is paramount.

- Parlant is designed for free-form conversations where users do not adhere to predefined scripts.

The two also combine well. LangGraph can manage complex retrieval workflows inside Parlant's tools, giving you Parlant's conversational coherence alongside LangGraph's orchestration capabilities.

You can find the GitHub repo here → Parlant GitHub repo

The Engineering Effort for Custom Implementation in LangGraph

While it's possible to implement similar guideline-based flows directly within LangGraph, it's crucial to understand the associated costs and significant engineering effort involved.

1. Dynamic Guideline Matching ≠ Intent Routing: Routing based on "returns" versus "warranty" is a straightforward intent classification. Guideline matching, however, demands a sophisticated scoring and conflict-resolution engine. This engine must be capable of evaluating multiple conditions per turn, distinguishing between continuous and one-time actions, managing partial fulfillment and re-application, and weighing conflicting rules based on their priorities and the current conversational scope. Consequently, you'll need to design elaborate schemas, thresholds, and lifecycle rules, moving beyond simple edges between nodes.

2. Essential Verification and Revision Loops: For consistent behavior, particularly in high-stakes environments, incorporating structured self-checks is vital. Checks such as "Did I violate any high-priority rules?" or "Are all facts sourced exclusively from the permitted context?" have to be run and recorded on every turn. This implies a JSON-logged revision cycle, not merely a single LLM call per node.

3. The Challenge of Token Budgeting: Loading all relevant instructions sounds simple, but it is hard to do without exhausting the context window. Doing it well requires ordering policies (e.g., recency over global relevance), deduplication, summarization, slot-filling, and strict per-turn token budgets, both to keep the model focused and to keep costs and latency predictable.
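As a rough sketch of the budgeting step in item 3, the snippet below deduplicates candidate instructions, orders them by recency and then priority, and greedily packs them under a per-turn budget. Everything here is an assumption made for illustration: the `Instruction` fields, the priority scale, and the whitespace word count used in place of a real tokenizer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instruction:
    text: str
    priority: int           # higher means more important (hypothetical scale)
    last_matched_turn: int  # most recent turn in which the condition matched


def estimate_tokens(text: str) -> int:
    """Crude whitespace word count; a real system would use the model's tokenizer."""
    return len(text.split())


def select_within_budget(instructions: list[Instruction], budget: int) -> list[Instruction]:
    # Deduplicate on normalized text, keeping the first occurrence.
    seen: set[str] = set()
    unique: list[Instruction] = []
    for ins in instructions:
        key = " ".join(ins.text.lower().split())
        if key not in seen:
            seen.add(key)
            unique.append(ins)

    # Ordering policy: most recently matched first, then higher priority.
    ordered = sorted(
        unique,
        key=lambda ins: (ins.last_matched_turn, ins.priority),
        reverse=True,
    )

    # Greedy packing: skip anything that would exceed the per-turn budget.
    selected: list[Instruction] = []
    used = 0
    for ins in ordered:
        cost = estimate_tokens(ins.text)
        if used + cost <= budget:
            selected.append(ins)
            used += cost
    return selected


candidates = [
    Instruction("Check order status before discussing refunds", 2, 5),
    Instruction("check order status before discussing refunds", 2, 5),  # duplicate
    Instruction("Explain the replacement warranty terms", 1, 5),
    Instruction("Offer a discount on accessories when the customer seems undecided", 1, 2),
]
chosen = select_within_budget(candidates, budget=12)
print([ins.text for ins in chosen])
```

With a budget of 12 estimated tokens, the two instructions matched in the latest turn fit and the older, longer one is dropped. Summarization and slot-filling are left out of this sketch.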

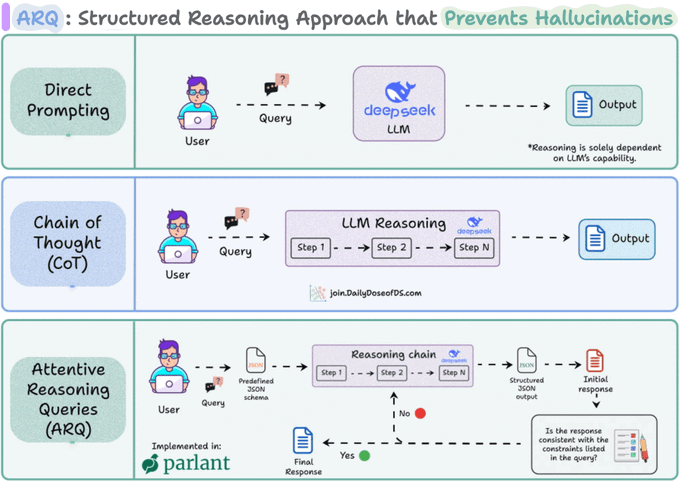

4. Parlant's ARQ Reasoning Technique: The reasoning technique employed by Parlant, known as ARQ (Attentive Reasoning Queries), is designed to give precise control over agent behavior.

In essence, instead of allowing Large Language Models (LLMs) to reason freely, ARQ guides the agent through explicit, domain-specific questions. For instance, before making a recommendation or deciding on a tool call, the LLM is prompted to fill structured keys, such as:

    {
      "current_context": "Customer asking about refund eligibility",
      "active_guideline": "Always verify order before issuing refund",
      "action_taken_before": false,
      "requires_tool": true,
      "next_step": "Run check_order_status()"
    }

This mechanism helps reinforce critical instructions, keeping the LLM aligned mid-conversation and reducing the risk of hallucination. By the time the LLM generates the final response, it has already worked through a series of controlled reasoning steps, rather than the free-form reasoning characteristic of techniques like Chain-of-Thought (CoT) or Tree-of-Thought (ToT).
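One way to consume structured keys like these is to validate them before acting on them. The sketch below is an assumption about how such a check might look, not Parlant's internal code: `parse_reasoning` and `should_call_tool` are hypothetical helpers, and the key names are taken from the example above.

```python
import json

# Expected keys and types, taken from the structured example above.
REQUIRED_KEYS = {
    "current_context": str,
    "active_guideline": str,
    "action_taken_before": bool,
    "requires_tool": bool,
    "next_step": str,
}


def parse_reasoning(raw: str) -> dict:
    """Parse the model's structured answer and reject incomplete or mistyped ones."""
    data = json.loads(raw)
    for key, expected_type in REQUIRED_KEYS.items():
        if key not in data:
            raise ValueError(f"missing key: {key}")
        if not isinstance(data[key], expected_type):
            raise ValueError(f"{key} should be of type {expected_type.__name__}")
    return data


def should_call_tool(reasoning: dict) -> bool:
    """Call the tool only if it is required and the action was not already taken."""
    return reasoning["requires_tool"] and not reasoning["action_taken_before"]


raw_answer = """
{
  "current_context": "Customer asking about refund eligibility",
  "active_guideline": "Always verify order before issuing refund",
  "action_taken_before": false,
  "requires_tool": true,
  "next_step": "Run check_order_status()"
}
"""
reasoning = parse_reasoning(raw_answer)
print(should_call_tool(reasoning))  # prints: True
```

Rejecting an answer with a missing or mistyped key gives the surrounding loop a clear point at which to re-prompt instead of proceeding on partial reasoning.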

Conclusion

If your interactions are narrow, highly guided, or genuinely workflow-oriented, LangGraph alone is a good fit. However, if they are open-ended, span multiple topics, and are compliance-sensitive, you will likely find yourself reimplementing much of the machinery discussed above to keep conversations coherent.

You can explore the full implementation on GitHub and try it out yourself.

Thanks for reading!