Agent Lab: How We Built a Hybrid Team of Humans and AI Agents

Explore the journey of a team that successfully integrated AI agents into their daily workflows, detailing project structure, agent development examples, key lessons learned, and the future of human-AI collaboration.

The idea of a hybrid human-agent workforce, where AI agents collaborate with human teams, has gained significant traction. Salesforce CEO Marc Benioff envisioned this shift, and his perspective resonated deeply with our team. It led us to start an "Agent Lab" project with one aim: build our own hybrid team by developing AI agents and integrating them into our daily workflows.

How We Structured the Project

Our "Agent Lab" project was spearheaded by internal AI champions. To ensure success within our distributed team operating across continents and time zones, several key structural decisions were made:

- Dedicated Communication Channel: A Slack channel was established for ongoing discussions and updates.

- Shared Learning Resources: A resources tab within the Slack channel provided access to learning materials on AI agents and relevant development tools.

- Kickoff Session: An initial meeting aligned goals, shared early ideas, and outlined experimentation and collaboration strategies. Two critical decisions emerged:

  - Formal Goal Integration: Building AI agents became an official quarterly goal tied to bonuses, so the work stayed a priority amid busy schedules.

  - Platform Selection: n8n was chosen as the primary automation platform due to its flexibility and accessibility for both technical and non-technical team members. (Other options considered included Zapier, Make, and LangChain, the last of which has since been adopted for other projects.)

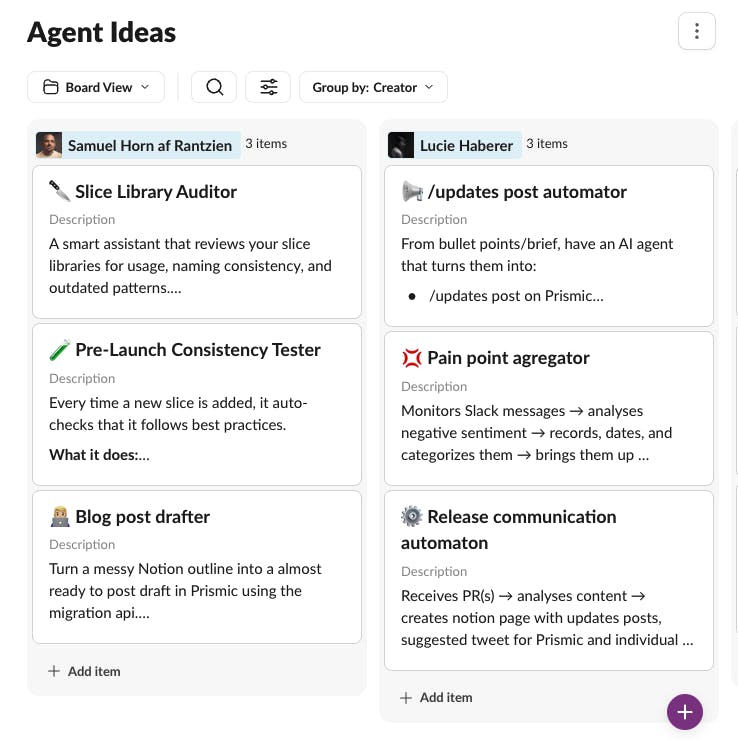

- Agent Idea Database: Over several weeks, a Slack board was used to brainstorm and compile a database of potential agent ideas, allowing for continuous additions.

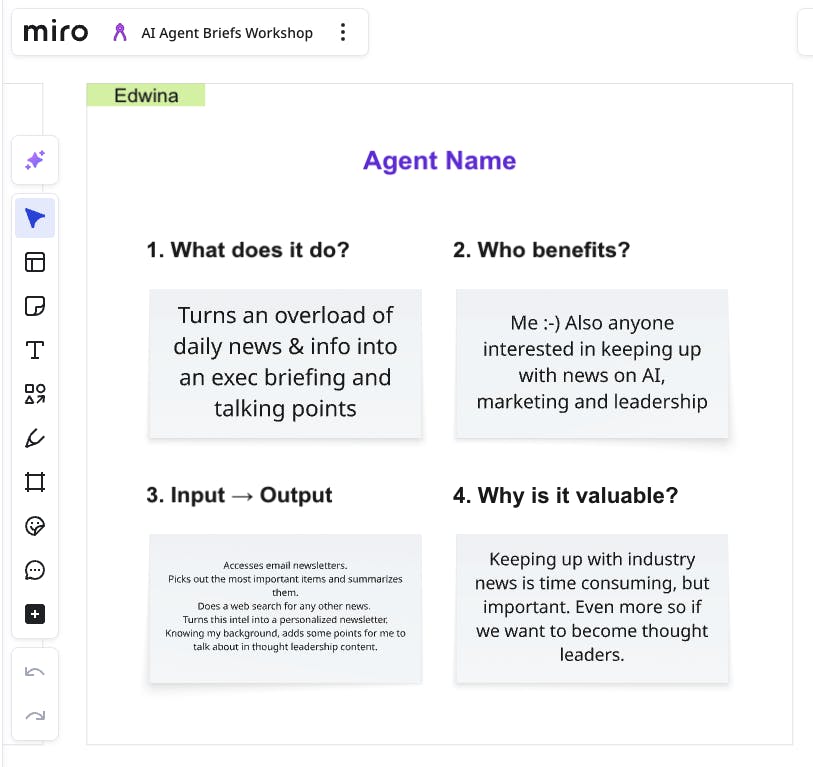

- Live Pitch and Briefing: A workshop was held to pitch ideas, vote on the most promising ones, and create detailed agent briefs. Miro was utilized for this, but physical post-it walls would also suffice for in-person collaboration.

- Weekly Check-ins and 1:1 Jams: A weekly "Agent Lab" meeting was scheduled for demos, progress updates, and roadblock discussions. Additionally, individual "building jams" offered focused, one-on-one support, particularly beneficial for non-technical team members learning best practices in agent structuring, memory integration, JSON handling, and ensuring agents met expectations.

- Continuous Knowledge Sharing: Throughout the building phase, learnings, ideas, and resources were actively shared on Slack, fostering discussions on agent complexity, distinguishing agents from simpler "AI automations," and resolving technical challenges with n8n and connected tools.

What We Actually Built

Our "Agent Lab" yielded several innovative AI agents:

- Executive Briefing Agent: Designed to combat AI news overload, this agent reviews subscribed newsletters, conducts additional web searches, and generates a personalized daily briefing. With access to a personal profile and understanding of work context, it filters for relevance and suggests talking points for thought leadership content.

- AI-Driven Content Workflow (Sam's Agent): This project aimed to test n8n as a full backend for an application, resulting in a three-agent content workflow initially powered by Notion.

  - Idea Generator: Runs daily, pulls inputs (blogs, subreddits, X accounts), builds a content pool, feeds it to an AI agent to generate new post ideas, and stores them in Notion.

  - Post Drafter: Triggered for a specific idea, an AI research agent gathers context via web search, a drafting agent writes a post in a specified tone, and if code is present, another agent generates a styled code image before saving the draft back to Notion.

  - X Poster: Runs daily, checks drafts marked "ready to publish," downloads media, and posts to X (formerly Twitter).

While Notion proved flexible as a UI layer, managing logic in it became cumbersome as the system scaled. This led to the development of a Next.js app for a cleaner interface, with n8n still managing backend logic. Notion was eventually replaced by Convex, a more suitable solution for structured, reactive workflows. It then became clear that consolidating the n8n workflow logic into the Next.js + Convex stack would further simplify the architecture and make the system easier to evolve. While n8n excels at rapid prototyping and advanced automation with minimal coding, a unified and robust foundation is generally preferable for production-ready applications if coding expertise is available.

-

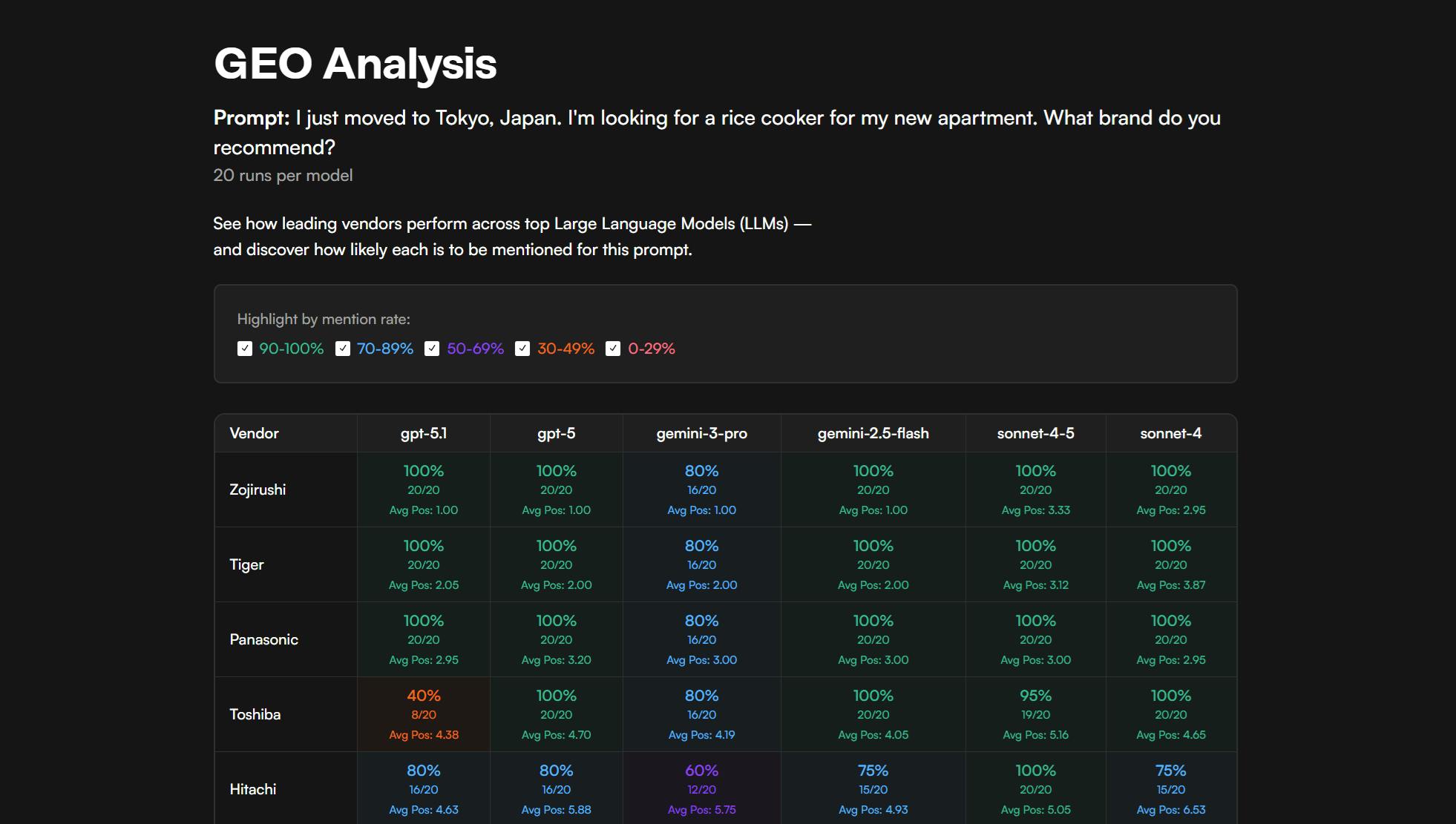

LLM Benchmarking Agent (Lucie's Agent): Inspired by debates on Large Language Model (LLM) performance, this agent benchmarks how frequently various models recommend Prismic for prompts like "What CMS should I use for a Next.js site?" This provides insights into our ranking against competitors.

Instead of using n8n, this agent was implemented directly in code using Vercel’s AI SDK. Recognizing that LLM answers are dynamic, the agent runs weekly polls and reports findings to Slack, tracking ranking evolution and the impact of initiatives. What began as an experiment quickly garnered internal interest, expanding to support on-demand polls for arbitrary prompts (including non-work-related ones). It has since evolved into a widely adopted internal tool across the company.
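
As a rough illustration of the polling idea, the sketch below tallies how often each brand is named across repeated answers to the same prompt. It is written in Python rather than with the Vercel AI SDK the team used, and it is not the team's implementation. The names `mentioned_brands`, `run_poll`, and `ask_model` are hypothetical, and `ask_model` stands in for whatever function actually calls an LLM.

```python
import re
from collections import Counter
from typing import Callable, Iterable


def mentioned_brands(answer: str, brands: Iterable[str]) -> set[str]:
    """Return the brands named in one answer (case-insensitive, whole-word match)."""
    found = set()
    for brand in brands:
        if re.search(rf"\b{re.escape(brand)}\b", answer, flags=re.IGNORECASE):
            found.add(brand)
    return found


def run_poll(
    ask_model: Callable[[str, str], str],  # hypothetical: (model, prompt) -> answer text
    models: list[str],
    prompt: str,
    brands: list[str],
    samples: int = 5,
) -> dict[str, dict[str, float]]:
    """Ask each model the same prompt several times and compute mention rates.

    LLM answers vary between runs, so each model is sampled repeatedly and the
    result is the fraction of answers that mention each brand.
    """
    rates: dict[str, dict[str, float]] = {}
    for model in models:
        tally: Counter[str] = Counter()
        for _ in range(samples):
            tally.update(mentioned_brands(ask_model(model, prompt), brands))
        rates[model] = {brand: tally[brand] / samples for brand in brands}
    return rates
```

Running the same poll on a schedule and storing the returned rates would give the week-over-week trend described above; posting to Slack is left out because it depends on the workspace setup.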

What We Learned

The project offered valuable insights into building and deploying AI agents:

-

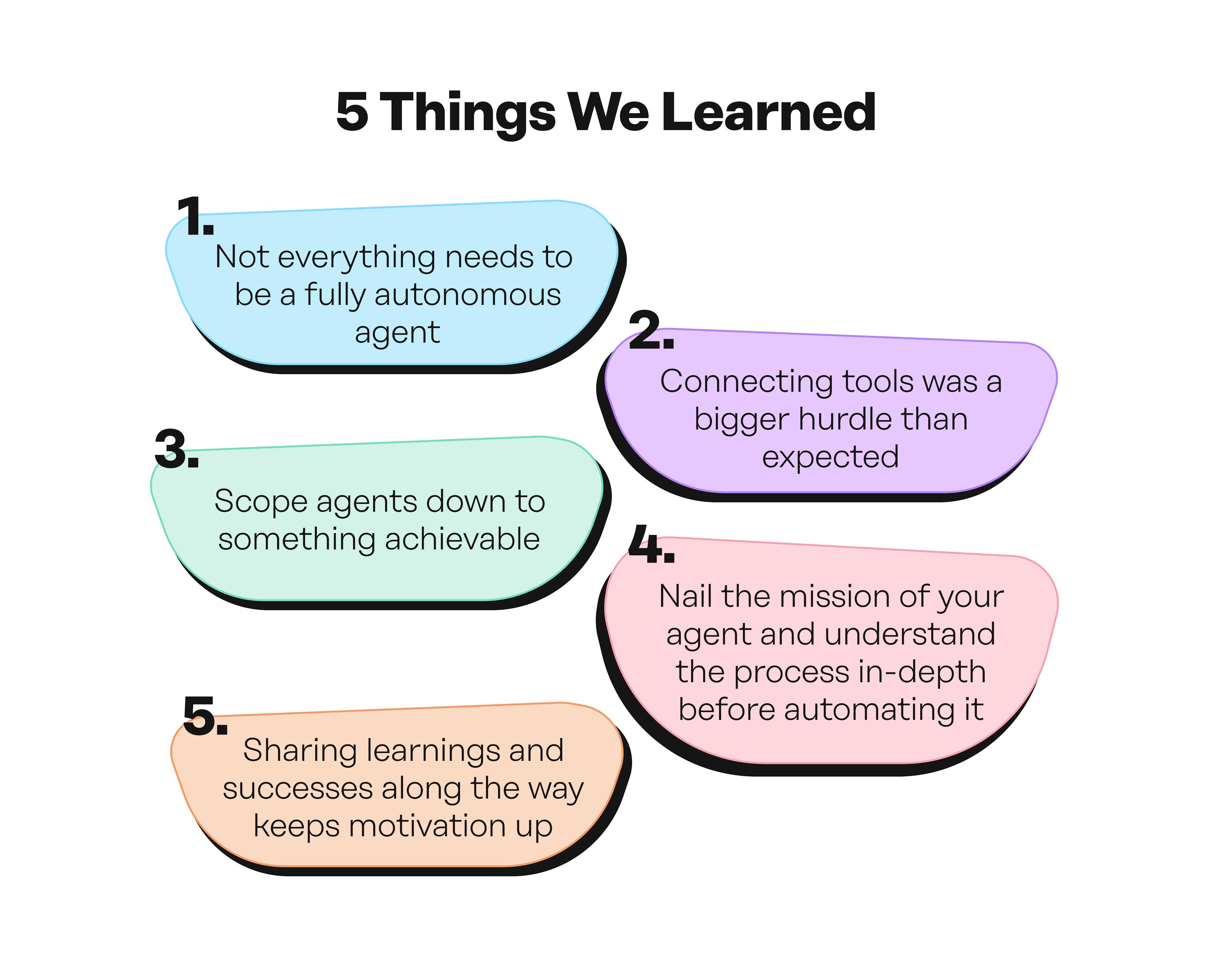

Not Everything Needs to Be a Fully Autonomous Agent: Initially, the team sometimes over-engineered solutions to be fully "agentic" when a simpler, linear workflow with a focused AI agent handling a specific part would have been more effective. For instance, fetching code changes is rigid, but summarizing those changes and assessing documentation impact benefits from a smart agent. Combining rigid and agentic steps proved successful.

- Connecting Tools Was a Bigger Hurdle Than Expected: Integrating multiple tools proved more difficult than anticipated. What looked like simply entering an API key often turned into complex troubleshooting, token exhaustion, quota limits, and unintended outputs. This highlighted the importance of establishing core infrastructure before scaling team-wide development efforts.

- Scoping Agents Down to Something Achievable: Grand initial ideas often exceeded the one-quarter timeframe, and the added complexity led to diminishing returns. What worked was using early planning and experimentation to define a realistic scope, then building Minimum Viable Products (MVPs) iteratively and adding complexity incrementally.

- Nail the Mission of Your Agent and Understand the Process In-Depth Before Automating It: Before automating a process, it's crucial to understand and master it manually. Attempting to automate a poorly understood process, like complex competitor research, without prior hands-on experience often leads to failure. A well-defined process, even for a narrowly scoped "Competitor Intelligence Intern" agent, makes successful automation far more likely and helps prioritize further work.

- Sharing Learnings and Successes Along the Way Keeps Motivation Up: The journey involved waves of excitement, frustration, and performance fluctuations. The dedicated Slack channel and weekly meetings were instrumental in maintaining motivation by providing platforms for sharing progress, learnings, successes, and occasional failures.
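
The first lesson above, combining rigid and agentic steps, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the workflow the team built in n8n. The names `changed_files_from_diff`, `build_report`, and `DocImpactReport` are hypothetical, and `summarize` is a stand-in for any function that sends a prompt to an LLM and returns text.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class DocImpactReport:
    changed_files: list[str]
    summary: str


def changed_files_from_diff(diff_text: str) -> list[str]:
    """Rigid step: read changed file paths from a unified diff, no AI involved."""
    prefix = "+++ b/"
    return [
        line[len(prefix):]
        for line in diff_text.splitlines()
        if line.startswith(prefix)
    ]


def build_report(diff_text: str, summarize: Callable[[str], str]) -> DocImpactReport:
    """Linear workflow: deterministic parsing first, then one focused agent call."""
    files = changed_files_from_diff(diff_text)
    if not files:
        # Nothing changed, so the (costly) model call is skipped entirely.
        return DocImpactReport([], "No changes detected.")
    prompt = (
        "Summarize these code changes and say whether the documentation "
        "needs updating:\n" + diff_text
    )
    # Agentic step: only the judgment-heavy part is delegated to the model.
    return DocImpactReport(files, summarize(prompt))
```

Keeping the model behind a plain callable means the rigid parts can be tested without any API key, which also sidesteps some of the quota and token issues mentioned above.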

What Happened With Our Agents After the Project Ended

Full transparency: not all our agents passed their "probation period." Some served primarily as learning exercises, either because they were over-complicated or because they focused on what could be automated rather than what was truly needed. As Peter Drucker noted, "Efficiency is doing things right; effectiveness is doing the right things."

However, some agents achieved significant effectiveness. Lucie’s LLM tracker, for instance, which monitored our visibility on key topics across various LLMs, quickly caught the attention of the sales team. It has since evolved into a company-wide tool used to prepare for prospect and client meetings related to our GEO landing page builder solution.

Where We Go From Here

The team remains committed to evolving existing agents and building new custom GPTs and agentic or non-agentic AI solutions for daily work, even outside formal project cycles. The "Agent Lab" has solidified the conviction that human-AI collaboration is a powerful path forward.