Benchmarking OpenAI's GPT-5.2/Pro Against Opus 4.5 and Gemini 3 on Real-World Coding Tasks

A comprehensive benchmark comparing OpenAI's GPT-5.2 and GPT-5.2 Pro with Claude Opus 4.5 and Gemini 3 across three challenging real-world coding tasks. Discover performance, code quality, and cost implications for each model.

OpenAI recently launched GPT-5.2 and GPT-5.2 Pro. This report, produced within 24 hours of the release, assesses how the new models compare against Opus 4.5, Gemini 3, and the previous GPT-5.1.

How the Models Were Tested

The models were evaluated using the same three real-world coding challenges from a previous comparison involving GPT-5.1, Gemini 3.0, and Claude Opus 4.5. This allows for a direct assessment of OpenAI's advancements in frontier coding models. Each test began from an empty project within Kilo Code.

The tests included:

- Prompt Adherence Test: Implementing a Python rate limiter with 10 strict requirements, including exact class name, method signatures, and error message formats.

- Code Refactoring Test: Refactoring a 365-line TypeScript API handler that contained SQL injection vulnerabilities, inconsistent naming conventions, and lacked security features.

- System Extension Test: Analyzing an existing notification system architecture and then adding an email handler that conformed to the established design patterns.

Tests 1 and 2 used "Code Mode," while Test 3 used "Ask Mode" followed by "Code Mode." Reasoning effort was set to the highest available option for both GPT-5.2 and GPT-5.2 Pro to maximize their performance.

Test #1: Python Rate Limiter

The prompt required all five models to implement a TokenBucketLimiter class with 10 precise requirements. These included an exact class name, specific method signatures, a particular error message format, and details such as the use of time.monotonic() and threading.Lock().

GPT-5.2 implementing the Python Rate Limiter in Kilo Code.

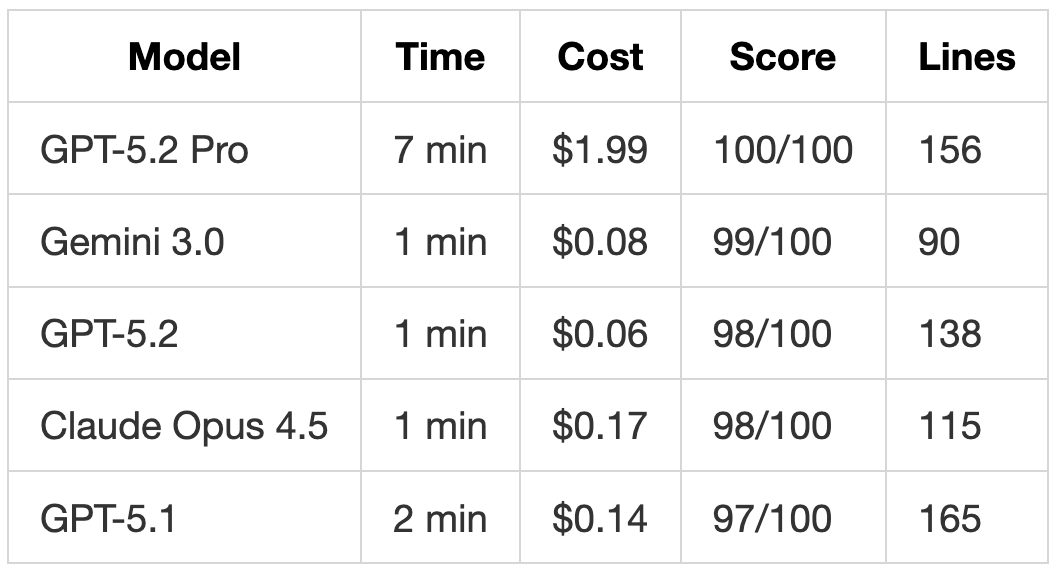

Results:

GPT-5.2 Pro was the sole model to achieve a perfect score, although its completion took 7 minutes. It successfully adhered to the exact constructor signature (initial_tokens: int = None instead of Optional[int]) and named its internal variable _current_tokens to match the required dictionary key in get_stats().

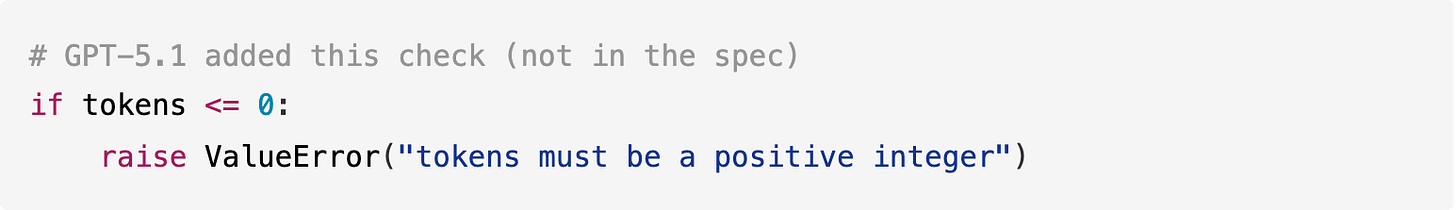

GPT-5.2 demonstrated a significant improvement over GPT-5.1 by eliminating unnecessary exceptions. GPT-5.1 previously added unrequested validation that raised ValueError for non-positive tokens, whereas GPT-5.2 handled the same edge cases gracefully.

This represents a crucial change, as GPT-5.1's defensive additions could have broken systems expecting the method to handle zero tokens. GPT-5.2 now adheres more closely to specifications while still managing edge cases effectively.

GPT-5.2 Pro’s Extended Reasoning

GPT-5.2 Pro dedicated 7 minutes and $1.99 to a task that other models completed in 1-2 minutes at a cost of $0.06-$0.17. This additional time and expense yielded three key improvements:

- Exact Signature Matching: Used int = None instead of Optional[int] or int | None.

- Consistent Naming: Employed _current_tokens internally, aligning with the required dictionary key.

- Comprehensive Edge Cases: Handled tokens > capacity and refill_rate <= 0 by returning math.inf.

Pros and Cons:

- Pros: For a critical component like a rate limiter that will execute millions of times, the 7-minute investment for perfection might be worthwhile.

- Cons: For rapid prototyping, GPT-5.2, completing the task in 1 minute for $0.06, is a more practical choice.

Test #2: TypeScript API Handler Refactoring

The prompt involved a 365-line TypeScript API handler laden with over 20 SQL injection vulnerabilities, mixed naming conventions, absent input validation, hardcoded secrets, and missing features like rate limiting. The models were tasked with refactoring it into clean Repository/Service/Controller layers, adding Zod validation, resolving security issues, and implementing 10 specific requirements.

GPT-5.2 Pro refactoring the TypeScript API handler in Kilo Code.

Results:

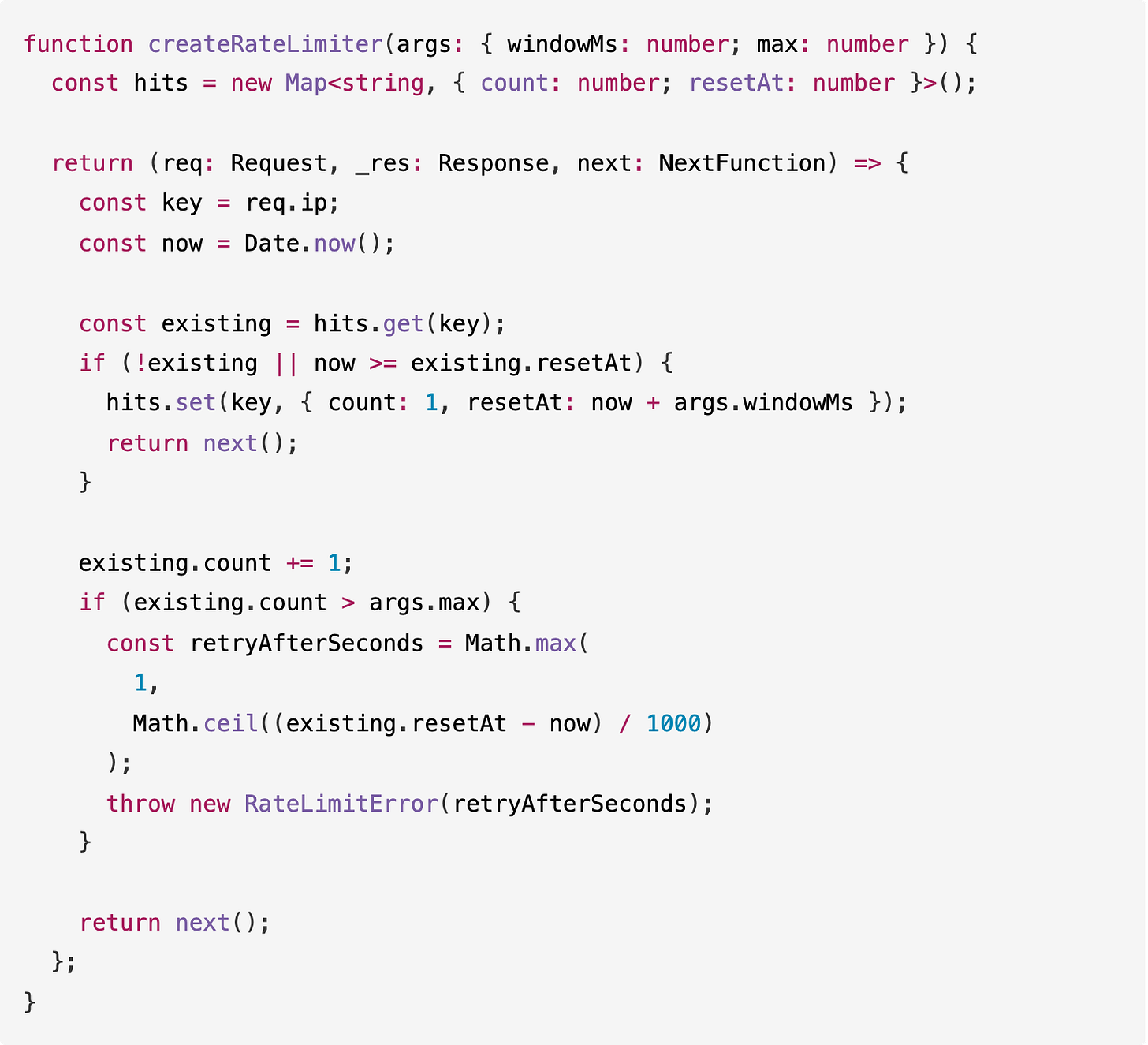

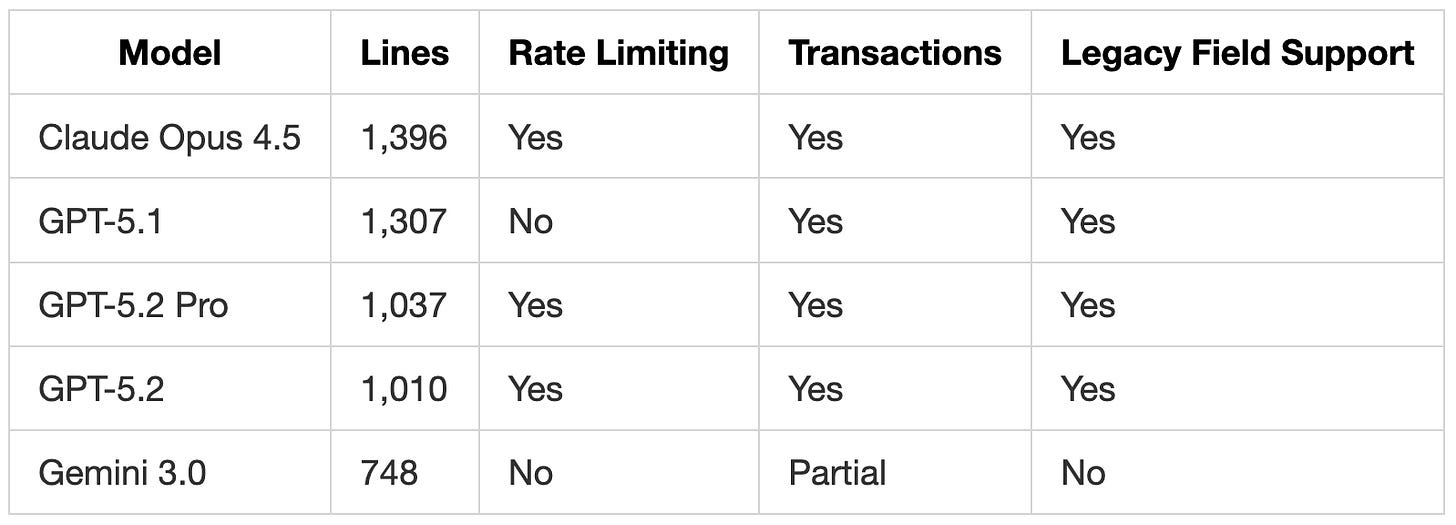

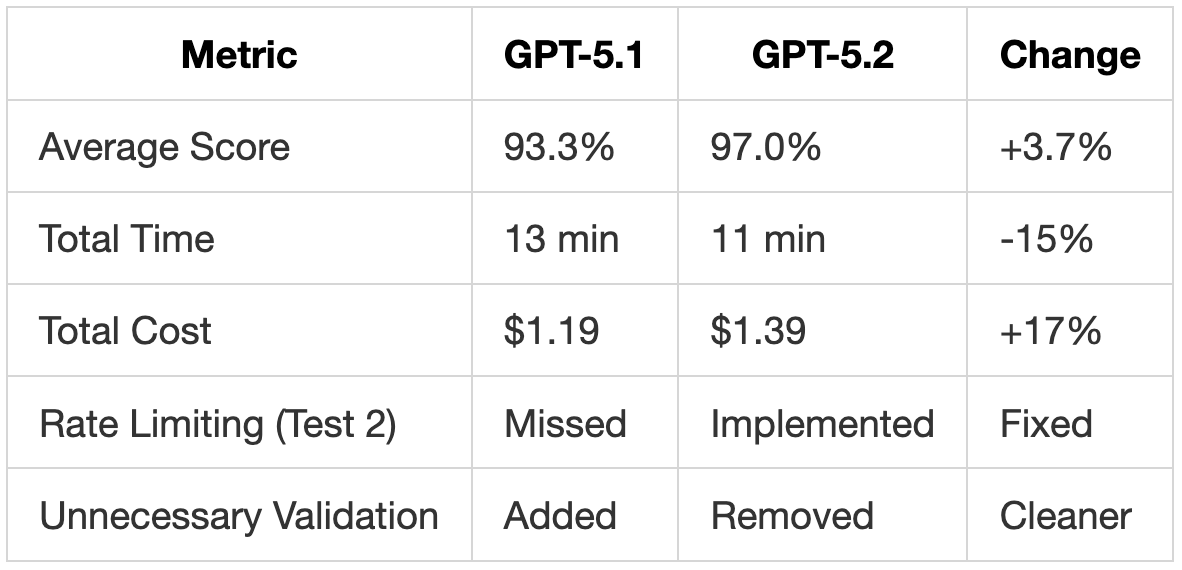

Both GPT-5.2 and GPT-5.2 Pro successfully implemented rate limiting, a feature entirely missed by GPT-5.1 despite being one of the 10 explicit requirements.

GPT-5.2 Implemented Rate Limiting

GPT-5.1 completely ignored the rate limiting requirement. In contrast, GPT-5.2 followed the instructions precisely.

GPT-5.2's implementation included proper Retry-After calculation and used a factory pattern for configurability. Claude Opus 4.5 provided a more comprehensive implementation, adding X-RateLimit-Limit, X-RateLimit-Remaining, and X-RateLimit-Reset headers alongside periodic cleanup of expired entries. GPT-5.2 met the core requirement without these extras.
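To make the pattern concrete, here is a minimal TypeScript sketch of a rate limiter that combines a factory function with a Retry-After calculation. It is not GPT-5.2's actual output: the fixed-window strategy, the in-memory Map, and the names createRateLimiter, RateLimiterOptions, and RateLimitResult are all assumptions made for illustration.

```typescript
// Illustrative sketch only; all names and the fixed-window strategy are assumptions.
interface RateLimiterOptions {
  windowMs: number; // length of one rate-limit window in milliseconds
  maxRequests: number; // requests allowed per key within one window
}

interface RateLimitResult {
  allowed: boolean;
  retryAfterSeconds: number; // 0 when allowed; otherwise the Retry-After value
}

// Factory: each call returns an independent limiter with its own config and state.
function createRateLimiter(options: RateLimiterOptions) {
  const windows = new Map<string, { start: number; count: number }>();

  return function check(key: string, now: number = Date.now()): RateLimitResult {
    const current = windows.get(key);

    // Start a fresh window if none exists or the previous one has expired.
    if (!current || now - current.start >= options.windowMs) {
      windows.set(key, { start: now, count: 1 });
      return { allowed: true, retryAfterSeconds: 0 };
    }

    if (current.count < options.maxRequests) {
      current.count += 1;
      return { allowed: true, retryAfterSeconds: 0 };
    }

    // Over the limit: Retry-After is the time left in the window, rounded up to whole seconds.
    const remainingMs = current.start + options.windowMs - now;
    return { allowed: false, retryAfterSeconds: Math.ceil(remainingMs / 1000) };
  };
}
```

In an HTTP handler, a denied result would typically map to a 429 response with the Retry-After header set to retryAfterSeconds. This sketch never evicts old keys, which is the gap that periodic cleanup of expired entries closes.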

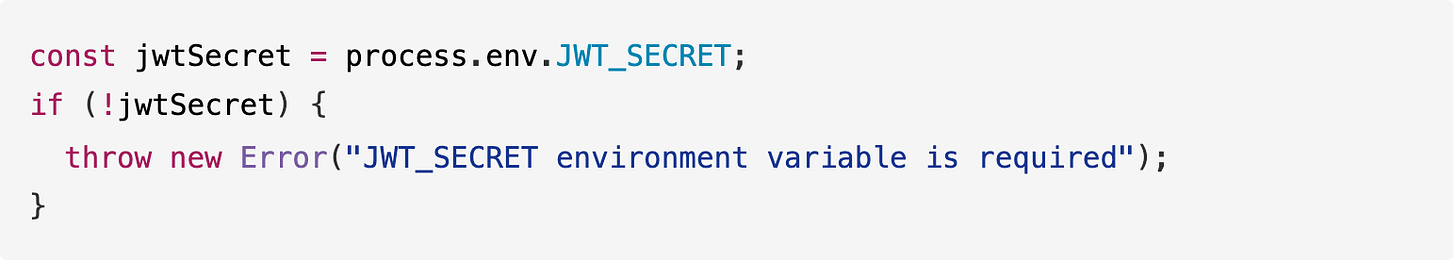

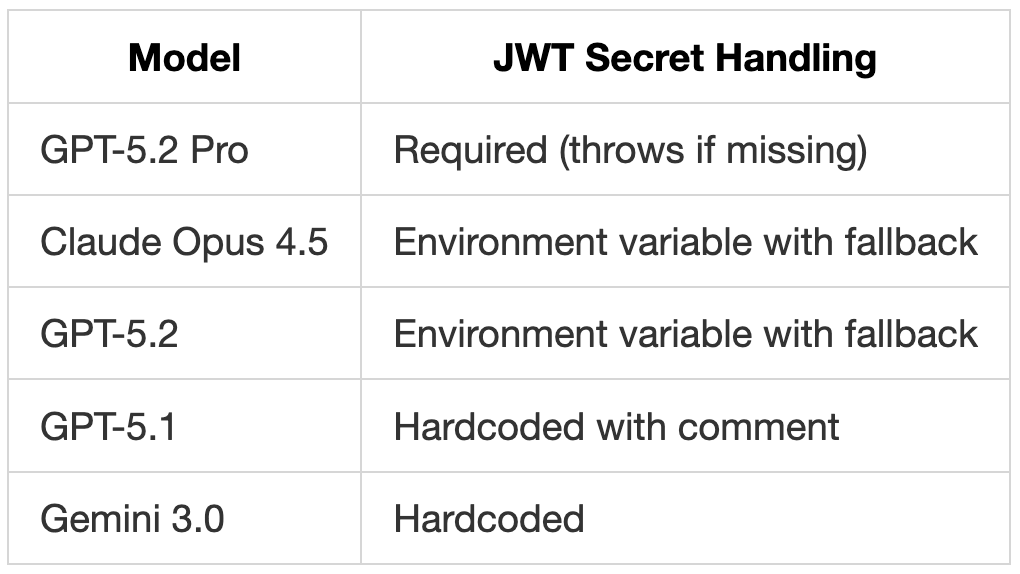

GPT-5.2 Pro Requires JWT_SECRET

GPT-5.2 Pro was the only model to require the JWT secret as an environment variable, throwing an error at startup if it was missing. Other models either hardcoded the secret or used a fallback default.

For production environments, GPT-5.2 Pro's approach is superior as it favors failing fast over running with insecure defaults.
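The difference between the two approaches can be sketched in a few lines of TypeScript. This is an illustration rather than any model's output: the function names, the error message, and the "dev-secret" fallback string are assumptions, and the environment is passed in as a plain object so the sketch has no runtime dependencies.

```typescript
// Illustrative sketch only; function names and the fallback string are assumptions.
type Env = Record<string, string | undefined>;

// Fail-fast: refuse to start when the secret is missing or empty.
function requireJwtSecret(env: Env): string {
  const secret = env["JWT_SECRET"];
  if (!secret) {
    throw new Error("JWT_SECRET environment variable is required");
  }
  return secret;
}

// Fallback pattern, shown for contrast: starts silently with a guessable secret.
function jwtSecretWithFallback(env: Env): string {
  return env["JWT_SECRET"] ?? "dev-secret";
}
```

In a Node.js service, requireJwtSecret(process.env) would be called once at startup, so a misconfigured deployment stops immediately instead of signing tokens with a known default.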

Code Size Comparison

GPT-5.2 generated more concise code than GPT-5.1 (1,010 lines vs. 1,307 lines) while fulfilling more requirements. This reduction in code size was attributed to cleaner abstractions.

Notably, GPT-5.2 matched Claude Opus 4.5's feature set with 27% fewer lines of code.

Test #3: Notification System Extension

This test provided a 400-line notification system that included Webhook and SMS handlers. Models were instructed to:

- Explain the existing architecture (using Ask Mode).

- Add an EmailHandler that matched the established patterns (using Code Mode).

This task specifically evaluated both comprehension of existing systems and the ability to extend code while adhering to an established style.

Results:

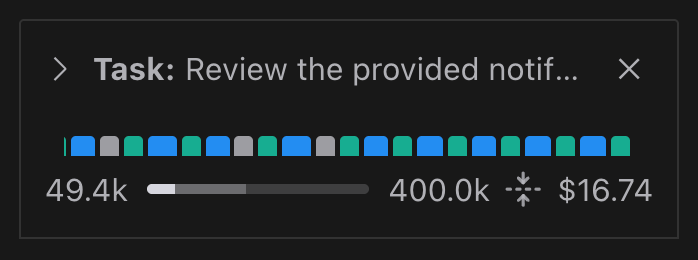

GPT-5.2 Pro achieved the first perfect score on this particular test. It spent a significant 59 minutes reasoning through the problem, which, while impractical for most common use cases, highlights the model's capabilities when allocated sufficient processing time.

GPT-5.2 Pro Context and Cost Breakdown in Kilo Code.

GPT-5.2 Pro Fixed Architectural Issues

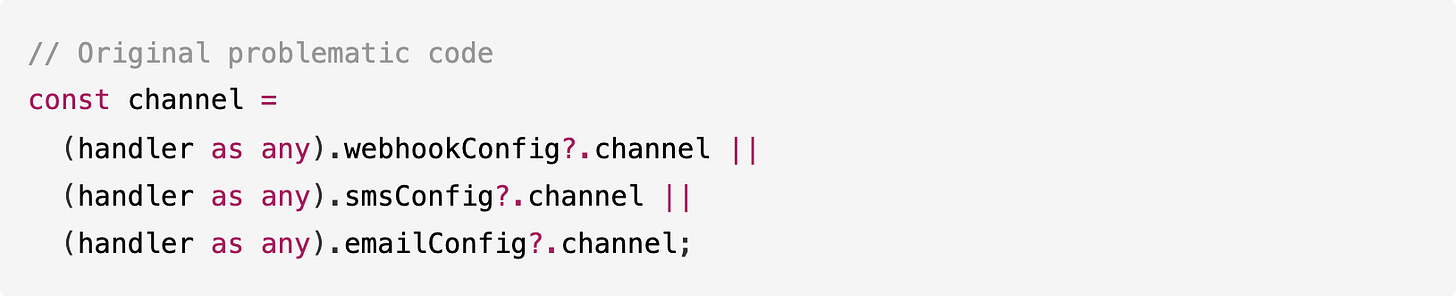

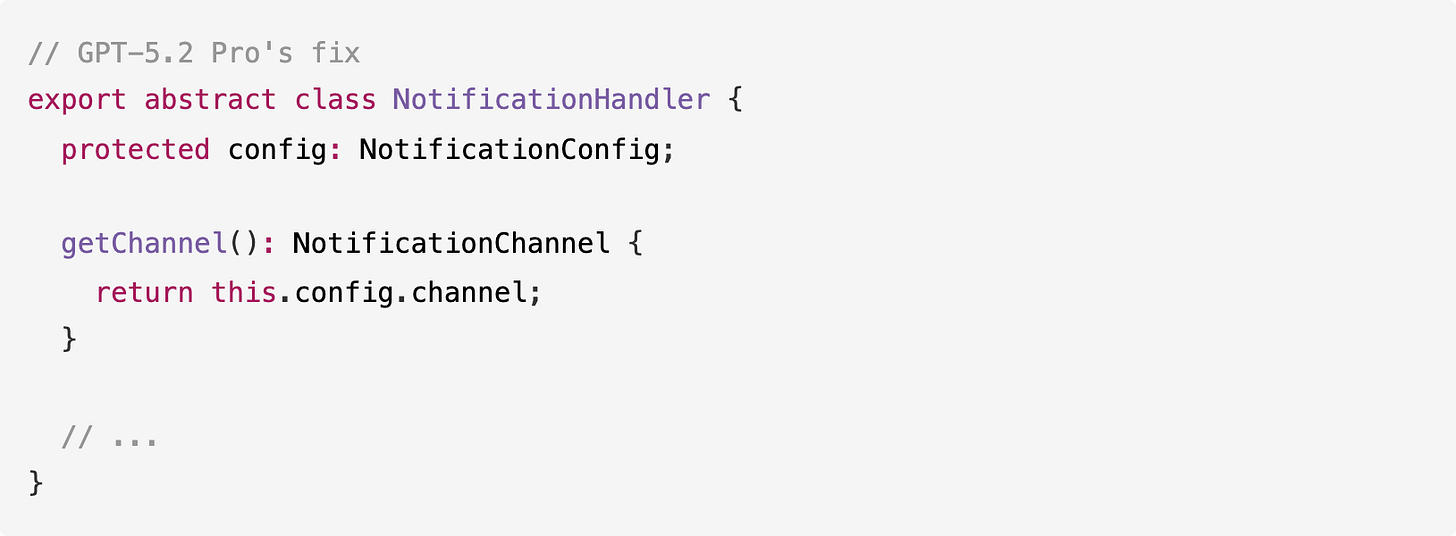

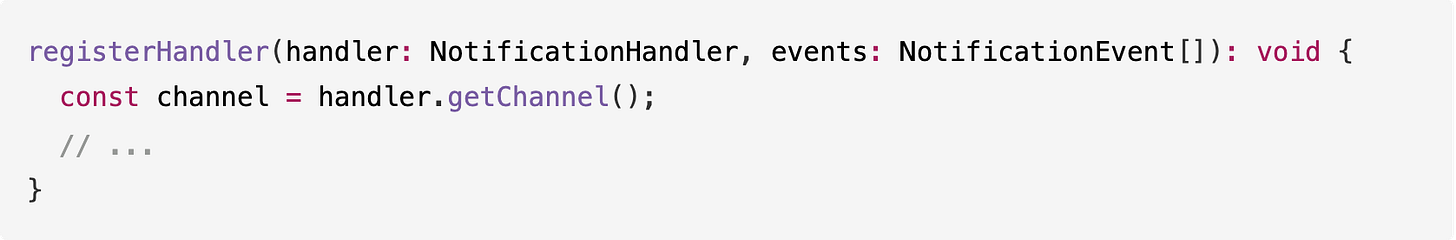

In prior tests, all models identified a fragile pattern within the original code, but none successfully addressed it. The registerHandler method relied on type casting to determine which channel a handler belonged to.

GPT-5.2 Pro was the first model to actually correct this, adding a getChannel() method to the base class and updating registerHandler to use it.
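A minimal TypeScript sketch of this kind of fix follows. The original notification system is not reproduced here, so apart from registerHandler, getChannel, and EmailHandler, every name below (Channel, BaseHandler, NotificationManager, getHandler) and the method shapes are assumptions for illustration.

```typescript
// Illustrative sketch only; apart from registerHandler, getChannel, and EmailHandler,
// every name here is an assumption.
type Channel = "webhook" | "sms" | "email";

abstract class BaseHandler {
  // Each handler declares its own channel, so the manager never guesses via a type cast.
  abstract getChannel(): Channel;
  abstract send(recipient: string, message: string): string;
}

class EmailHandler extends BaseHandler {
  getChannel(): Channel {
    return "email";
  }

  send(recipient: string, message: string): string {
    return `email to ${recipient}: ${message}`;
  }
}

class NotificationManager {
  private handlers = new Map<Channel, BaseHandler>();

  registerHandler(handler: BaseHandler): void {
    // The channel comes from the handler itself rather than from an unchecked cast.
    this.handlers.set(handler.getChannel(), handler);
  }

  getHandler(channel: Channel): BaseHandler | undefined {
    return this.handlers.get(channel);
  }
}
```

Because getChannel() is abstract, the compiler rejects any new handler that forgets to declare its channel, which is what makes this approach less fragile than a cast.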

Furthermore, GPT-5.2 Pro addressed two other issues overlooked by other models:

- Private Property Access: It introduced a getEventEmitter() method instead of directly accessing manager['eventEmitter'].

- Validation at Registration: It implemented handler.validate() calls during registerHandler() execution, rather than deferring validation until send time.
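Both changes can be illustrated with a short TypeScript sketch. It is written under assumptions rather than taken from GPT-5.2 Pro's output: only registerHandler, validate, and getEventEmitter come from the description above, while TinyEmitter, ValidatedHandler, Manager, handlerCount, the event name, and the idea that validate() returns a list of problems are hypothetical.

```typescript
// Illustrative sketch only; apart from registerHandler, validate, and getEventEmitter,
// all names and shapes are assumptions.
type Listener = (payload: string) => void;

// Tiny stand-in for an event emitter so the sketch has no dependencies.
class TinyEmitter {
  private listeners = new Map<string, Listener[]>();

  on(event: string, listener: Listener): void {
    const list = this.listeners.get(event) ?? [];
    list.push(listener);
    this.listeners.set(event, list);
  }

  emit(event: string, payload: string): void {
    for (const listener of this.listeners.get(event) ?? []) {
      listener(payload);
    }
  }
}

interface ValidatedHandler {
  name: string;
  validate(): string[]; // configuration problems; an empty array means valid
}

class Manager {
  private eventEmitter = new TinyEmitter();
  private handlers: ValidatedHandler[] = [];

  // Public accessor replaces bracket access such as manager['eventEmitter'].
  getEventEmitter(): TinyEmitter {
    return this.eventEmitter;
  }

  handlerCount(): number {
    return this.handlers.length;
  }

  registerHandler(handler: ValidatedHandler): void {
    // Validate at registration so a misconfigured handler fails here, not at send time.
    const problems = handler.validate();
    if (problems.length > 0) {
      throw new Error(`Invalid handler ${handler.name}: ${problems.join(", ")}`);
    }
    this.handlers.push(handler);
    this.eventEmitter.emit("handler:registered", handler.name);
  }
}
```

Validating up front means a missing API key or malformed configuration surfaces when the application wires up its handlers, rather than on the first notification that happens to use that channel.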

These fixes underscore the power of extended reasoning, as GPT-5.2 Pro not only implemented the email handler but also proactively improved the overall system architecture.

GPT-5.2’s Email Implementation

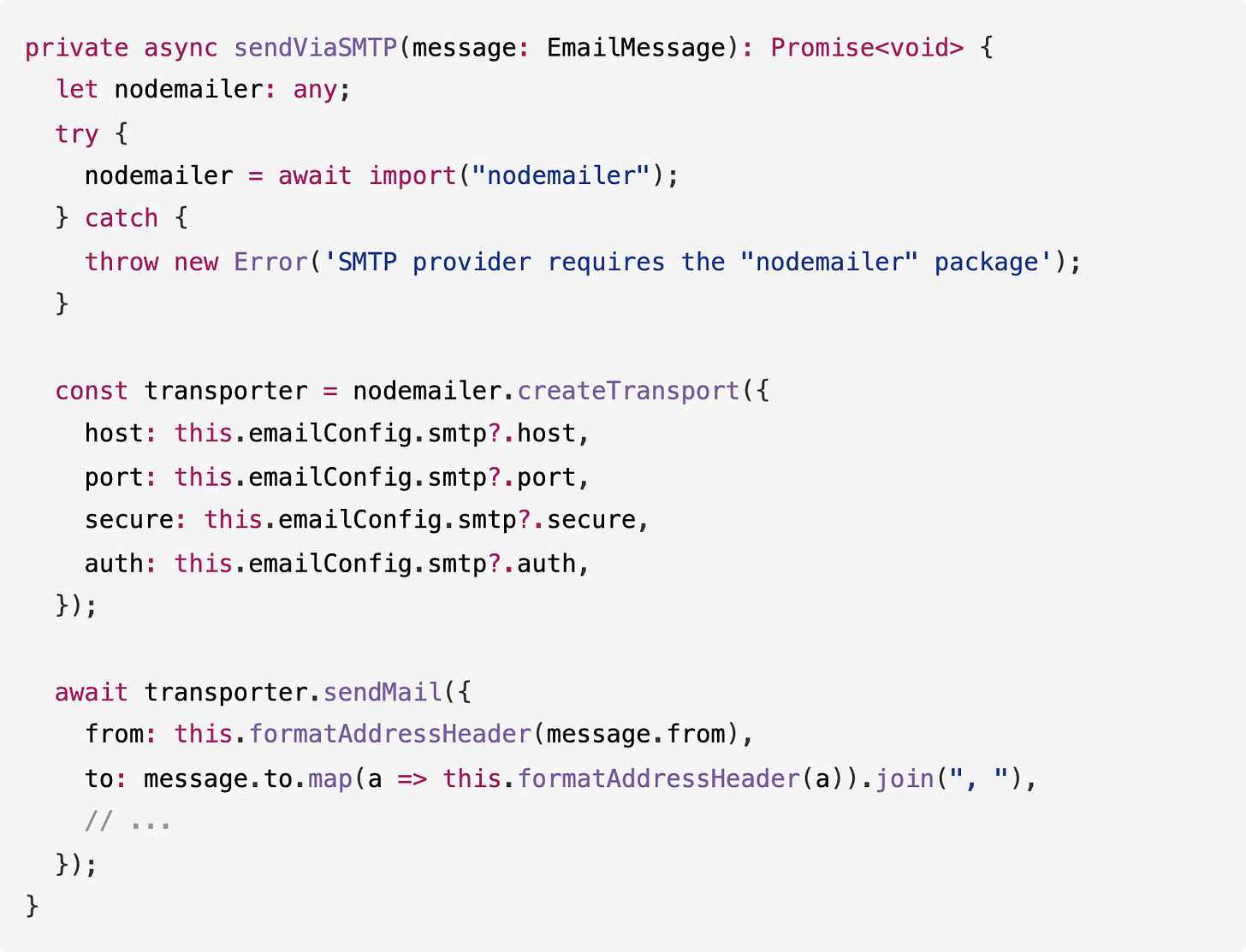

GPT-5.2 delivered a comprehensive email handler, spanning 1,175 lines, with full provider support.

It used dynamic imports for nodemailer and @aws-sdk/client-ses, so the code runs without these dependencies installed until a provider that needs them is actually used. It also added normalizeEmailBodies() to generate a text body from HTML or an HTML body from text.
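As a rough idea of what a helper like normalizeEmailBodies() might do, here is a minimal TypeScript sketch. Only the function name comes from the test output; the signature, the EmailBodies type, the helper functions, and the deliberately naive conversion rules are all assumptions.

```typescript
// Illustrative sketch only; the signature and the conversion rules are assumptions.
interface EmailBodies {
  text?: string;
  html?: string;
}

function escapeHtml(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;");
}

// Naive tag stripping; it does not decode entities, and real conversion needs a parser.
function htmlToText(html: string): string {
  return html
    .replace(/<br\s*\/?>/gi, "\n")
    .replace(/<\/p>/gi, "\n")
    .replace(/<[^>]+>/g, "")
    .trim();
}

// Returns both bodies, deriving whichever one the caller did not supply.
function normalizeEmailBodies(bodies: EmailBodies): Required<EmailBodies> {
  if (bodies.text === undefined && bodies.html === undefined) {
    throw new Error("Either text or html must be provided");
  }
  const text = bodies.text ?? htmlToText(bodies.html as string);
  const html =
    bodies.html ?? `<p>${escapeHtml(bodies.text as string).replace(/\n/g, "<br>")}</p>`;
  return { text, html };
}
```

Sending both parts lets mail clients that cannot render HTML fall back to the plain-text body, which is why deriving the missing one is a common convenience in email handlers.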

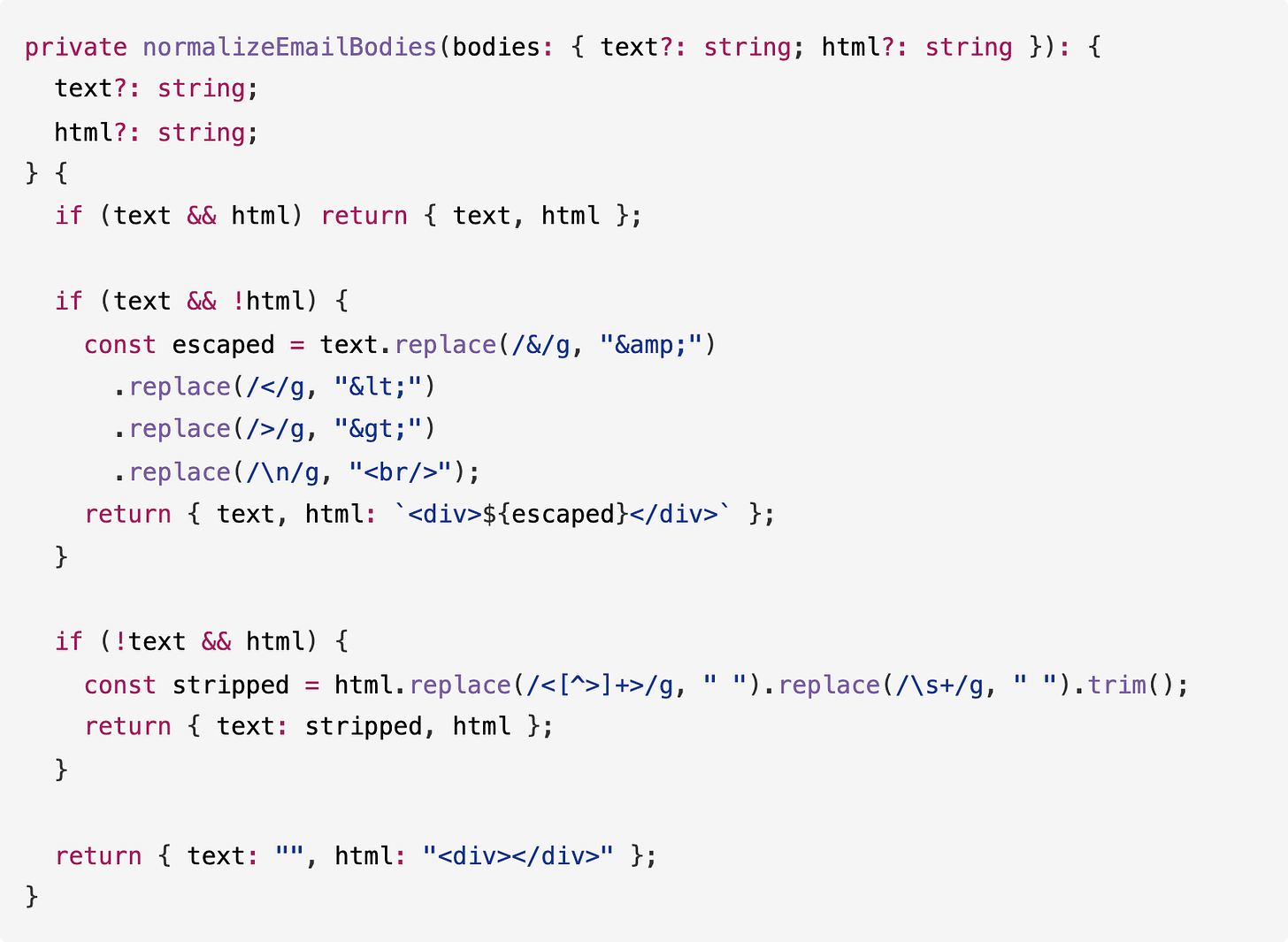

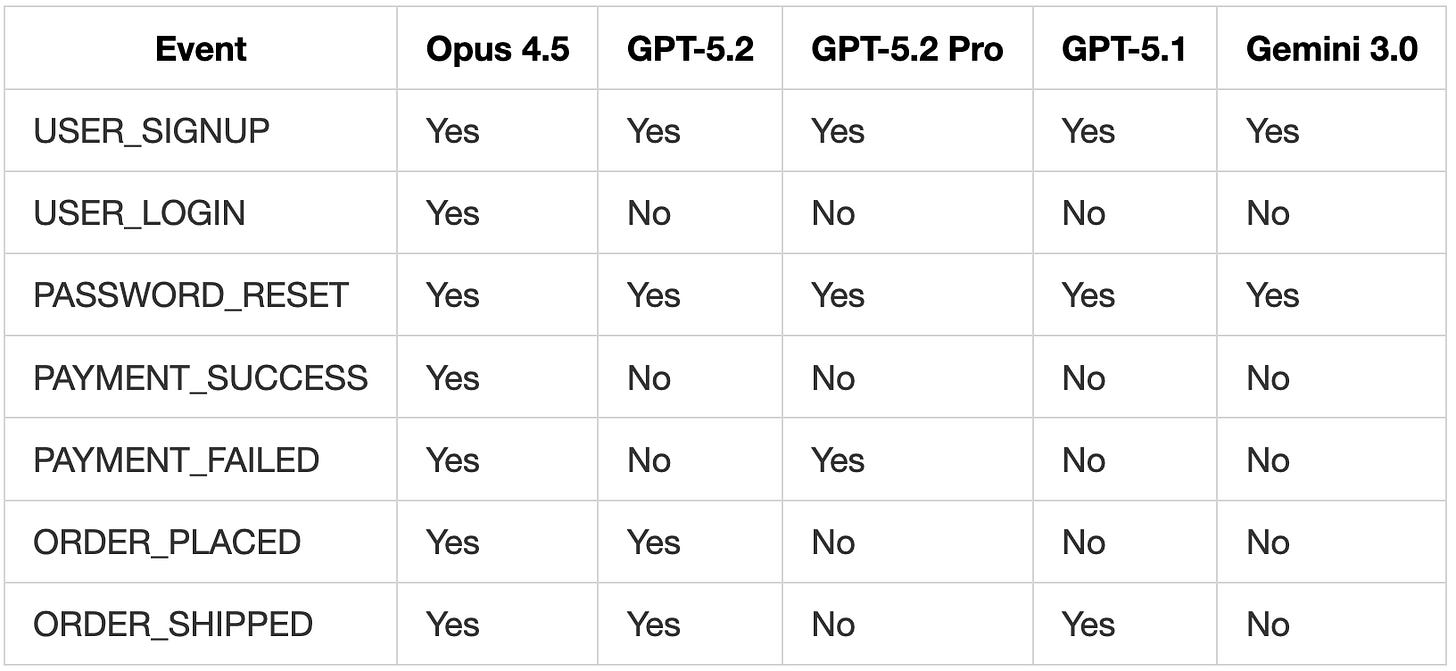

Template Coverage

Claude Opus 4.5 remains the only model to provide templates for all seven notification events. GPT-5.2 covered four events, while GPT-5.2 Pro covered three.

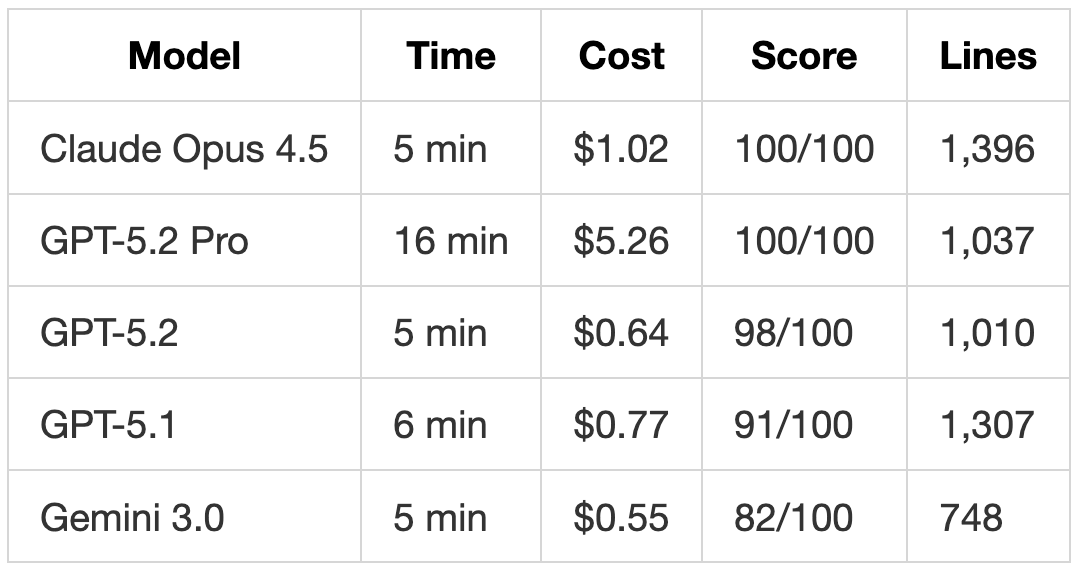

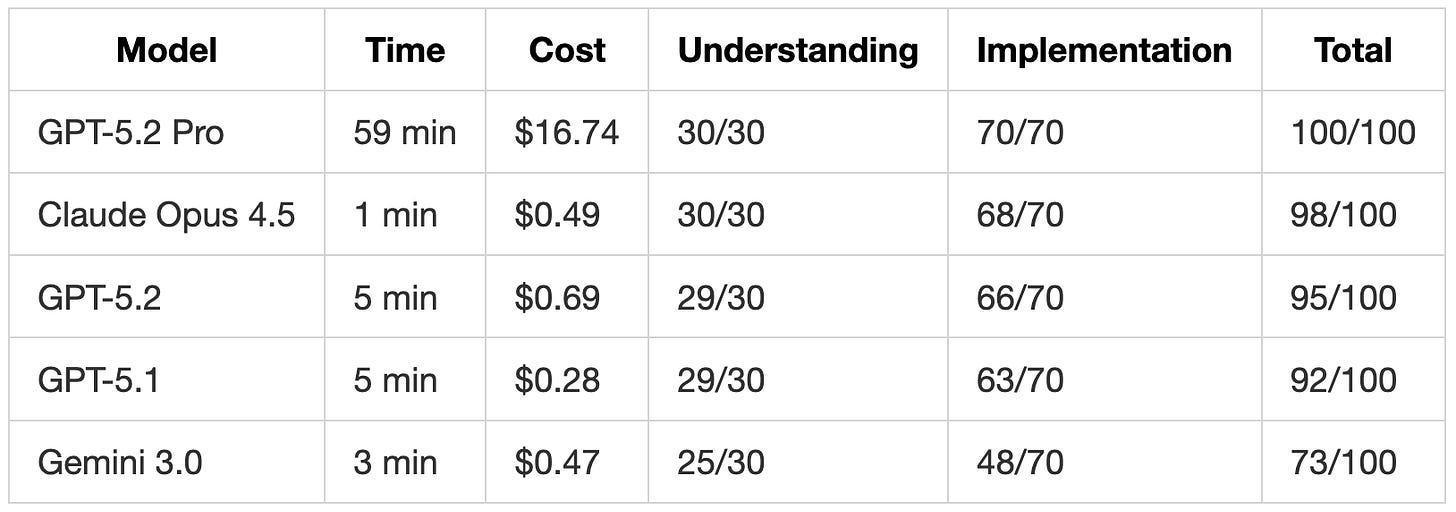

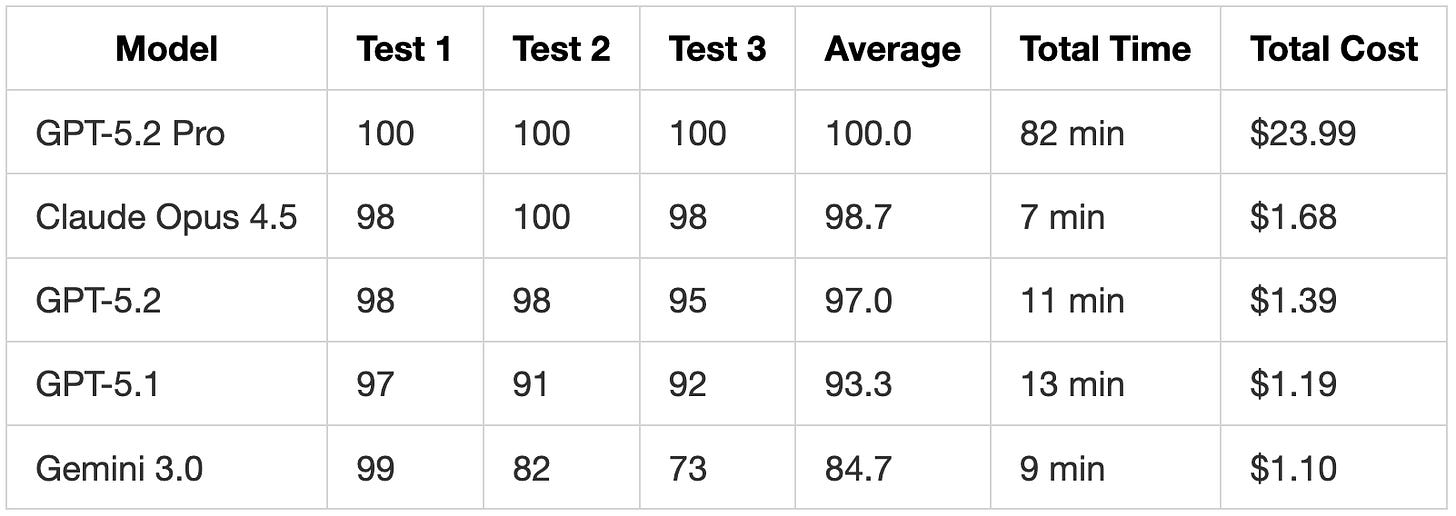

Performance Summary

Test Scores

GPT-5.2 Pro achieved perfect scores but at a considerable total of 82 minutes and $23.99. In comparison, Claude Opus 4.5 scored a 98.7% average in 7 minutes for $1.68, roughly one-twelfth of the time and one-fourteenth of the cost.

GPT-5.2 vs. GPT-5.1

GPT-5.2 is demonstrably faster, achieves higher scores, and generates cleaner code than GPT-5.1. The 17% cost increase ($0.20 total) is justified by significant improvements in requirement coverage and overall code quality.

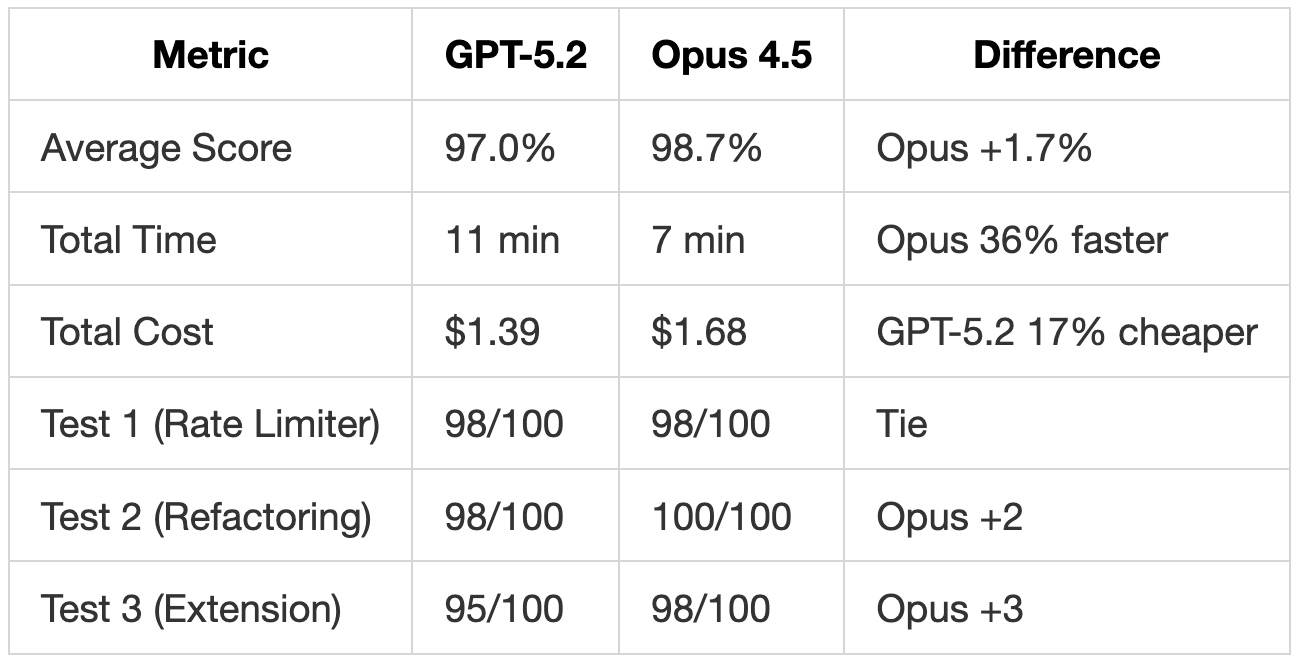

GPT-5.2 vs. Claude Opus 4.5

Claude Opus 4.5 consistently achieved slightly higher scores across all tests while completing tasks more quickly. The performance difference was most pronounced in Test 2, where Opus 4.5 was the only model (apart from GPT-5.2 Pro) to implement all 10 requirements, including rate limiting with full headers. In Test 3, Opus 4.5 provided templates for all seven notification events, whereas GPT-5.2 covered only four.

Although GPT-5.2 is 17% cheaper per run, Opus 4.5 is more likely to deliver complete implementations on the first attempt. Factoring in potential retries to address missing features, the cost difference may narrow.

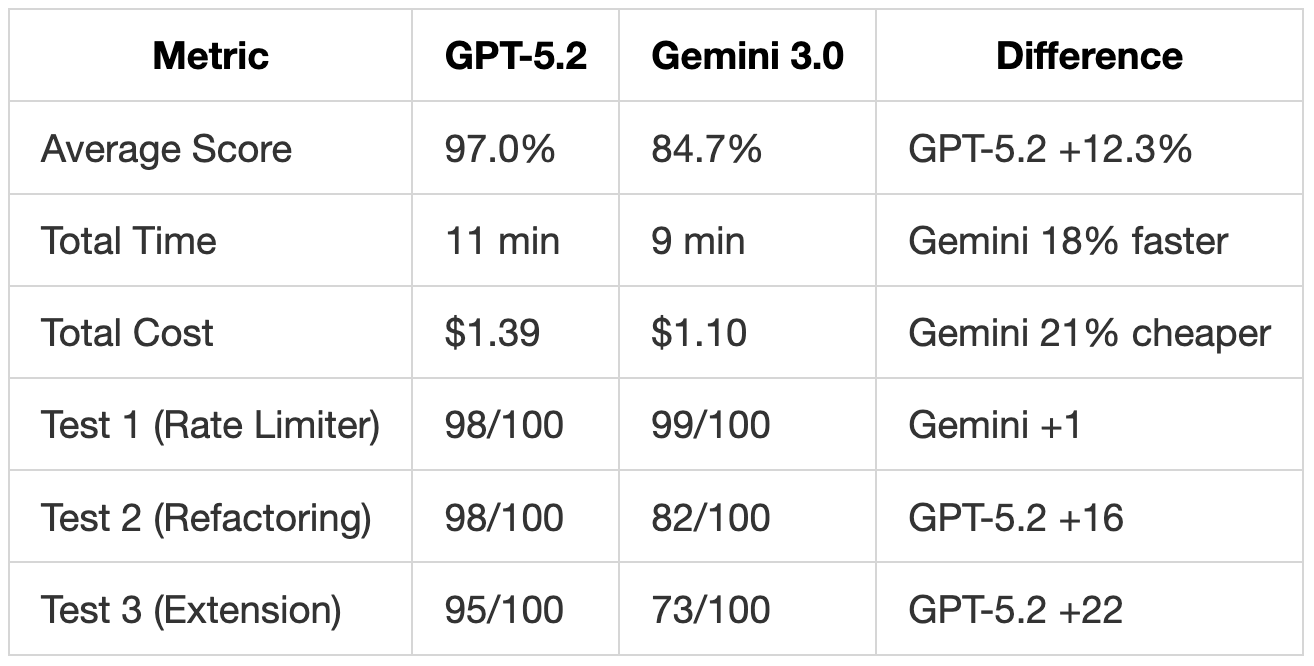

GPT-5.2 vs. Gemini 3.0

Gemini 3.0 excelled in Test 1 by adhering most literally to the prompt, producing the shortest code (90 lines) with exact specification compliance. However, on more complex tasks (Tests 2 and 3), GPT-5.2 significantly outperformed it. Gemini entirely missed rate limiting in Test 2, omitted database transactions, and generated a minimal email handler in Test 3 lacking CC/BCC support or proper recipient handling.

While Gemini 3.0 offers faster and cheaper performance, the 12% score gap reflects genuine missing functionality. For straightforward, precisely defined tasks, Gemini delivers clean results. However, for refactoring or extending existing systems, GPT-5.2 proves more thorough.

When to Use Each Model

- GPT-5.2: This model is suitable for most general coding tasks. It follows requirements more completely than GPT-5.1, generates cleaner code without superfluous validation, and implements features like rate limiting that GPT-5.1 overlooked. The 40% price increase over GPT-5.1 is justified by the enhanced output quality.

- GPT-5.2 Pro: This model is particularly useful when deep reasoning is required and time constraints are flexible. In Test 3, it spent 59 minutes identifying and rectifying architectural issues that no other model addressed. This makes it ideal for:

  - Designing critical system architecture.

  - Auditing security-sensitive code.

  - Tasks where correctness is paramount over speed.

  For routine daily coding tasks (such as quick implementations, refactoring, or feature additions), GPT-5.2 or Claude Opus 4.5 are generally more practical choices.

- Claude Opus 4.5: This model remains the fastest to achieve high scores, completing all three tests in a total of 7 minutes with a 98.7% average score. If rapid, thorough implementations are critical, Opus 4.5 continues to be the benchmark.

Practical Tips

Reviewing GPT-5.2 Code

When reviewing code generated by GPT-5.2:

- Fewer Surprises: Expect less unrequested validation and defensive code, resulting in output closer to the original request.

- Check Completeness: GPT-5.2 implements more requirements than GPT-5.1, but may still miss some features that Claude Opus 4.5 catches.

- Concise Output: GPT-5.2 produces shorter code for equivalent functionality compared to GPT-5.1, facilitating quicker reviews.

Reviewing GPT-5.2 Pro Code

When reviewing code generated by GPT-5.2 Pro:

- Unsolicited Fixes: Be prepared for the model to refactor or resolve issues in the original code that were not explicitly requested.

- Stricter Validation: It tends to demand explicit configuration (e.g., environment variables) rather than relying on fallback defaults.

- Time Budget: Allocate 10-60 minutes per task, and plan accordingly.

Prompting Strategy

- With GPT-5.2: Prompt similarly to GPT-5.1. If specific behavior is needed, state it explicitly, as GPT-5.2 will not autonomously add as many defensive features.

- With GPT-5.2 Pro: Utilize this model for foundational architecture decisions or when auditing security-critical code. Its extended reasoning time is a worthwhile investment for tasks where initial correctness outweighs speed.