Context Engineering for AI Agents: Building Robust LLM Systems

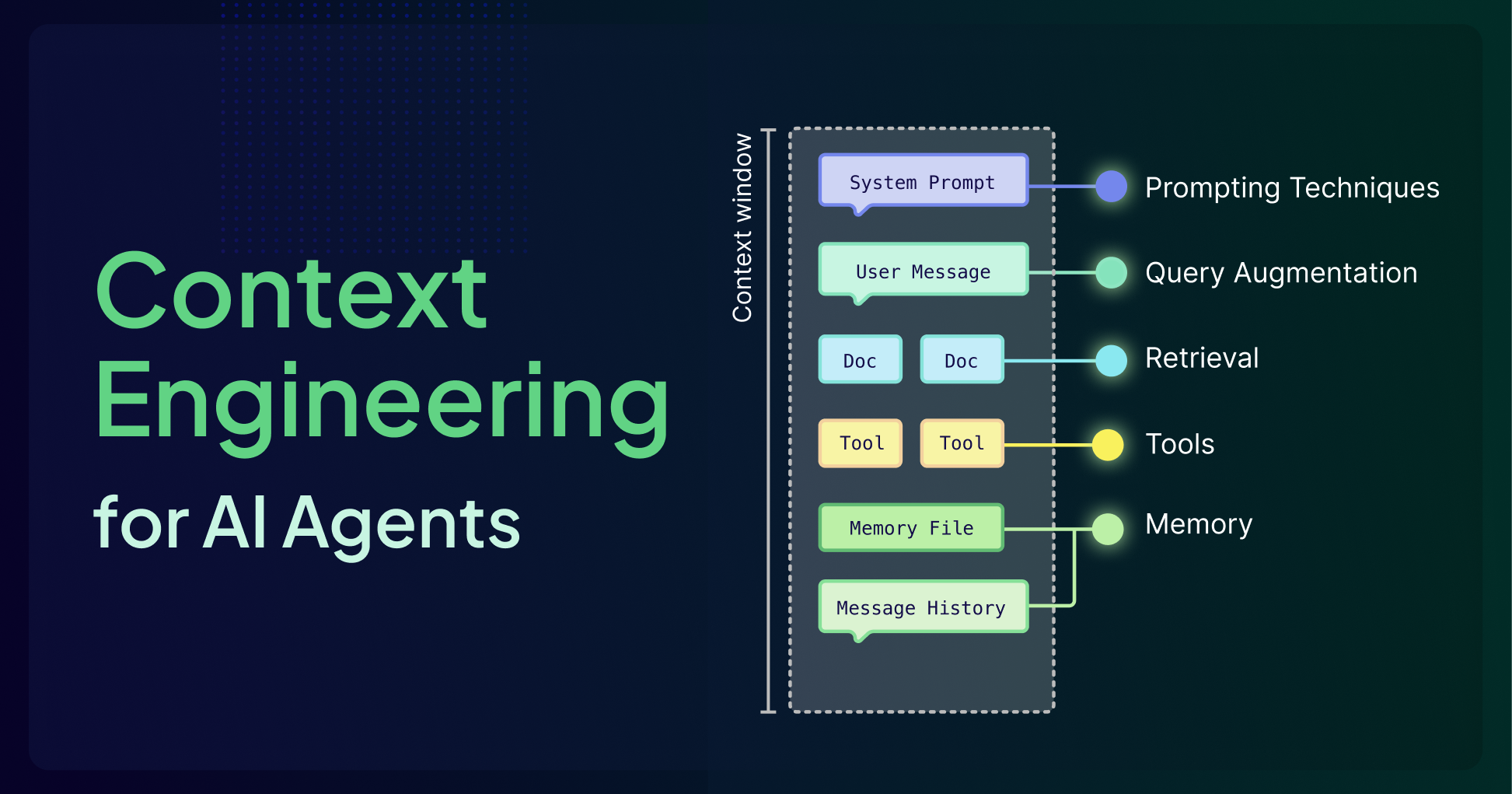

Context engineering is crucial for reliable AI agents. This guide details its six pillars: agents, query augmentation, retrieval, prompting, memory, and tools, optimizing LLM context for robust applications.

Many Large Language Model (LLM) demonstrations initially appear magical, capable of drafting emails, rewriting code, or even booking vacations. For a brief period, these models seem to possess a profound understanding of any input. However, this illusion quickly breaks down when faced with complex, real-world tasks. When success hinges on accessing a specific incident report from yesterday, navigating internal team documentation, or recalling details from an extensive troubleshooting Slack thread, the model often falters. It struggles to retain information from prior interactions, lacks access to private data, and resorts to speculative answers instead of logical reasoning.

The distinction between a captivating demonstration and a reliable production system isn't merely about employing a "smarter" model. It fundamentally lies in how information is carefully selected, structured, and delivered to the model throughout each stage of a task. In essence, the crucial factor is context.

All LLMs operate within finite context windows, which necessitate difficult trade-offs regarding the amount of information the model can simultaneously process. Context engineering is the discipline of treating this window as a scarce resource, meticulously designing all surrounding components—such as retrieval, memory systems, tool integrations, and prompts—to ensure the model allocates its limited attention budget exclusively to high-signal tokens.

What Is a Context Window?

The context window serves as the model's active workspace, where it holds instructions and information pertinent to a current task. Every word, number, and piece of punctuation consumes space within this window. Imagine it as a whiteboard: once it's full, older information must be erased to accommodate new inputs, leading to the loss of important past details.

More technically, a context window refers to the maximum amount of input data an LLM can consider at one time when generating responses, measured in tokens. This window encompasses all user inputs, model outputs, tool calls, and retrieved documents, effectively acting as the model's short-term memory. Every token placed in the context window directly influences what the model "sees" and how it responds.

Context Engineering vs. Prompt Engineering

Prompt engineering focuses on how you phrase and structure instructions for the LLM to achieve optimal results, such as crafting clear or clever prompts, providing examples, or guiding the model to "think step-by-step." While vital, prompt engineering alone cannot resolve the fundamental limitation of a disconnected model.

Context engineering, conversely, is the discipline of designing the underlying architecture that feeds an LLM the correct information precisely when needed. It involves building the connections that link a disconnected model to the external world, facilitating the retrieval of external data, the use of tools, and providing it with memory to ground its responses in facts rather than solely on its training data.

Simply put, prompt engineering is about how you ask the question, while context engineering ensures the model has access to the right textbook, calculator, and even notes/memories from previous conversations before it begins reasoning. The quality and effectiveness of LLMs are heavily influenced by the prompts they receive, but the phrasing of a prompt can only go so far without a well-engineered context. Prompting techniques like Chain-of-Thought, Few-shot learning, and ReAct are most effective when combined with retrieved documents, user history, or domain-specific data.

The Context Window Challenge

The LLM context window can only hold a finite amount of information at once. This fundamental constraint defines the current capabilities of agents and agentic systems. Every time an agent processes information, it must decide what remains active, what can be summarized/compressed/deleted, what should be stored externally, and how much space to reserve for reasoning. It's tempting to assume that merely increasing context window size solves this problem, but this is generally not the case.

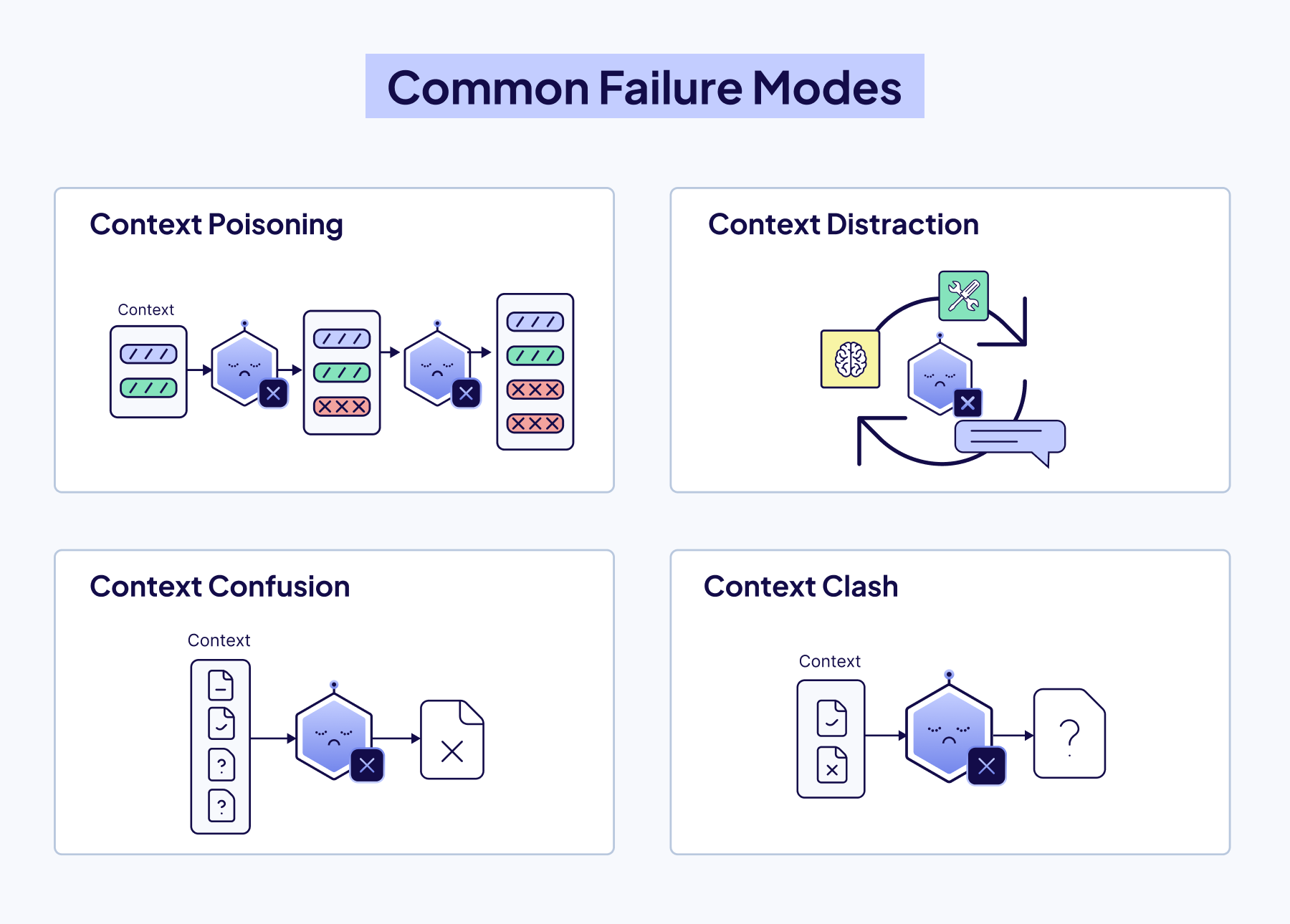

Here are the critical failure modes that emerge as context grows:

- Context Poisoning: Incorrect or hallucinated information enters the context. As agents reuse and build upon this context, these errors continue and compound.

- Context Distraction: The agent becomes burdened by an excessive amount of past information, including history, tool outputs, and summaries, leading to over-reliance on repeating past behavior rather than fresh reasoning.

- Context Confusion: Irrelevant tools or documents overcrowd the context, distracting the model and causing it to use the wrong tools or instructions.

- Context Clash: Contradictory information within the context misleads the agent, leaving it stuck between conflicting assumptions.

These are not just technical limitations; they are core design challenges for any modern AI application. You cannot fix this fundamental limitation simply by writing better prompts or increasing the maximum size of the context window. You must build a comprehensive system around the model. That is what Context Engineering is all about!

The Six Pillars of Context Engineering

Context engineering is a system built from six interdependent components that control what information reaches the model and when:

- Agents orchestrate decisions.

- Query Augmentation refines user input.

- Retrieval connects to external knowledge.

- Prompting guides reasoning.

- Memory preserves history.

- Tools enable real-world action.

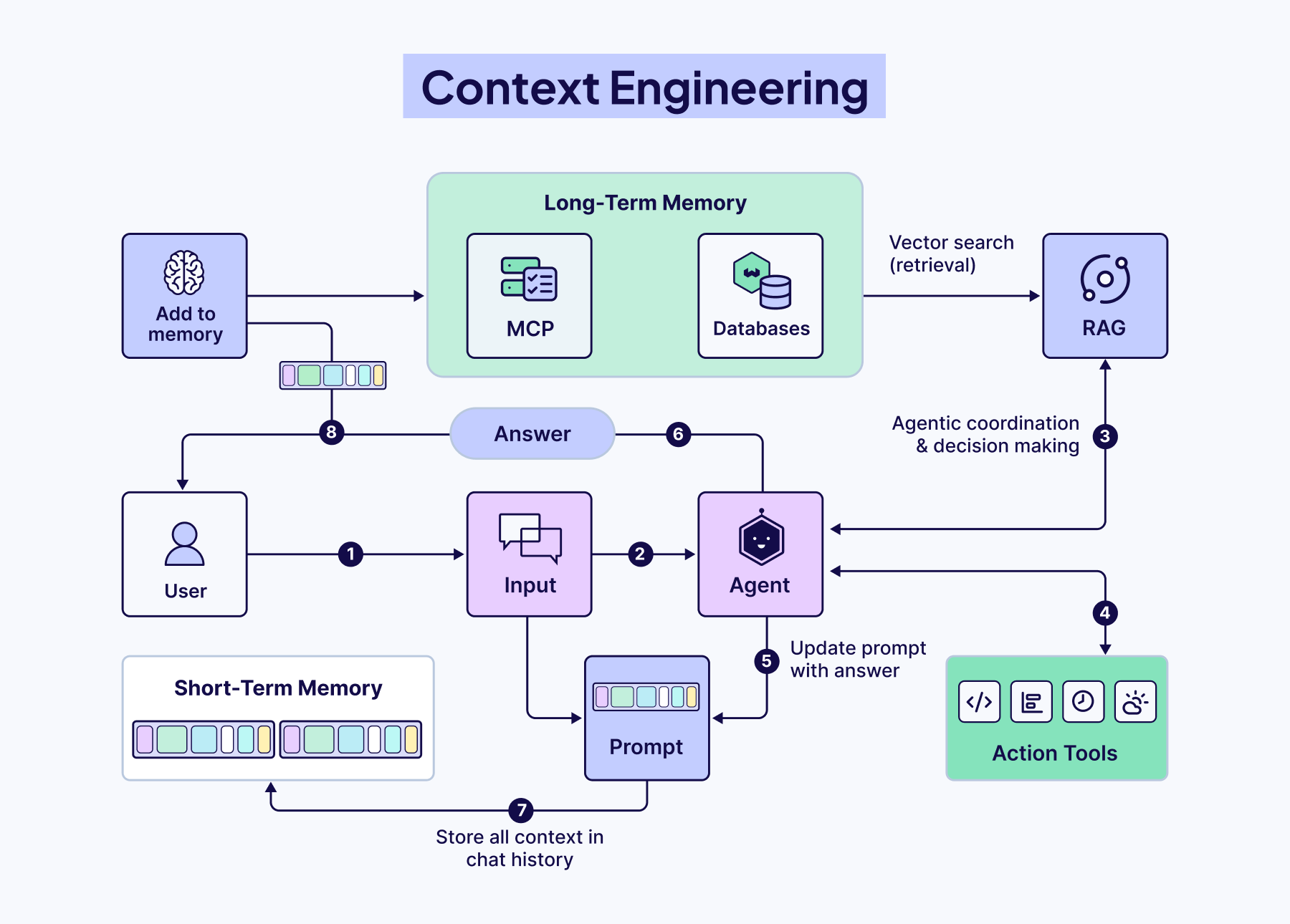

Agents

Agents are rapidly becoming the foundation for building AI applications. They act as both the architects of their context and the users of those contexts, dynamically defining knowledge bases, tool usage, and information flow within an entire system.

What Defines an Agent

An AI agent is a system that utilizes a large language model (LLM) as its "brain" for decision-making and solving complex tasks. The user provides a goal, and the agent determines the necessary steps to achieve it, leveraging available tools in its environment.

These four components typically comprise an agent:

- LLM (Large Language Model): Responsible for reasoning, planning, and orchestrating the overall task.

- Tools: External functionalities the agent can invoke, such as search engines, databases, or APIs.

- Memory: Stores context, prior interactions, or data collected during task execution.

- Observation & Reasoning: The ability to break down tasks, plan steps, decide when and which tools to use, and how to handle failures.

Where Agents Fit in Context Engineering

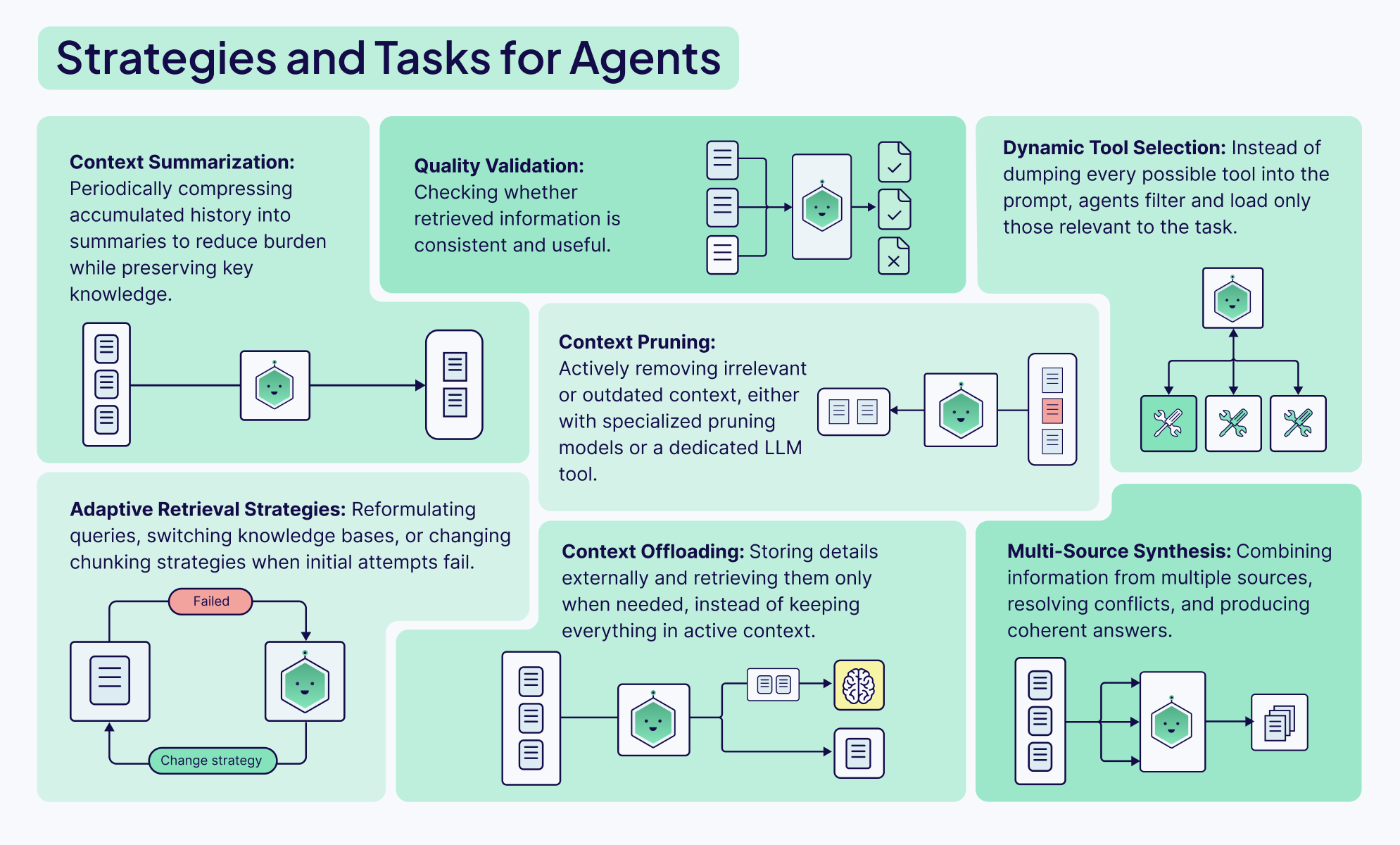

Agents are central to a context-engineering system. They are both the users of context, drawing from retrieved data, history, and external knowledge, and the architects of it, deciding what information to surface, retain, or discard.

In a single-agent system, one agent manages the full pipeline, deciding when to search, summarize, or generate. In multi-agent systems, multiple agents each undertake specialized roles that contribute to a larger goal. In both scenarios, how context is constructed and shared dictates the system's performance.

Agents can perform many different tasks and functions, from defining various retrieval or querying strategies to validating the quality or completeness of a response, or dynamically selecting tools from available options. Agents provide the orchestration between all parts of the system to make dynamic, context-appropriate decisions about information management.

Query Augmentation

Query augmentation is the process of refining a user's initial input for downstream tasks, such as querying a database or presenting it to an agent. This is significantly more challenging than it sounds but critically important. No amount of sophisticated algorithms, reranking models, or clever prompting can truly compensate for misunderstood user intent.

There are two main considerations:

- Users typically do not interact with chatbots or input boxes ideally. Most demos assume users input complete requests in perfectly formatted sentences, but in production applications, actual usage tends to be messy, unclear, and incomplete.

- Different parts of your AI system need to understand and utilize the user’s query in distinct ways. The format best suited for an LLM is likely not the same one you would use to query a vector database. Therefore, we need a method to augment the query to suit different tools and steps within our workflow.

The Weaviate Query Agent is an excellent example of how query augmentation can be seamlessly integrated into any AI application. It functions by taking a user's natural language prompt and determining the optimal way to structure the query for the database, based on its knowledge of the cluster's data structure and Weaviate itself.

Retrieval

A common adage when building with AI is: garbage in, garbage out. Your Retrieval Augmented Generation (RAG) system is only as effective as the information it retrieves. A well-written prompt and a robust model are useless if they are working with irrelevant context. This is why the first and most important step is optimizing your retrieval.

The most crucial decision here is your chunking strategy. It's a classic engineering trade-off:

- Small chunks are excellent for precision. Their embeddings are focused, making it easy to find an exact match. The downside? They often lack the surrounding context for the LLM to generate a meaningful answer.

- Large chunks are rich in context, which is beneficial for the LLM's final output. However, their embeddings can become "noisy" and averaged out, making it much harder to pinpoint the most relevant information. Additionally, they occupy more space in the LLM's context window, potentially displacing other relevant information.

Finding the sweet spot between precision and context is key to high-performance RAG. Get it wrong, and your system will fail to find the right facts, forcing the model to resort to hallucination—the very thing you're trying to prevent.

To assist you in finding the best chunking strategy for your use case, we've created a cheat sheet mapping out the landscape of common chunking strategies, from simple to advanced:

This cheat sheet is a great starting point. However, when moving from a basic Proof of Concept (PoC) to a production-ready system, the questions typically become more complex. Should you pre-chunk everything upfront, or do you require a more dynamic post-chunking architecture? How do you determine your optimal chunk size?

Prompting Techniques

So, once you've perfected your retrieval and your system pulls the most relevant chunks of information in milliseconds, is your job done?

Not quite. You cannot simply stuff them into the context window and hope for the best. You need to instruct the model how to utilize this newfound information. This technique is called prompt engineering.

In a retrieval system, your prompt acts as the control layer. It's the set of instructions that tells the LLM how to behave. Your prompt needs to clearly define the task. Are you asking the model to:

- Synthesize an answer from multiple, sometimes conflicting, sources?

- Extract specific entities and format them as a JSON object?

- Answer a question based only on the provided context to prevent hallucinations?

Without clear instructions, the model will ignore your beautifully retrieved context and hallucinate an answer. Your prompt is the final safeguard that makes the model respect the facts you've given it.

There's a whole toolkit of prompting techniques to choose from. But to transition from theory to production, you need to understand not just what these frameworks are, but how and when to implement them. In our Context Engineering eBook, you’ll learn how to implement simple techniques like Chain-of-Thought (CoT) and more advanced ones like the ReAct framework to build systems that can tackle complex, multi-step tasks with reliability.

Memory

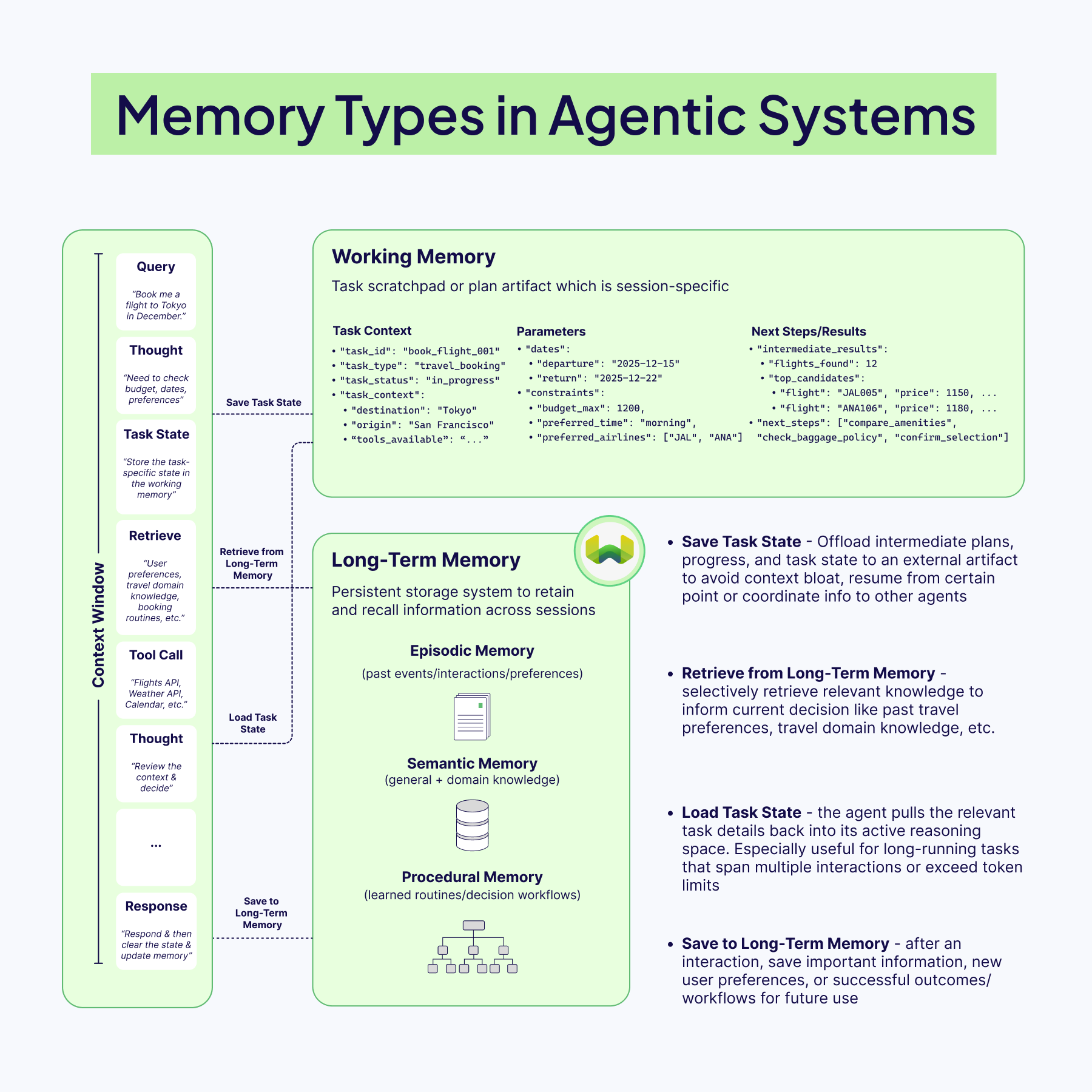

A stateless LLM can answer a single question well, but it has no recollection of what happened five minutes ago or why that might matter for the next decision. Memory transforms the model into something that feels more dynamic and, dare we say, more 'human,' capable of retaining context, learning from the past, and adapting on the fly. For context engineering, the core challenge is not "how much can we store?" but "what deserves a spot in front of the model right now, and what can safely reside elsewhere?"

The Architecture of Agent Memory

Memory in an AI agent is essential for retaining information to navigate changing tasks, remember what worked (or didn't), and plan ahead. To build robust agents, we need to think in layers, often blending different types of memory for optimal results.

Short-term memory is the live context window: recent turns/reasoning, tool outputs, and retrieved documents that the model needs for current step reasoning. This space is brutally finite, so it should remain lean, containing just enough conversation history to maintain coherence and ground decisions.

Long-term memory, in contrast, resides outside the model, typically in vector databases for quick retrieval (RAG). These stores retain information permanently and can hold:

- Episodic Data: past events, user interactions, preferences

- Semantic Data: general and domain knowledge

- Procedural Data: routines, workflows, decision steps

Because it's external, this memory can grow, update, and persist beyond the model’s context window.

Most modern systems typically implement a hybrid memory setup, blending short-term memory with long-term for depth. Some architectures also incorporate a working memory: a temporary space for information needed during a multi-step task. For example, while booking a trip, an agent might keep the destination, dates, and budget in working memory until the task is complete, without storing it permanently.

Designing Memory That Doesn’t Pollute Context

The worst memory system is one that faithfully stores everything. Old, low-quality, or noisy entries eventually resurface through retrieval and begin to contaminate the context with stale assumptions or irrelevant details. Effective agents are selective: they filter which interactions are promoted into long-term storage, often by allowing the model to "reflect" on an event and assign an importance or usefulness score before saving it.

Once in storage, memories require maintenance. Periodic pruning, merging duplicates, deleting outdated facts, and replacing long transcripts with compact summaries keep retrieval sharp and prevent the context window from being filled with historical clutter. Criteria like recency and retrieval frequency are simple but powerful signals for what to keep and what to retire.

Our eBook’s memory section delves deeper into more of these memory management strategies, including mastering the art of retrieval, being selective about what you store, and pruning and refining memories. It also highlights the key principle: always tailor the memory architecture to the task, as there’s no one-size-fits-all solution (at least not yet).

Tools

If memory allows an agent to remember its past, tool use grants it the ability to act in the present. Without tools, even the most sophisticated LLM is confined within a text bubble; it can reason, draft, and summarize brilliantly, but it cannot check live stock prices, send an email, query a database, or book a flight. Tools serve as the bridge between thought and action, the medium that enables an agent to step outside its training data and interact with the real world.

Context engineering for tools isn't just about providing an agent with a list of APIs and instructions. It's about creating a cohesive workflow where the agent can understand available tools, correctly decide which one to use for a specific task, and interpret the results to move forward.

The Orchestration Challenge

Handing an agent a list of available tools is straightforward. Getting it to use those tools correctly, safely, and efficiently is where context engineering begins. This orchestration involves several moving parts, all occurring within the limited context window:

- Tool Discovery: The agent must be aware of all tools it can access. This is achieved by providing a clear tool list and high-quality descriptions in the system prompt. These descriptions guide the agent in understanding how each tool works, when to use it, and when to avoid it.

- Tool Selection and Planning: When a user makes a request, the agent must decide if a tool is needed and, if so, which one. For complex tasks, it may plan a sequence of tools (e.g., “Search the weather, then email the summary”).

- Argument Formulation: After selecting a tool, the agent must determine the correct arguments to pass. For example, if the tool is

get_weather(city, date), it must infer details like “San Francisco” and “2025-11-25” from the user’s query and format them properly for the call. - Reflection: After a tool executes, its output is returned to the agent. The agent reviews it to decide the next step: whether the tool worked correctly, if more iterations are needed, or if an error means it should try a different approach entirely.

As you can see, orchestration occurs through this powerful feedback loop, often called the Thought-Action-Observation cycle. The agent constantly observes the outcome of its actions and uses that new information to fuel its next "thought." This Thought-Action-Observation cycle forms the fundamental reasoning loop in modern agentic frameworks like our own Elysia.

The Shift Toward Standardization with MCP

The evolution of tool use is increasingly moving towards standardization. While function/tool calling works well, it creates a fragmented ecosystem where each AI application requires custom integrations with every external system. The Model Context Protocol (MCP), introduced by Anthropic in late 2024, addresses this by providing a universal standard for connecting AI applications to external data sources and tools. They refer to it as "USB-C for AI"—a single protocol that any MCP-compatible AI application can use to connect to any MCP server.

Instead of building custom integrations for each tool, developers can create individual MCP servers that expose their systems through this standardized interface. Any AI application that supports MCP can then easily connect to these servers using the JSON-RPC based protocol for client-server communication. This transforms the MxN integration problem (where M applications each need custom code for N tools) into a much simpler M + N problem.

As tool use integration standardizes, the real work becomes designing systems that think together, not merely wiring them together.

Example: Building a Real-World Agent with Elysia

Everything covered in this article—from agents orchestrating decisions, query augmentation shaping retrieval, memory preserving state, to tools enabling action—comes together when building real systems.

Elysia is an open-source agentic RAG framework that embodies these context engineering principles within a decision-tree architecture.

Unlike simple retrieve-then-generate pipelines, Elysia's decision agent evaluates the environment, available tools, past actions, and future options before choosing the next course of action. Each node in the tree maintains global context awareness. When a query fails or returns irrelevant results, the agent can recognize this and adjust its strategy rather than blindly proceeding.

In this example, we'll build an agent that searches live news, fetches article content, and queries your existing Weaviate collections, all through intelligent decision-making and orchestration of the Elysia framework. You can get started with Elysia by installing it as a Python package:

pip install elysia-ai

Built-in Tools for Context-Aware Retrieval

Elysia includes five powerful built-in tools:

queryaggregatetext_responsecited_summarizevisualize

The query tool retrieves specific data entries using different search strategies, such as hybrid search or a simple fetch objects, with automatic collection selection and filter generation via an LLM agent.

The aggregate tool performs calculations like counting, averaging, and summing, with grouping and filtering capabilities, also powered by an LLM agent.

text_response and cited_summarize respond directly to the user, either with regular text or with text that includes citations from the retrieved context in the environment, respectively.

The visualize tool helps visualize data present in the environment using dynamic displays like product cards, GitHub issues, graphs, etc.

Before interacting with your collections, you'll need to run Elysia's preprocess() function on your collections so Elysia can analyze schema patterns and infer relationships.

from elysia import configure, preprocess

# Connect to Weaviate

configure(

wcd_url="...", # replace with your Weaviate REST endpoint URL

wcd_api_key="...", # replace with your Weaviate cluster API key,

base_model="gemini-2.5-flash",

base_provider="gemini",

complex_model="gemini-3-pro-preview",

complex_provider="gemini",

gemini_api_key="..." # replace with your GEMINI_API_KEY

)

# Preprocess your collections (one-time setup)

preprocess(["NewsArchive", "ResearchPapers"])

This example assumes you already have NewsArchive and ResearchPapers collections set up in your Weaviate cluster with some data ingested. If you're starting fresh, check out the Weaviate documentation on creating collections and importing objects to get your data ready for Elysia.

Custom Tools to Extend Agent's Capabilities

Elysia can also utilize completely custom tools alongside its built-in ones. This capability extends the agent's reach beyond your local collections to live data, external APIs, or any other data source.

Here's how you can build a simple agent that searches live news with Serper API, fetches article content, and queries your existing multiple collections easily with Elysia:

from elysia import Tree, tool, Error, Result

tree = Tree()

@tool(tree=tree)

async def search_live_news(topic: str):

"""Search for live news headlines using Google via Serper."""

import httpx

async with httpx.AsyncClient() as client:

response = await client.post(

"https://google.serper.dev/news",

headers={

"X-API-KEY": SERPER_API_KEY, # Replace with an actual key: https://serper.dev/api-keys

"Content-Type": "application/json"

},

json={

"q": topic,

"num": 5

}

)

results = response.json().get("news", [])

yield Result(

objects=[

{

"title": item["title"],

"url": item["link"],

"snippet": item.get("snippet", "")

}

for item in results

]

)

@tool(tree=tree)

async def fetch_article_content(url: str):

"""Extract full article text in markdown format."""

from trafilatura import fetch_url, extract

downloaded = fetch_url(url)

text = extract(downloaded)

if text is None:

yield Error(f"Cannot parse URL: {url}. Please try a different one")

yield Result(

objects=[

{

"url": url,

"content": text

}

]

)

response, objects = tree(

"Search for AI regulation news, fetch the top article, and find related pieces in my archive and research papers",

collection_names=["NewsArchive", "ResearchPapers"]

)

print(response)

When you run Elysia with this setup, the decision tree intelligently chains these actions:

- The

search_live_newstool finds breaking stories and adds them to the environment. - The

fetch_article_contenttool retrieves full text from the promising URL. - Elysia's built-in

querytool searches across both collections by routing toNewsArchivefor past articles andResearchPapersfor academic sources.

When you follow up with "What academic papers by XYZ author discuss this topic?", the agent recognizes the intent and prioritizes the ResearchPapers collection without you explicitly specifying it.

This demonstrates context engineering in practice: the decision agent orchestrates information flow, augments queries on the fly, retrieves from multiple sources, maintains state across turns, and uses tools to act on the world—all while maximizing the limited context window with only the relevant tokens to drive reasoning.

Conclusion

So, where does this leave us?

It all boils down to bridging the gap between a flashy demo and a dependable production system. This bridge isn't a single technique; it's a discipline built upon the six interdependent pillars of context engineering.

A powerful Agent is ineffective without clean data from Retrieval. Great Retrieval is wasted if a poor Prompt misguides the model. And even the best Prompt cannot function without the historical context provided by Memory or real-world access granted by Tools.

Context engineering signifies a shift in our role: from prompters who talk at a model to architects who build the world the model lives in.

As builders, we understand that larger models don't necessarily create the best AI systems; rather, superior engineering does.

Now, let's get back to building. We’re looking forward to seeing what you’re working on!