Early Backend Rewrite: Why We Switched from Python (Django) to Node.js (Express) After Just One Week

Discover why an early-stage startup controversially rewrote its entire Python (Django) backend to Node.js (Express) just one week post-launch. This article details the challenges with Python's async ecosystem, the decision-making process, and the significant gains in efficiency and codebase unification achieved through this bold refactoring.

Just one week after launching, our team made a significant and seemingly unconventional decision: a complete rewrite of our backend from Python to Node.js. This move was driven by a proactive approach to scalability, even at such an early stage.

Rewriting a core system so soon after launch may seem counterintuitive, especially given the startup adage of "doing things that don't scale" until you reach product-market fit. Our launch did not bring a flood of users that demanded an urgent scale-up, and a well-chosen tech stack should normally handle growth for a considerable period before a rewrite is even considered. However, we found ourselves in a unique position, and our situation called for a different path.

The Challenges with Python Async

I have long been an advocate for Django, having been introduced to it at PostHog, so it was our natural choice for Skald's initial backend. Django offers rapid development, robust tooling, and impressive flexibility.

Our application at Skald involves extensive interaction with Large Language Models (LLMs) and embedding APIs, leading to a high volume of network I/O that greatly benefits from asynchronous processing. Furthermore, generating vector embeddings for document chunks often requires firing numerous requests concurrently.

However, implementing this in Django quickly became problematic. Admittedly, our team had limited experience with Python's async features (my own async experience came mostly from Node.js), but that gap exposed a core issue: writing solid, performant async Python is remarkably complex and unintuitive, and it often requires a deep dive into the underlying architectural principles.

For an early-stage startup, dedicating precious time to master Python async intricacies wasn't feasible. The risk of introducing subtle bugs or performance bottlenecks due to its non-native implementation was high. We discovered that Python's asynchronous foundations, unlike the event loop in JavaScript or Go's goroutines, were retrofitted, leading to inherent difficulties. This perspective is well-articulated in posts like "Python has had async for 10 years -- why isn't it more popular?" and "Python concurrency: gevent had it right."

Our key observations regarding Python's async ecosystem included:

- Lack of native async file I/O.

- Incomplete async support in Django, particularly within the ORM, exacerbating the "colored functions problem." Django's own documentation on async usage highlights numerous caveats.

- The pervasive need for `sync_to_async` and `async_to_sync` wrappers throughout the codebase.

- Reliance on non-native solutions such as `aiofiles` (which uses thread pools) or Gevent (which patches the standard library) for async capabilities, each introducing its own complexities and implications for deployment environments like Gunicorn.

Ultimately, achieving a simple Promise.all-like concurrency pattern in Python, while fully understanding its nuances, proved to be far from straightforward.
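For reference, this is the kind of pattern we had in mind, sketched in TypeScript. It is a minimal illustration, not our production code: `embed` and `embedAll` are hypothetical names, and `embed` only simulates an embedding API call with a 50 ms timer instead of calling a real provider.

```typescript
// Hypothetical stand-in for a network call to an embedding API.
// A real client would send the chunk to a provider; here we simulate
// latency with a timer and return a fake 2-element vector:
// [chunk length, char code of the first character (0 if empty)].
async function embed(chunk: string): Promise<number[]> {
  await new Promise<void>((resolve) => setTimeout(resolve, 50));
  return [chunk.length, chunk.charCodeAt(0) || 0];
}

// Start one request per chunk, then wait for all of them.
// Promise.all preserves input order in its result array and rejects
// as soon as any single request rejects.
async function embedAll(chunks: string[]): Promise<number[][]> {
  return Promise.all(chunks.map((chunk) => embed(chunk)));
}
```

Because every `embed` call is started before any of them is awaited, the simulated requests overlap on the event loop, so three 50 ms calls finish in roughly 50 ms rather than 150 ms.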

Further investigation into the PostHog codebase, where I previously worked, revealed that even a large company with AI features still relies primarily on WSGI and Gunicorn gthread workers, which suggests a preference for horizontal scaling over complex async implementations in Django. Their codebase also includes custom `async_to_sync` utilities, reinforcing the idea that robust async in Django remains challenging.

The Decision to Migrate

We concluded that persisting with Django would soon lead to significant performance bottlenecks and maintenance overhead, even with a modest user base. The prospect of requiring multiple machines for acceptable latency and managing clunky, hard-to-maintain code was not appealing. While "doing things that don't scale" is valid advice, addressing this foundational issue early, when the codebase was small, felt like a strategic move.

We considered FastAPI, a highly regarded Python framework with native async support and a performant reputation, often paired with an async-compatible ORM like SQLAlchemy. A migration to FastAPI would have been quicker, leveraging existing Python code. However, our overall sentiment towards the Python async ecosystem had soured, and given that our background worker service was already in Node.js, we saw an opportunity to consolidate into a single, unified ecosystem.

Thus, we committed to migrating to Node.js, opting for the battle-tested Express framework combined with MikroORM. Despite Express being an older framework, its familiarity and the inherent advantages of JavaScript's event loop were compelling reasons for our choice.

Gains and Losses from the Migration

Gained: Efficiency

Initial benchmarks show roughly a 3x improvement in throughput out of the box, even though the code is still largely sequential within an async context. With Node.js, we now have clear plans to process tasks such as chunking, embedding, and reranking concurrently, which should bring further performance gains over time.
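Firing every request at once with `Promise.all` can run into provider rate limits when a document produces hundreds of chunks, so a cap on in-flight requests is often useful for this kind of concurrent processing. The sketch below shows one way to do that; `mapWithConcurrency` is a hypothetical helper written for this illustration (packages such as p-limit implement the same idea), not part of our codebase.

```typescript
// Hypothetical helper: apply `fn` to every item with at most `limit`
// calls in flight at once, returning results in input order.
async function mapWithConcurrency<T, R>(
  items: T[],
  limit: number,
  fn: (item: T, index: number) => Promise<R>,
): Promise<R[]> {
  if (!Number.isInteger(limit) || limit < 1) {
    throw new RangeError("limit must be a positive integer");
  }
  const results: R[] = new Array<R>(items.length);
  let next = 0; // index of the next item to claim

  // Each worker repeatedly claims the next unprocessed index. JavaScript
  // runs this code on a single thread, so `next++` cannot race.
  async function worker(): Promise<void> {
    while (next < items.length) {
      const i = next++;
      results[i] = await fn(items[i], i);
    }
  }

  // Start at most `limit` workers and wait until all of them drain the queue.
  const workers = Array.from({ length: Math.min(limit, items.length) }, () => worker());
  await Promise.all(workers);
  return results;
}
```

For example, `mapWithConcurrency(chunks, 8, embedChunk)` would process chunks with at most eight requests outstanding, where `embedChunk` stands in for whatever function calls your embedding provider and 8 is an arbitrary placeholder. Workers share a single index counter instead of pre-splitting the input, so one slow request does not leave the other slots idle.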

Lost: Django

Moving away from Django was not without its drawbacks. We've found ourselves having to build more middleware and utilities from scratch in Express. While frameworks like Adonis offer a more comprehensive Node.js experience, adopting a new, full-featured ecosystem felt like a larger undertaking than starting with a minimal setup. We particularly miss Django's ergonomic ORM, which handles many performance considerations under the hood; we came to appreciate this even more while migrating our Django models to MikroORM entities.

Gained: MikroORM

MikroORM proved to be a pleasant discovery during this transition. Although we still prefer the Django ORM, MikroORM surprised us with Django-like lazy loading, a migration system superior to Prisma's, and a reasonably ergonomic API once properly configured. We are happy with our choice of MikroORM over Prisma for our current needs.

Lost: The Python Ecosystem

The Python ecosystem remains dominant for Machine Learning and AI development. While many RAG and agent-building tools offer both Python and TypeScript SDKs, Python often takes precedence, especially for deeper ML work beyond API wrappers. We anticipate needing a dedicated Python service in the future as our ML capabilities become more sophisticated, but for now, our Node.js stack suffices.

Gained: Unified Codebase

The migration allowed us to merge what would have been separate Python and Node.js services into a single Node.js codebase. This unification was a significant benefit, eliminating duplicate logic between our Django server and Node.js worker. Both can now leverage the ORM and share utilities, a considerable improvement over the worker previously running raw SQL.
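As an illustration of the kind of logic that can now live in one place, here is a hypothetical character-based chunking helper that both the Express server and the worker could import from a shared module. The function name, the fixed-size-with-overlap strategy, and the parameters are assumptions for this sketch, not a description of Skald's actual chunker.

```typescript
// Hypothetical shared utility. In a real project this would be exported
// from a shared module and imported by both the API server and the worker,
// so the chunking rules are defined exactly once.
function chunkText(text: string, size: number, overlap: number): string[] {
  if (!Number.isInteger(size) || size < 1) {
    throw new RangeError("size must be a positive integer");
  }
  if (!Number.isInteger(overlap) || overlap < 0 || overlap >= size) {
    throw new RangeError("overlap must be an integer in [0, size)");
  }
  const chunks: string[] = [];
  const step = size - overlap; // how far each new chunk advances
  for (let start = 0; start < text.length; start += step) {
    chunks.push(text.slice(start, start + size));
    if (start + size >= text.length) break; // this chunk reached the end
  }
  return chunks;
}
```

For example, `chunkText("abcdefghij", 4, 1)` yields `["abcd", "defg", "ghij"]`: each chunk repeats the last character of the previous one, and an empty string yields no chunks.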

Gained: Enhanced Testing

The migration prompted us to write a substantially greater number of tests to ensure functionality post-transition. This, along with some necessary refactoring, became a valuable secondary benefit, improving the overall robustness of our system.

The Migration Process

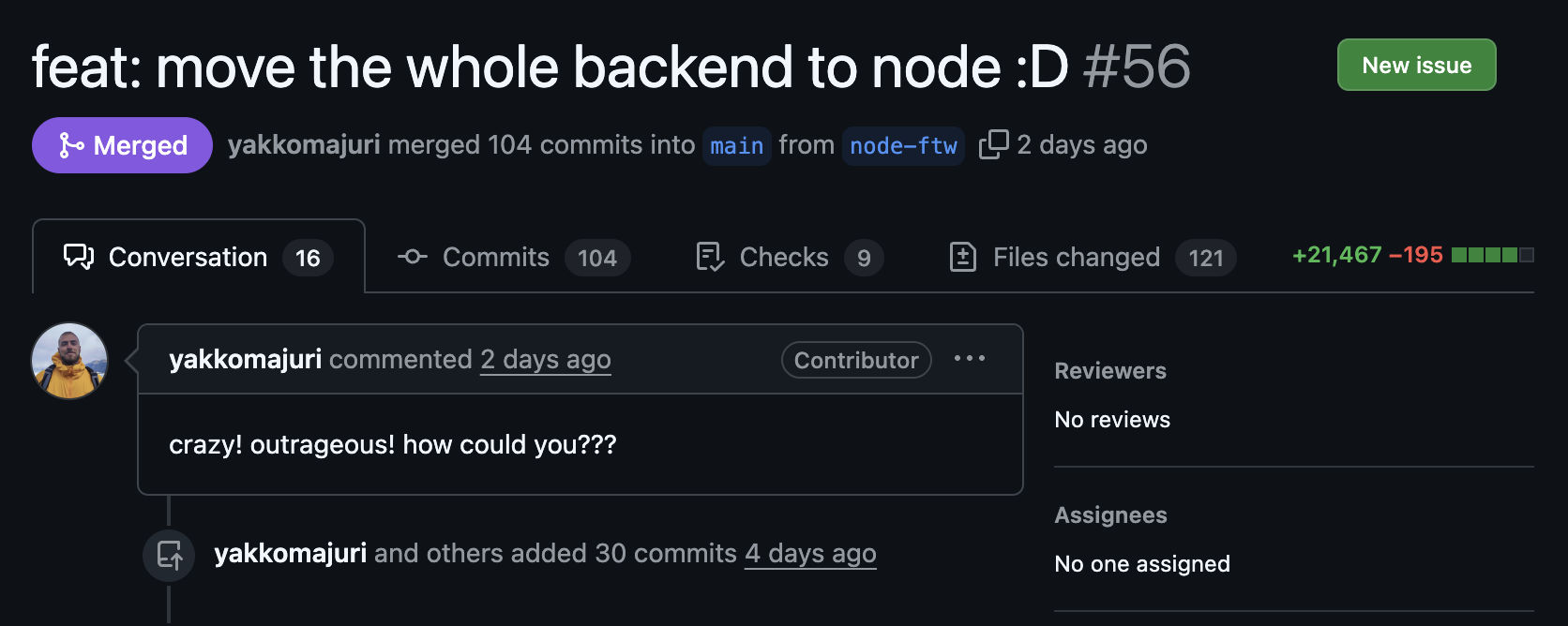

The entire migration was completed in just three days. We intentionally minimized the use of AI code generation in the initial stages to ensure a thorough understanding of our new setup's foundations, particularly MikroORM's internal workings. Once these core components were established, AI tools like Claude Code proved useful for less critical endpoints and for identifying potential issues. The process was challenging, with moments of doubt, especially while balancing customer feature requests and bug fixes against the migration effort.

Conclusion

Despite the unconventional timing, we are highly satisfied with the decision to rewrite our backend. We believe it will yield long-term benefits and is already showing positive returns. The process itself was a valuable learning experience, deepening our understanding of backend architectures and asynchronous programming. We welcome any insights or alternative perspectives on Python async from more experienced developers, as continuous learning remains a core value.