Exploring Vlang: A Developer's Perspective on its Features and Challenges Against Go

A developer explores Vlang's modern features like enums, lambdas, and improved structs, comparing its syntax and performance with Go, and discusses encountered challenges.

A Little Bit About Go

I like Go. I actually don’t mind writing if err != nil that much; just set up a snippet, and you’re good to Go. However, I never truly felt a "honeymoon period" with Go. I learned the language and its channels, and I wrote various CRUD apps, parsers, and CLIs. It always felt strictly business-like. I initially thought this was due to my career stage, but I was mistaken.

Go is vanilla. It just works. You build it, you ship it. The language is simple, and you don’t need to try hard to make it performant.

But sometimes, you just want a little spice 🌶️🍜

Do you ever wonder what else is out there? Hobby programming is a great concept, but it feels like we’re under too much pressure to produce the next unicorn SaaS with 10 million monthly active users.

You don’t have to pick a tool and then find the right job for it. You can just grab a hammer and start smashing stuff. The same nails you’ve smashed before might feel different if you smash them with another hammer. Pick a Rusty hammer, and you might end up obsessed with the importance of health and safety.

So, What the Heck is Vlang?

I might have shot myself in the foot with the hammer analogy, so let’s talk about ice cream. Here’s the gist: vanilla, drizzle some chocolate on top, peanuts? Sure, why not. You know this taste, you like it, and it comes with more stuff on top. If you like vanilla, you might like vanilla++.

That’s how I see the current state of V. The syntax is similar to Go. It has extra features. Its core is similar; you can cross-compile, you have concurrency (which is also parallelism), channels, and message passing. Oh, and defer as well. All my bros love using defer.

Anyway, let’s see some cool stuff.

Check out the official Vlang website: https://vlang.io

Maps

// So simple!
simple_languages := {
    "elixir": {
        "score": 100,
        "width": 30
    }
}

// Alternatively
mut languages := map[string]map[string]int{
    "elixir": {
        "score": 100,
        "width": 30
    }
}
languages["elixir"] = {"score": 100}
languages["elixir"]["width"] = 30

Pretty cool! Much like Go, maps require a fixed value type, so dynamic data such as arbitrary JSON objects needs either a DTO or a type switch.
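Here’s a minimal Go sketch of the DTO versus type switch point, decoding the same elixir entry both ways. LanguageStats, decodeDTO, and scoreViaTypeSwitch are hypothetical names. The only library it uses is encoding/json from the standard library.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// LanguageStats is a hypothetical DTO matching the map shape used above.
type LanguageStats struct {
	Score int `json:"score"`
	Width int `json:"width"`
}

// decodeDTO unmarshals into a fixed, typed structure.
func decodeDTO(data []byte) (map[string]LanguageStats, error) {
	var out map[string]LanguageStats
	err := json.Unmarshal(data, &out)
	return out, err
}

// scoreViaTypeSwitch decodes into untyped values and checks dynamic types by hand.
func scoreViaTypeSwitch(data []byte, lang string) (int, error) {
	var raw map[string]any
	if err := json.Unmarshal(data, &raw); err != nil {
		return 0, err
	}
	entry, ok := raw[lang].(map[string]any)
	if !ok {
		return 0, fmt.Errorf("no object for %q", lang)
	}
	switch v := entry["score"].(type) {
	case float64: // encoding/json decodes JSON numbers into float64 when the target is any
		return int(v), nil
	default:
		return 0, fmt.Errorf("unexpected score type %T", v)
	}
}

func main() {
	data := []byte(`{"elixir": {"score": 100, "width": 30}}`)
	stats, err := decodeDTO(data)
	if err != nil {
		panic(err)
	}
	fmt.Println(stats["elixir"].Score)
	score, err := scoreViaTypeSwitch(data, "elixir")
	if err != nil {
		panic(err)
	}
	fmt.Println(score)
}
```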

Okay, but what about error handling?

elixir_score := languages["elixir"]["score"] or { -1 }

if racket := languages['racket'] {
    println('racket score ${racket['score']}')
    racket_width := racket['width'] or { 0 }
    println('racket width ${racket_width}')
}

// Another way to skin the cat
if 'haskell' in languages {
    if 'score' !in languages['haskell'] {
        println('where is my haskell score??')
    }
}

// Zero values for missing keys
languages['this_dont_exist'] // {}
languages['this_dont_exist']['score'] // 0
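For comparison, here’s roughly what the same lookups look like in Go, using the comma-ok idiom. lookupOr is a hypothetical helper standing in for V’s or { fallback } blocks. Go has no equivalent syntax, so the fallback has to live in a function.

```go
package main

import "fmt"

// lookupOr mimics V's `m[k] or { fallback }`: the comma-ok form tells a
// missing key apart from a stored zero value.
func lookupOr(m map[string]map[string]int, lang, field string, fallback int) int {
	inner, ok := m[lang]
	if !ok {
		return fallback
	}
	v, ok := inner[field]
	if !ok {
		return fallback
	}
	return v
}

func main() {
	languages := map[string]map[string]int{
		"elixir": {"score": 100, "width": 30},
	}
	fmt.Println(lookupOr(languages, "elixir", "score", -1)) // 100
	fmt.Println(lookupOr(languages, "racket", "width", 0))  // 0

	// V: if racket := languages['racket'] { ... }
	if racket, ok := languages["racket"]; ok {
		fmt.Println("racket score", racket["score"])
	}

	// V: 'score' !in languages['haskell']
	if haskell, ok := languages["haskell"]; ok {
		if _, ok := haskell["score"]; !ok {
			fmt.Println("where is my haskell score??")
		}
	}

	// Reading a missing key yields the zero value; reading from a nil inner map is safe.
	fmt.Println(len(languages["this_dont_exist"]))     // 0
	fmt.Println(languages["this_dont_exist"]["score"]) // 0
}
```

Neither language panics on a missing key; both hand back the zero value. The difference is that V lets you attach the fallback right at the index expression.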

Don’t you miss the spread operator?

languages_with_racket_ocaml := {
    ...languages
    'racket': {'score': 99}
    'ocaml': {'score': 98}
}
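Go has no spread literal for maps. The closest standard-library tool I know of is maps.Copy (Go 1.21+), so this sketch merges into a fresh map. withExtra is a hypothetical name, and in this sketch entries from extra overwrite matching keys from base. It’s also a shallow copy: the inner maps are shared with the original. I haven’t checked how V’s spread handles nested maps.

```go
package main

import (
	"fmt"
	"maps"
)

// withExtra returns a new map holding base's entries plus extra's;
// on duplicate keys, extra wins. Inner maps are shared, not cloned.
func withExtra(base, extra map[string]map[string]int) map[string]map[string]int {
	out := make(map[string]map[string]int, len(base)+len(extra))
	maps.Copy(out, base)
	maps.Copy(out, extra)
	return out
}

func main() {
	languages := map[string]map[string]int{"elixir": {"score": 100}}
	combined := withExtra(languages, map[string]map[string]int{
		"racket": {"score": 99},
		"ocaml":  {"score": 98},
	})
	fmt.Println(len(combined))  // 3
	fmt.Println(len(languages)) // 1: the original map is untouched
}
```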

Learn more about Vlang maps: https://docs.vlang.io/v-types.html#maps

Struct-licious

module main

struct Language {
pub mut:
    score int = -1
    name  string @[required]
}

fn (lr []Language) total() int {
    mut total := 0
    for l in lr {
        if l.score > 0 {
            total += l.score
        }
    }
    return total
}

fn (lr []Language) average() int {
    // guard against dividing by zero on an empty array
    if lr.len == 0 {
        return 0
    }
    return lr.total() / lr.len
}

fn main() {
    racket := Language{98, 'racket'}
    // Simple arrays too!
    langs_arr := [racket, Language{102, 'ocaml'}]
    println(langs_arr)
    println(langs_arr.total())
    println(langs_arr.average())
}

Isn’t that cool? We can have receiver methods on array types. Wait, did you see that? The name field carries a @[required] attribute, so the program won’t compile if you create a Language without setting it. That’s another cool thing I wish Go had. Then there’s the default value. In Go, every struct field starts at its type’s zero value, which is predictable but fixed. V lets you declare an explicit default per field. This came in very handy for my case!
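Go can’t express either trick directly. Here’s a sketch of the usual workaround, assuming the same score default of -1 and a mandatory name. NewLanguage and the Languages type are hypothetical. Go only accepts methods on a named type, so a receiver like (lr []Language) is rejected. The required check also runs at runtime instead of compile time, and nothing stops a caller from writing Language{} directly.

```go
package main

import (
	"errors"
	"fmt"
)

type Language struct {
	Score int
	Name  string
}

// NewLanguage is a hypothetical constructor that enforces the "required"
// name and applies the -1 default, since Go structs have neither feature.
func NewLanguage(name string) (Language, error) {
	if name == "" {
		return Language{}, errors.New("name is required")
	}
	return Language{Score: -1, Name: name}, nil
}

// Languages is a named slice type; Go does not allow methods on []Language itself.
type Languages []Language

func (lr Languages) Total() int {
	total := 0
	for _, l := range lr {
		if l.Score > 0 {
			total += l.Score
		}
	}
	return total
}

func (lr Languages) Average() int {
	if len(lr) == 0 {
		return 0 // avoid integer division by zero
	}
	return lr.Total() / len(lr)
}

func main() {
	racket := Language{Score: 98, Name: "racket"}
	langs := Languages{racket, {Score: 102, Name: "ocaml"}}
	fmt.Println(langs.Total())   // 200
	fmt.Println(langs.Average()) // 100
	if _, err := NewLanguage(""); err != nil {
		fmt.Println(err) // name is required
	}
}
```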

@[xdoc: 'Server for GitHub language statistics']
@[name: 'v-gh-stats']
struct Config {
mut:
    show_help bool = false @[long: help; short: h; xdoc: 'Show this help message']
    user string = os.getenv('GH_USER') @[long: user; short: u; xdoc: 'GitHub username env $GH_USER']
    token string = os.getenv('GH_TOKEN') @[long: token; short: t; xdoc: 'GitHub personal access token env $GH_TOKEN']
    debug bool = os.getenv('DEBUG') == 'true' @[long: debug; short: d; xdoc: 'Enable debug mode env $DEBUG']
    cache bool = os.getenv('CACHE') == 'true' @[long: cache; short: c; xdoc: 'Enable caching env $CACHE']
}

This struct defines the flags for running my SVG generation server. You can set each field with a command-line flag, and if you don’t pass the flag, it falls back to the corresponding environment variable. Neato!

More on Vlang structs: https://docs.vlang.io/structs.html

WithOption Pattern

Ah, yes, another thing I had to put up with in Go. To be honest, I ended up liking the pattern quite a bit. Go has no default parameter values, so you reach for variadic functions instead. You end up with an options struct whose zero value means "not set". You pass it through a few functions until one last giant private receiver function creates the struct, fills in the values, and finally builds and validates the result. Imagine a SQL repository pattern where you want to perform a List operation but optionally join, or require some field to be present in a query. Let’s see how we can cook this in V.

module main

import time

@[params]
struct ListOption {
pub mut:
    created_since time.Time
}

@[params]
struct HeroListOption {
    ListOption
pub mut:
    universe string
    name     ?string
}

struct Hero {}

struct Repo[T] {}

struct Villain {}

fn (r Repo[T]) list(o ListOption) ![]T {
    $if T is Villain {
        return error("whoops you found Villain somehow but it's not implemented yet")
    }
    return error('whoops not implemented for ${T.name} use one of (Hero, ...)')
}

fn (r Repo[Hero]) list(o HeroListOption) ![]Hero {
    // orm.build_query() and r.psql() stand in for your query builder and
    // database call; neither is defined in this snippet
    mut query := orm.build_query()
    if o.universe != '' {
        query.eq('universe', o.universe)
    }
    if o.created_since.unix() > 0 {
        query.gt('created_since', o.created_since)
    }
    // unwraps the ?string only when it holds a value
    if name := o.name {
        query.eq('name', name)
    }
    return r.psql(query.do()!)!
}

fn main() {
    r := Repo[Villain]{}
    r.list() or { println(err) }

    hero_repo := Repo[Hero]{}
    hero_repo.list()!
    hero_repo.list(name: 'bruce')!
    hero_repo.list(name: 'bruce', universe: 'dc')!
    hero_repo.list(name: 'bruce', universe: 'marvel')!
    hero_repo.list(created_since: time.Time{year: 1996})!
}

There’s a lot to unpack here. Let’s start with @[params], which tells the V compiler that the trailing struct argument can be omitted entirely. You can call the function with no arguments, and it will still work. Secondly, since generics are resolved at compile time, we can use compile-time reflection to check the name of the type itself. See the compile-time reflection link below to see what is possible: you can check for field existence, field types, and attributes (remember @[required]?).

Alright, we keep seeing this bang (!) everywhere. So, what is it? Short answer: a Result type. Medium answer: (int, err) -> !int. You don’t need the long answer. The bang propagates the error up to the caller, but you must remember to handle it somewhere, or it will eventually cause a panic. Finally, the option type (?string). I purposely used it for only one of the fields to show that it can be done; you can decide how you want to write your optionals. But damn! It feels great!
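To show what the bang saves you, here’s a Go sketch of the same shapes:

- a function returning (int, error) where V would return !int,
- an explicit fallback where V would write or { n },
- a (value, ok) pair as one way to model an option.

parseCount, countOr, and optionalArg are hypothetical names. The argument handling loosely mirrors the strconv.atoi(os.args[1]) or { n } line in the benchmark code later in this post.

```go
package main

import (
	"fmt"
	"os"
	"strconv"
)

// parseCount is the Go shape of a V function returning !int.
func parseCount(s string) (int, error) {
	n, err := strconv.Atoi(s)
	if err != nil {
		// In V, a trailing ! on the call site would propagate this for you.
		return 0, fmt.Errorf("parse count %q: %w", s, err)
	}
	if n < 0 {
		return 0, fmt.Errorf("count must be non-negative, got %d", n)
	}
	return n, nil
}

// countOr plays the role of V's `parse_count(s) or { fallback }`.
func countOr(s string, fallback int) int {
	n, err := parseCount(s)
	if err != nil {
		return fallback
	}
	return n
}

// optionalArg models an option as a value plus an "is set" flag.
func optionalArg(args []string, i int) (string, bool) {
	if i < len(args) {
		return args[i], true
	}
	return "", false
}

func main() {
	n := 100
	if arg, ok := optionalArg(os.Args, 1); ok {
		n = countOr(arg, n)
	}
	fmt.Println(n)
}
```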

Reference Go's functional options: https://github.com/uber-go/guide/blob/master/style.md#functional-options

More on Vlang trailing struct arguments: https://docs.vlang.io/structs.html#trailing-struct-literal-arguments

Explore Vlang compile-time reflection: https://docs.vlang.io/conditional-compilation.html#compile-time-reflection

Understand Vlang optional and result types: https://docs.vlang.io/type-declarations.html#optionresult-types-and-error-handling

Enums??? In This Economy?

Enums are so back, baby. We can totally replace the previous section’s universe field as such:

enum Universe {
    dc
    marvel
    nil
}

// every branch returns a string, so no ? is needed on the return type
fn (u Universe) str() string {
    return match u {
        // V knows the enum; there's no need to type Universe.dc
        .dc { 'dc' }
        .marvel { 'marvel' }
        else { '' }
    }
}

@[params]
struct HeroListOption {
    ListOption
pub mut:
    universe Universe = .nil
    name     ?string
}

fn (r Repo[Hero]) list(o HeroListOption) ![]Hero {
    ...
    if o.universe != .nil {
        query.eq('universe', o.universe.str())
    }
    ...
}

fn main() {
    hero_repo := Repo[Hero]{}
    hero_repo.list(name: 'bruce', universe: .dc)!
    // functions that don't expect the enum type require the full path
    // automatic str() conversion here: compare Go's fmt.Stringer, or your __str__ / __toString()
    println('${Universe.dc}')
}

An option type (?Universe) might be better here than a .nil sentinel. I’m okay with this, though. There are also backed enums, but they can only be backed by integers. Did you also notice? Receiver methods on an enum, baby.

Dive into Vlang enums: https://docs.vlang.io/type-declarations.html#enums

Lambda; The Best Kind of Lamb

Arrays come with a set of built-in methods, like the basic filter and map. There is also a standard library module called arrays (which you need to import) that provides more complex functions, like fold. I don’t know about you, but I am chuffed this exists.

import math

fn example() {
    // type hinting here to skip typing Universe.*
    mut universes := []Universe{}
    universes = [.dc, .marvel, .nil, .dc]
    dcs_or_marvel := universes.filter(it != .nil)
    nils := universes.filter(|u| u == .nil)
    // sorting in place needs a mutable array
    mut nums := [5, 2, 1, 3, 4]
    nums.sort(a < b)
    // sorted() returns a sorted copy instead
    sorted := [5, 2, 1, 3, 4].sorted(a < b)
}

struct XY {
    x int
    y int
}

fn (xy XY) dist_from_origin() f64 {
    return math.sqrt((xy.x * xy.x) + (xy.y * xy.y))
}

fn example2() {
    mut xys := [XY{1, 2}, XY{10, 20}, XY{-1, -69}]
    xys.sort(a.dist_from_origin() < b.dist_from_origin())
    y_asc := xys.sorted(a.y < b.y)
}

There are a few caveats here. You have to make sure the method you’re calling actually accepts the it or a < b shorthand, whereas a lambda expression will work anywhere a function is accepted as an argument. However, you can’t assign a lambda to a variable, as in x_asc := |a, b| a.x < b.x. Still, neat. Use the LSP to check what is accepted.

More on Vlang lambdas: https://docs.vlang.io/functions-2.html#lambda-expressions

Explore Vlang arrays: https://modules.vlang.io/builtin.html#array and https://modules.vlang.io/arrays.html

Some Issues I’ve Encountered

As fun as it has been learning the language and building an SVG service, it is not without problems. The language is on the immature side of things. It has had some time to cook since I last tried it in 2023, and I like it even more. Let’s discuss some of the problems I’ve personally encountered.

net.http

When I was trying to call the GraphQL endpoint using the net.http module, I ran into an issue where the request would instantly time out. A reported network issue described what was happening in my case precisely, and adding the flag -d use_openssl completely fixed my problem. The flag seems to be needed when building for Ubuntu 22.04. When I built the executable for Windows 11, I did not need it.

If you are wondering what the -d flag is about, it is a flag for compile-time code branching. See https://docs.vlang.io/conditional-compilation.html#compile-time-code for more.

Further details on Vlang's net.http module: https://modules.vlang.io/net.http.html

veb

Another weird quirk I ran into with the veb HTTP server is its refusal to build when I tried to use gzip. Take a look at this build error message:

/root/.local/v/vlib/veb/middleware.v:129:11: error: field `Ctx.return_type` is not public
  127 |         handler: fn [T](mut ctx T) bool {
  128 |             // TODO: compress file in streaming manner, or precompress them?
  129 |             if ctx.return_type == .file {
      |                    ~~~~~~~~~~~
  130 |                 return true
  131 |             }

What do you think the issue could be? Maybe my version of the language was outdated, or my build was faulty? I purged the local V install and got a fresh version straight from the master branch. Yet the issue persisted. Another -d flag, perhaps?

Luckily for me, somebody already posted about this issue on GitHub; unluckily for me, I didn’t search the error message first (whoops). Well, I can’t really tell you what the issue is since I haven’t delved into V’s codebase itself. But I can tell you the resolution.

In my main.v, since I was messing around with servers and running main with arguments, I needed to import both modules. This was the head -n5 of my erroneous file:

module main

import os
import veb

The suggested fix?

module main

import veb
import os

Wow! The code now compiles! From a beginner’s perspective, I have no clue why the order of imports would affect code in a different module. Namespaces should be sacred and completely independent of each other, and the order of imports should not matter at all. The two modules seem unrelated, so what the heck happened?

Learn about Vlang's veb module: https://modules.vlang.io/veb.html

Related gzip issue on Vlang GitHub: https://github.com/vlang/v/issues/20865#issuecomment-1955101657

More Complex Build System

I alluded to this earlier: there is a cost to using V over Go. V’s main backend compiles to C, and this comes with complexity. There are a bunch of performance optimizations you can apply when building the binary itself. Binaries are dynamically linked by default, and static linking is opt-in through C flags. This is a double-edged sword; with Go, you get what you get. With V, I got what I got, but I wonder if what I got can be gotten differently.

This might also complicate cross-compilation; the Go team has done a lot of work to ensure things work across different architectures and OSes. I’ve only tried compiling to Windows and Linux using the static flag. Here’s my build command:

v -prod -compress -d use_openssl -cflags '-static -Os -flto' -o main .

The -d use_openssl flag would also have to be conditional on the target. I’d probably have to spend time learning what’s possible for macOS too. I know macOS is supported, since V’s GitHub Actions page contains CI pipelines for it. Still, I would personally need to check whether my specific implementation, import order, and -d flags need to change on that system.

This is the one big point I have to give to Go. They really have the “just works” philosophy down.

Check Vlang CI pipelines: https://github.com/vlang/v/actions

Further reading on Vlang performance optimization: https://docs.vlang.io/performance-tuning.html

Concurrency

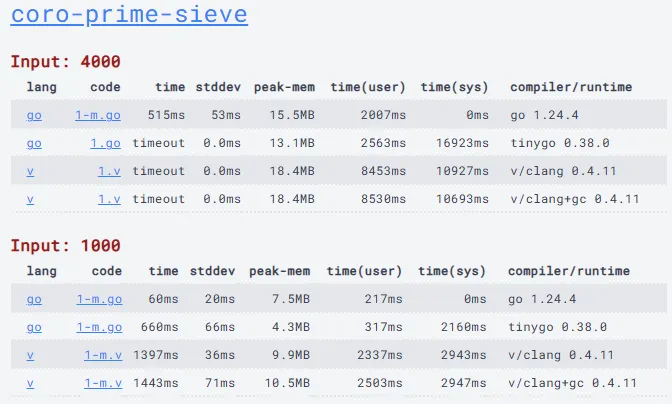

I wondered how V’s concurrency performance compares to Go’s. The model is almost identical (which is good), but surely the implementation details differ. Luckily, an existing programming language benchmark already answers my questions.

View the V vs Go concurrency benchmark: https://programming-language-benchmarks.vercel.app/v-vs-go

Since I brought up concurrency, let’s take a look at the code to see the implementation.

module main

import os
import strconv

fn main() {
    mut n := 100
    if os.args.len > 1 {
        n = strconv.atoi(os.args[1]) or { n }
    }
    mut ch := chan int{cap: 1}
    spawn generate(ch)
    for _ in 0 .. n {
        prime := <-ch
        println(prime)
        ch_next := chan int{cap: 1}
        spawn filter(ch, ch_next, prime)
        ch = ch_next
    }
}

fn generate(ch chan int) {
    mut i := 2
    for {
        ch <- i++
    }
}

fn filter(chin chan int, chout chan int, prime int) {
    for {
        i := <-chin
        if i % prime != 0 {
            chout <- i
        }
    }
}

Go's Sieve of Eratosthenes implementation: https://github.com/hanabi1224/Programming-Language-Benchmarks/blob/main/bench/algorithm/coro-prime-sieve/1.go

Vlang's Sieve of Eratosthenes implementation: https://github.com/hanabi1224/Programming-Language-Benchmarks/blob/main/bench/algorithm/coro-prime-sieve/1.v

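TL;DR: It finds prime numbers by chaining filters over channels. A generator emits 2, 3, 4, and so on. Each time a new prime comes out of the end of the chain, a new filter is spawned that drops multiples of that prime. Every candidate n therefore passes through one filter per previously found prime, and each filter checks whether n is divisible by its prime.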

It seems weird to me that V’s version times out in that benchmark even though both implementations look almost identical. So I ran the benchmark on my local machine. Here’s my justfile to run the benchmark using everything I know so far about optimizing V.

default:
    v -prod -gc boehm_full_opt -cc clang -cflags "-march=broadwell" -stats -showcc -no-rsp -o main_v 1.v
    go build -o main_go ./main.go
    hyperfine './main_v 100' './main_go 100' -N

And the result:

Benchmark 1: ./main_v 100
  Time (mean ± σ):     32.1 ms ±  2.9 ms    [User: 42.6 ms, System: 166.4 ms]
  Range (min … max):   22.1 ms … 40.7 ms    99 runs

Benchmark 2: ./main_go 100
  Time (mean ± σ):      1.8 ms ±  0.2 ms    [User: 2.3 ms, System: 0.3 ms]
  Range (min … max):    1.2 ms …  3.1 ms    1471 runs

Summary
  './main_go 100' ran 18.18 ± 2.81 times faster than './main_v 100'

The gap widens even further when we run N=1000:

Benchmark 1: ./main_v 1000
  Time (mean ± σ):      1.189 s ±  0.340 s    [User: 4.410 s, System: 8.144 s]
  Range (min … max):    0.806 s …  1.830 s    10 runs

Benchmark 2: ./main_go 1000
  Time (mean ± σ):     13.4 ms ±  2.4 ms    [User: 132.5 ms, System: 12.3 ms]
  Range (min … max):    8.6 ms … 21.2 ms    182 runs

Summary
  './main_go 1000' ran 88.54 ± 29.90 times faster than './main_v 1000'

Looking at the profile of the N=100 run, we can see exactly what happened:

▶ cat prof.txt | sort --key 2n -n | tail -n 10
    202      0.256ms    -1.819ms     1267ns  sync__new_spin_lock
    404      0.064ms    -2.664ms      158ns  sync__Semaphore_init
   4387  10644.653ms   540.655ms  2426408ns  sync__Semaphore_wait
   8128   5572.567ms   739.231ms   685601ns  sync__Channel_try_push_priv
   8172   9062.871ms   941.089ms  1109015ns  sync__Channel_try_pop_priv
  15959    406.167ms    87.435ms    25451ns  sync__Semaphore_post
  16160      6.993ms   -38.159ms      433ns  sync__SpinLock_lock
  16174      3.412ms     0.754ms      211ns  sync__SpinLock_unlock
1766049    380.257ms  -434.470ms      215ns  sync__Semaphore_try_wait

There are over 1.7 million calls to Semaphore_try_wait, and Semaphore_wait itself takes over 10,000 ms in total.

This suggests to me that while concurrency exists and looks similar from the user’s side, V’s channel implementation in its current state is nowhere near as mature or optimized as Go’s.

I like V a lot. The abstraction over the syntax is so nice that it made me enjoy writing code as a whole. It makes me wish that Go could do more with what it has, but you and I know that Go would never. V isn’t without its problems, though. The ecosystem is still quite immature, and the compiler flags take some grokking even if you’re not a performance maximalist. In my opinion, the issue comes down to maturity. Given enough time and contributors, I believe the language will bloom beautifully. The syntax conveniences already had me sold. I know AI can write boilerplate, but it feels good not to need it at all and to write everything myself.

V has come a lot further than when I tried it in 2023. I’ll be actively using it from now on since my main job in Go leaves me wishing for more from time to time. If you enjoy Go, it’s worth checking out. Life is too short to mainline one language. Oh, and check out my SVG service: ktunprasert/v-github-stats

See a live demo of the SVG service: https://app.kristun.dev/stats/?num_languages=10&num_repos=35