Improving Debezium for Scalable Logical Replication in PostgreSQL

Discover how two critical features were contributed to Debezium, enabling robust logical replication for PostgreSQL at scale. This article details solutions for Write-Ahead Log (WAL) growth and complex LSN offset management, crucial for high-throughput event streaming infrastructure.

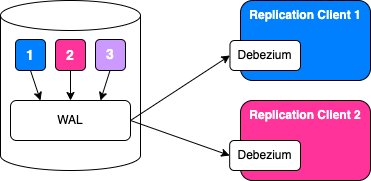

At Zalando, our Fabric Event Streams platform, a Kubernetes-based solution, powers hundreds of event streams by leveraging PostgreSQL logical replication. This platform enables teams to declare event streams directly from their Postgres databases. Each declaration provisions a micro-application utilizing Debezium in embedded mode to publish row-level change events. At peak, these combined connectors process hundreds of thousands of events per second across over 100 Kubernetes clusters.

Operating since late 2018, this infrastructure has processed billions of events. However, achieving this scale required overcoming significant challenges with logical replication, particularly in addressing Write-Ahead Log (WAL) growth. This article details two key features we contributed to Debezium to enhance logical replication at scale.

The WAL Growth Problem Revisited

A few years ago, we encountered a critical issue where low-activity PostgreSQL databases experienced uncontrolled WAL growth. The problem was straightforward: replication slots would not advance without table activity, leading to WAL accumulation that could exhaust disk space. This was our biggest operational hurdle when scaling our event infrastructure, even with heartbeats configured.

This issue was addressed upstream in the PostgreSQL JDBC driver, allowing the driver to respond to keepalive messages from Postgres, thereby advancing the replication slot even when no relevant changes were pending. This fix, deployed in production with Debezium 2.7.4 (pinning to pgjdbc version 42.7.2), successfully eliminated WAL growth for nearly two years, processing billions of events without data loss.

However, a subsequent Debezium upgrade revealed a challenge. A recent pull request had hard-coded the pgjdbc keepalive flush feature to disabled by setting withAutomaticFlush(false) in the replication stream builder. The Debezium team made this change for valid reasons: the feature conflicted with Debezium's own LSN management logic, and users reported issues. For us, this change was a blocker, preventing upgrades without reintroducing WAL growth. We needed a solution that would serve both the broader Debezium community and our verified production use case.

First Contribution: Making LSN Flush Opt-In

We proposed a solution (DBZ-9641) to expose the underlying pgjdbc setting as a connector configuration option, allowing users to opt into this proven-safe feature while maintaining the safer disabled default. The Debezium team was highly receptive.

We submitted PR #6881, introducing a new lsn.flush.mode configuration property. This property replaces and deprecates the existing flush.lsn.source boolean, offering three explicit modes:

manual: LSN flushing is managed externally by your application or another mechanism; the connector does not flush the LSN at all.connector(default): Debezium flushes the LSN after processing each logical replication change event. The PostgreSQL JDBC driver'skeep-alivethread does not flush LSNs.connector_and_driver: Both Debezium and the PostgreSQL JDBC driver'skeep-alivethread can flush LSNs. This mode prevents WAL growth on low-activity databases with infrequent changes to monitored tables. When the connector has no pending LSN to flush, the JDBC driver'skeep-alivemechanism can flush the server-reportedkeep-aliveLSN, reflecting all WAL activity including unmonitored actions likeCHECKPOINT,VACUUM, orpg_switch_wal()that do not produce logical replication change events.

To ensure a smooth transition, we implemented full backward compatibility: true for the deprecated flush.lsn.source maps to connector, and false maps to manual. This allows users time to migrate to the new configuration. This contribution unblocked our upgrade path, but it also prompted us to investigate why others found the feature problematic.

Understanding the Discrepancy: Slot vs. Offset

We reviewed GitHub issues, such as one from Airbyte, illustrating problems where connectors failed on restart with "Saved offset is before replication slot's confirmed lsn" after upgrading pgjdbc (42.7.0+), often forcing full re-syncs.

At Zalando, our approach was somewhat unique. Since launching Fabric Event Streams in 2018, we have consistently treated the PostgreSQL replication slot as the authoritative source of truth for stream position. Using the ephemeral MemoryOffsetBackingStore meant our connectors always deferred to the slot position on startup, so the keepalive flush advancing the slot ahead of a stored offset was never an issue for us.

Our confidence in replication slots stems from our PostgreSQL infrastructure. We have managed PostgreSQL at scale with Patroni for automatic failover since the mid-2010s and later developed the Postgres Operator for PostgreSQL on Kubernetes. From the outset of logical replication in 2018, we implemented robust replication slot management to ensure slots survived failovers, allowing us to trust the slot position as durable and correct.

In contrast, most other Debezium users relied on persistent offset stores (like Kafka Connect's offset topics) to track their position, considering the offset authoritative and the replication slot a PostgreSQL implementation detail. For them, a keepalive flush advancing the slot beyond the stored offset created an irreconcilable conflict, leading to mandatory full re-synchronizations.

The Core Conflict: When Slot and Offset Diverge

The fundamental issue arose from the conflict between the keepalive flush and Debezium's offset management. Logical replication tracks position in two places: Debezium in an offset store (Kafka, memory, etc.) and Postgres on the database server via the replication slot.

Debezium, on startup, compares these positions. By default, if the offset is behind the slot (offset_lsn < slot_lsn), it attempts to stream from the stored offset without validation. If the requested LSN is no longer available in the WAL, PostgreSQL returns an error. An optional internal.slot.seek.to.known.offset.on.start=true configuration offered a stricter "fail fast" policy, immediately failing with "Saved offset is before replication slot's confirmed lsn" for slot recreation scenarios. Neither approach effectively handled the keepalive flush scenario.

The conflict with pgjdbc keepalive flush occurred as follows:

- The JDBC driver advances the slot LSN, skipping unmonitored WAL activity (e.g.,

VACUUM,CHECKPOINT). - The connector has not yet flushed its offset, awaiting the next change event.

- The connector crashes or restarts before flushing its offset.

- On restart,

offset_lsn < slot_lsnbecause the slot advanced beyond the stored offset. - The connector refuses to start, despite no actual data loss.

Users with durable offset stores frequently encountered this with connector_and_driver mode. While keepalive flush prevented WAL growth, Debezium's strict validation rendered it operationally unsafe. We needed a mechanism for users to trust the slot when it was known to be reliable.

Second Contribution: Trusting the Slot Position

We proposed a new approach (DBZ-9688) for offset/slot mismatches by introducing an offset.mismatch.strategy configuration property, inspired by Kafka's auto.offset.reset. This allows Debezium users to opt into trusting the PostgreSQL replication slot's position.

PR #6948 introduced the offset.mismatch.strategy enum, which controls connector behavior when the stored offset LSN differs from the replication slot's confirmed flush LSN. This property replaces and deprecates the existing internal.slot.seek.to.known.offset.on.start boolean, offering four explicit strategies:

no_validation(default): The connector attempts to stream from the stored offset without validating the replication slot state. If the slot is ahead of the offset and the requested LSN is unavailable, PostgreSQL returns an error. This preserves existing default behavior and backward compatibility.trust_offset: The connector validates that the stored offset is not behind the replication slot's confirmed flush LSN, failing with an error if it is (indicating potential data loss). If the offset is ahead of or equal to the slot, the connector advances the slot to the offset position usingpg_replication_slot_advance()when possible. This strategy replacesinternal.slot.seek.to.known.offset.on.start=trueand is useful for detecting and being alerted to unexpected slot state changes.trust_slot: The connector treats the PostgreSQL replication slot as the authoritative source. If the stored offset is behind the slot's confirmed flush LSN, the connector automatically advances its offset to match the slot position, skipping events between the stored offset and the slot position. This is appropriate when usinglsn.flush.mode=connector_and_driver, which requires trusting the slot.trust_greater_lsn: The connector synchronizes to the maximum LSN between the stored offset and the slot's confirmed flush LSN, offering bidirectional synchronization. If the offset is behind the slot, it advances to the slot position. If ahead, the slot advances to the offset position (when possible).

Backward compatibility was ensured: false for the deprecated internal.slot.seek.to.known.offset.on.start maps to no_validation, and true maps to trust_offset.

This provides Debezium operators with the flexibility to align connector behavior with their operational realities. Users configuring lsn.flush.mode=connector_and_driver can pair it with offset.mismatch.strategy=trust_slot for safe, production-ready operation with durable offset stores. It also assists in manual recovery, such as when an operator needs to advance a slot past corrupted WAL using pg_replication_slot_advance(), allowing Debezium to respect this change instead of refusing to start.

Documentation and Gratitude

A crucial aspect of open-source contribution is ensuring feature discoverability and understanding. We collaborated closely with the Debezium team to document both lsn.flush.mode and offset.mismatch.strategy within the PostgreSQL connector documentation, clarifying their relationship and providing usage guidance.

Both features have been merged into Debezium and are available in nightly builds, with an official release anticipated soon. This resolved our upgrade path and will enable safer logical replication for the wider community. The Debezium engineers were exceptionally responsive and collaborative, offering thoughtful engagement, detailed feedback, and helping design solutions beneficial to everyone. This experience underscored the value of open source: problems led to proposed solutions, which maintainers helped refine, ultimately benefiting all users.

Practical Implications for Users

If you are using PostgreSQL logical replication with Debezium, these features can significantly improve your operations:

- Experiencing WAL growth on low-activity databases? Configure

lsn.flush.mode=connector_and_driverpaired withoffset.mismatch.strategy=trust_greater_lsn(for persistent offset stores) to prevent WAL accumulation without dummy writes. Thetrust_greater_lsnstrategy offers bidirectional synchronization and self-healing recovery. - Need to manually advance replication slots past corrupted WAL segments? Use

offset.mismatch.strategy=trust_slotortrust_greater_lsnto recover without re-snapshotting your entire database. - Want maximum safety and to detect unexpected slot changes? Use

offset.mismatch.strategy=trust_offsetto validate that your stored offset is never behind the slot, catching potential data loss scenarios early.

At Zalando, these features are vital for our event streaming infrastructure across hundreds of Postgres databases, and we hope they similarly aid you in building reliable logical replication systems.