Java's Evolving Landscape: A Deep Dive into Deprecated Features

Explore Java's evolving landscape by delving into its deprecated features like Legacy Collections, Finalization, NashornScriptEngine, SecurityManager, and Unsafe. Discover why these 'antiquities' were phased out and what modern, safer alternatives have emerged to enhance Java development.

As Java continues to evolve with new mechanisms and APIs, certain outdated features are gradually being phased out. This article explores several such 'antiquities' – including Vector, Finalization, NashornScriptEngine, SecurityManager, and Unsafe – examining why they became obsolete and what modern alternatives have emerged.

How the Tomb is Filled

Java thrives and expands, accumulating new features, APIs, and modules, yet it periodically sheds its older components. Some parts become redundant, others no longer suit modern requirements, and a few were always intended as temporary workarounds. All these outdated or removed mechanisms quietly and steadily fill Java's "tomb."

Let's delve into some of these.

What's Inside the Tomb?

Legacy Collections

Before the Collections Framework introduced List, Deque, and Map, collections such as Vector, Stack, and Dictionary were commonly used. Unlike other outdated features, these classes are not explicitly marked as deprecated, yet their documentation advises against using them. Across the internet, they are often referred to as "legacy collection classes."

But why are they considered outdated?

Legacy collections primarily suffer from two disadvantages:

- All interaction points with these classes are synchronized.

- The classes lack common interfaces, requiring an individual approach.

Let's elaborate.

The first disadvantage is that all interaction points with these classes are synchronized. This might initially seem like an advantage, as thread safety is generally desirable. However, if these collections are used in a single-threaded context, synchronization merely introduces performance overhead without offering any real safety benefit. Modern Java practice reflects this: developers often prefer to copy collections rather than modify shared ones directly.

The second disadvantage is that classes lack common interfaces and require an individual approach. This leads to the perennial programming problem of non-universal code. Imagine developing with an older Java version, where your code is tightly coupled to Stack. For instance:

Stack<Integer> stack = new Stack<>();

for (int i = 0; i < 5; i++) {

stack.add(i);

}

System.out.println(stack.pop());

This code works fine initially, but picture it expanding to 1,000 lines with 150 references to the stack variable. One day, a performance issue arises (the code blocks, not the customers). You realize Stack uses an array for storage, which is frequently recreated. You theorize that a linked list could boost performance significantly. Eager to test, you find a LinkedList implementation and then face the laborious task of refactoring all 150 references to stack. This is far from ideal.

Now, consider the modern approach:

Deque<Integer> deque = new ArrayDeque<>();

IntStream.range(0, 5)

.forEach(deque::addLast);

System.out.println(deque.pollLast());

Over time, this code also grows to 150 references, but now they point to deque. If you again theorize that a linked list would be faster, you simply change the declaration from ArrayDeque to LinkedList, as both implement the Deque interface. This is all that's required!

This illustrates how the Collections Framework solved the problem of missing common interfaces, helping developers avoid being tied to specific implementations. For example, instead of being locked into Vector, developers can now use the more versatile List interface.
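As a minimal sketch of that last point, the hypothetical sum method below depends only on the List interface, so it accepts ArrayList, LinkedList, and even a legacy Vector (which was retrofitted to implement List). The class and method names are illustrative and not taken from any library.

```java
import java.util.ArrayList;
import java.util.LinkedList;
import java.util.List;
import java.util.Vector;

class ListInterfaceSketch {

    // Depends only on the List interface, so any implementation can be passed in.
    static int sum(List<Integer> numbers) {
        int total = 0;
        for (int n : numbers) {
            total += n;
        }
        return total;
    }

    public static void main(String[] args) {
        List<Integer> source = List.of(1, 2, 3, 4);

        // Swapping the implementation only changes the constructor call.
        System.out.println(sum(new ArrayList<>(source)));  // 10
        System.out.println(sum(new LinkedList<>(source))); // 10
        // Vector implements List too, so legacy data still fits.
        System.out.println(sum(new Vector<>(source)));     // 10
    }
}
```

The caller chooses the implementation, and the rest of the code never needs to know which one it received.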

Finalization

At one point, finalizers (specifically the #finalize() method) seemed like an excellent concept: a method invoked when an object was destroyed, intended to release system resources.

However, in practice, this idea proved problematic:

- The finalizer might not be called at all if a strong reference to the object still existed or if the garbage collector (GC) decided not to reclaim it.

- If the GC did decide to destroy the object, it had to wait for the finalizer's code to execute, potentially delaying collection.

- An object could even "resurrect" itself within its finalizer.

- Worst of all, finalizers created ideal conditions for memory leaks.

Modern Java is highly flexible, with various garbage collector implementations and configurations. Under these conditions, it cannot be guaranteed that an object will ever be deleted. Furthermore, the garbage collector is guaranteed not to collect an instance as long as it has at least one strong reference.

For these reasons, the logic for releasing system resources should not depend on an object's lifecycle managed by the GC. The documentation for the #finalize() method now recommends using the AutoCloseable interface instead. This interface was specifically designed for efficient resource release without relying on the garbage collector. The key is simply not to forget to close resources.
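A minimal sketch of the AutoCloseable approach might look like the following. TrackedResource is a hypothetical stand-in for a file handle, socket, or native buffer; it records events in a list only so that the order of calls is visible.

```java
import java.util.ArrayList;
import java.util.List;

class AutoCloseableSketch {

    // Hypothetical resource; stands in for a file handle, socket, or native buffer.
    static final class TrackedResource implements AutoCloseable {
        private final List<String> events;

        TrackedResource(List<String> events) {
            this.events = events;
            events.add("open");
        }

        void use() {
            events.add("use");
        }

        @Override
        public void close() {
            events.add("close"); // release the underlying resource here
        }
    }

    static List<String> run() {
        List<String> events = new ArrayList<>();
        try (TrackedResource resource = new TrackedResource(events)) {
            resource.use();
        } // close() is called here, even if use() had thrown
        events.add("after try");
        return events;
    }

    public static void main(String[] args) {
        System.out.println(run()); // [open, use, close, after try]
    }
}
```

With try-with-resources, the release happens at a known point in the program rather than at some moment chosen by the garbage collector.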

For scenarios requiring tracking of object deletion, Java provides an alternative: java.lang.ref.Cleaner, which addresses the issues inherent in finalizers.

To illustrate the dangers, let's look at some unsafe code using a finalizer:

class ImmortalObject {

private static Collection<ImmortalObject> triedToDelete = new ArrayList<>();

@Override

protected void finalize() throws Throwable {

triedToDelete.add(this);

}

}

Here, every ImmortalObject instance that the garbage collector attempts to delete is "resurrected" by being added to a static list. As a result, memory consumption keeps growing, and the program will eventually fail with an OutOfMemoryError.

The equivalent code using Cleaner looks like this:

static List<ImmortalObject> immortalObjects = new ArrayList<>();

// ... somewhere in the code ...

var immortalObject = new ImmortalObject();

Cleaner.create()

.register(

immortalObject,

() -> immortalObjects.add(immortalObject)

);

It's important to note that the Runnable acting as the cleaning action here holds a reference to immortalObject. As long as that strong reference exists, the object never becomes unreachable, so the garbage collector never deletes it and the action never runs. There is nothing to resurrect, which avoids the "necromancy" of finalizers, but the object is also never released. This is why the Cleaner documentation warns that a cleaning action must not refer to the object being registered.
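For actual resource cleanup, the usual Cleaner pattern keeps the cleanup state in a separate object that does not reference the registered instance. The sketch below assumes a hypothetical NativeBuffer class and uses an AtomicBoolean in place of a real native handle; it also implements AutoCloseable, so the Cleaner only acts as a safety net if close() is forgotten.

```java
import java.lang.ref.Cleaner;
import java.util.concurrent.atomic.AtomicBoolean;

class CleanerSketch {

    // One shared Cleaner is enough; each Cleaner owns its own daemon thread.
    private static final Cleaner CLEANER = Cleaner.create();

    // Hypothetical owner of a native handle.
    static final class NativeBuffer implements AutoCloseable {

        // The cleanup state lives in a static nested class, so it cannot
        // capture the NativeBuffer instance and keep it reachable.
        private static final class ReleaseAction implements Runnable {
            private final AtomicBoolean released;

            ReleaseAction(AtomicBoolean released) {
                this.released = released;
            }

            @Override
            public void run() {
                released.set(true); // free the native handle here
            }
        }

        private final Cleaner.Cleanable cleanable;

        NativeBuffer(AtomicBoolean released) {
            this.cleanable = CLEANER.register(this, new ReleaseAction(released));
        }

        @Override
        public void close() {
            cleanable.clean(); // runs the action at most once
        }
    }

    public static void main(String[] args) {
        AtomicBoolean released = new AtomicBoolean(false);
        try (NativeBuffer buffer = new NativeBuffer(released)) {
            // work with the buffer
        }
        System.out.println(released.get()); // true
    }
}
```

If close() is never called, the same action runs on the Cleaner's thread at some point after the buffer becomes unreachable, although the timing is not guaranteed.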

Finalizers were often employed when interacting with objects created via JNI outside of Java code (e.g., in C++). However, due to their inherent unsafety, finalizers were eventually replaced with more elegant alternatives.

NashornScriptEngine

Few recall that Java once closely paralleled JavaScript's evolution: JDK 8 included the built-in Nashorn JS engine, allowing developers to execute JavaScript code directly from Java.

You could even implement the popular "banana puzzle" without leaving Java:

new NashornScriptEngineFactory()

.getScriptEngine()

.eval("('b' + 'a' + + 'a' + 'a').toLowerCase();");

While initially a "cool" feature, the reality was that the JDK developers had to maintain a JavaScript engine. Nashorn struggled to keep pace with JavaScript's rapid evolution, and polyglot solutions like GraalVM Polyglot began to emerge. These factors led to the engine being deprecated just three releases later (in Java 11) and finally removed in Java 15.

Why is GraalVM Polyglot superior? While Nashorn implemented JavaScript on top of Java, Polyglot creates an entire JVM-based platform that natively supports multiple languages, including both Java and JavaScript.

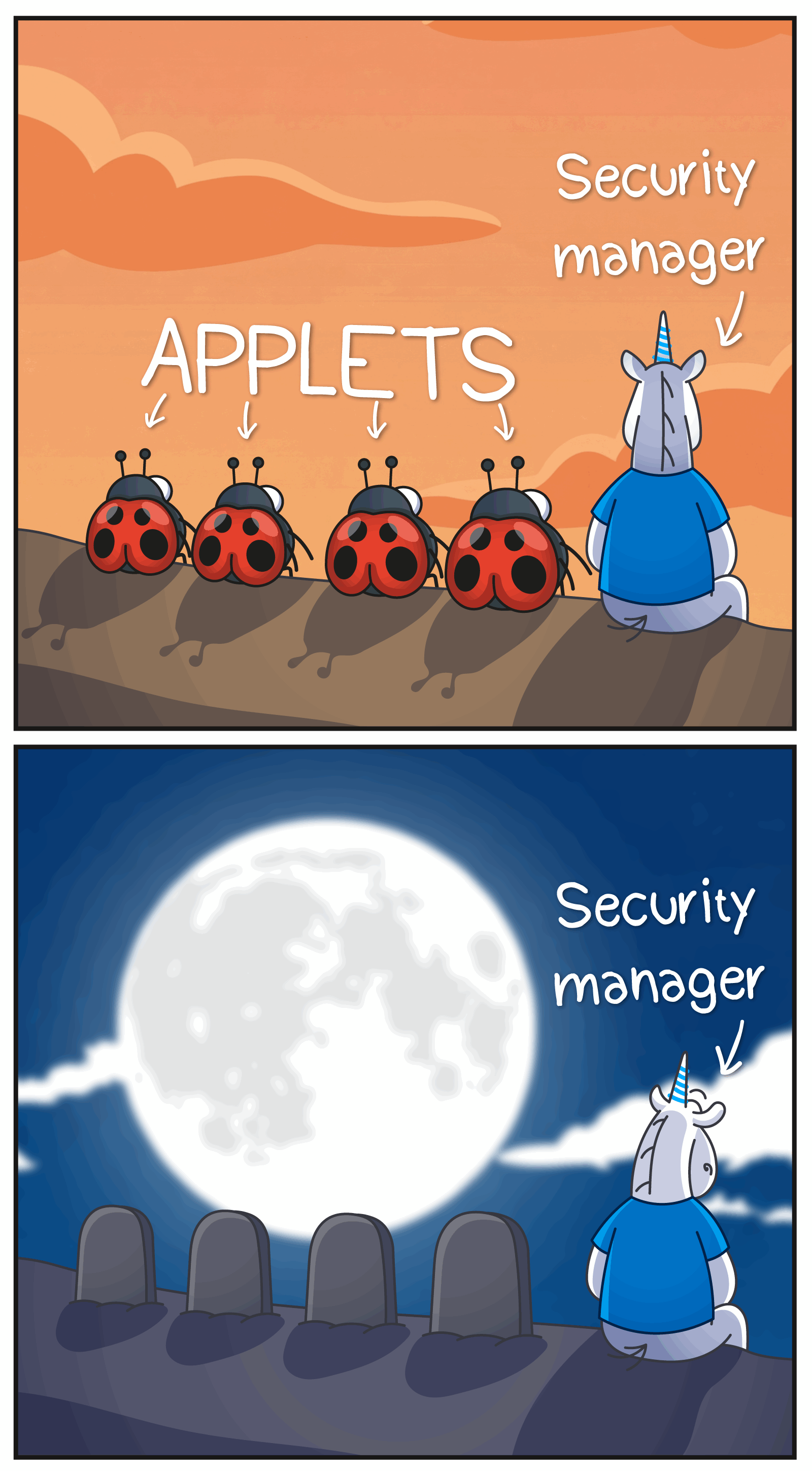

SecurityManager

Meet another veteran that persisted until Java 17: SecurityManager.

During the era of "wild applets," when Java code could run directly in browsers, SecurityManager was crucial for Java application security. An applet was a small program running in a browser, capable of displaying animations, graphics, or interactive elements, but operating under strict security limitations.

However, as time progressed, "wild applets" migrated from browsers to desktops, then eventually died out as a solution. Yet, SecurityManager remained and was frequently utilized in enterprise applications.

But nothing lasts forever, and the SecurityManager eventually faced removal. Java 17 deprecated it for removal, and Java 24 stripped away its entire internal logic, leaving only a facade. Looking at the entry point for installing SecurityManager, you'll now find only a placeholder:

@Deprecated(since = "17", forRemoval = true)

public static void setSecurityManager(SecurityManager sm) {

throw new UnsupportedOperationException(

"Setting a Security Manager is not supported");

}

How did SecurityManager function? It acted as an internal JVM guard, intercepting potentially dangerous operations and verifying that the code behind every frame on the call stack had the necessary permissions to perform such an action.

What exactly did SecurityManager intercept? The list is extensive, but some key areas included:

- File system access

- Network connections

- Creating and terminating processes

- Access to system properties

- Class manipulation and reflection

SecurityManager performed well in the days of browser applets, where untrusted code needed to be sandboxed. However, with the rise of containerization, it became outdated.

Although SecurityManager was initially designed for applets, it found use in other contexts. For instance, Tomcat supported its use in server applications. In the PVS-Studio static analyzer, it helped solve a peculiar problem: if the static class initializer in the analyzed code called System#exit, the analysis process would terminate. SecurityManager allowed blocking such operations when analyzing static fields, a function later replaced by ASM.

Interestingly, even though applets were discontinued earlier than SecurityManager, their removal from the JDK has only recently begun.

The world changes, approaches evolve, and SecurityManager is now a part of Java's past.

Unsafe

Java is literally removing its "unsafety."

Perhaps the most legendary resident of this tomb was sun.misc.Unsafe. This class served as a secret gateway into the JVM's depths, where standard language rules no longer applied. Unsafe could easily crash a Java application with a SIGSEGV error.

How? For example:

class Container {

Object value; // (1)

}

// ✨ some magic to get unsafe unsafely ✨

Unsafe unsafe = ....;

long Container_value =

unsafe.objectFieldOffset(Container.class.getDeclaredField("value"));

var container = new Container();

unsafe.getAndSetLong(container, Container_value, Long.MAX_VALUE); // (2)

System.out.println(container.value); // (3)

In this example, we attempt to set Long.MAX_VALUE (2) into the value field (1), which is declared as an Object. When System.out.println(container.value) (3) is called, the virtual machine will crash because value now contains garbage instead of a valid object reference. This is just one straightforward example of the types of errors Unsafe could enable.

But why was such a dangerous mechanism ever added to Java?

Unsafe wasn't created as an anarchist tool, but as a "savior." In earlier times, before VarHandle and MemorySegment (which we'll discuss shortly), standard libraries needed efficient ways to perform low-level operations such as memory management, atomic operations, or thread blocking. When the language itself prohibited direct access "under the hood," a loophole was created. Unsafe emerged as an internal tool for Java's own needs.

Developers intended its use strictly within Java, and it even had built-in protection against external access to its instance. However, what happened in Java did not stay in Java, and developers found ways to obtain Unsafe, bypassing its protection. Behold the "magic" for acquiring Unsafe in an unsafe manner:

var Unsafe_theUnsafe = Unsafe.class.getDeclaredField("theUnsafe");

Unsafe_theUnsafe.setAccessible(true);

Unsafe unsafe = (Unsafe) Unsafe_theUnsafe.get(null);

This object allowed for extensive manipulation of the virtual machine.

However, it's unfair to solely criticize developers who sought access to what Java tried to hide. Access to Unsafe helped many libraries achieve greater efficiency. For example, the popular Netty library, used for network operations, was compelled to use Unsafe. When a safer replacement became available, Netty promptly switched to it.

So, what are these replacements?

There are two primary successors:

- The Foreign Function & Memory API replaces memory operations.

- The VarHandle API replaces atomic operations.

These APIs are not merely direct method replacements for Unsafe. They are powerful, well-designed tools for safely performing low-level operations. For instance, the Foreign Function & Memory API enables calling native functions without the complexity of JNI.
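As a rough illustration of the atomic-operations side, the sketch below uses a VarHandle to update a field atomically without touching Unsafe. The Counter class and its method names are hypothetical; the VarHandle calls themselves (findVarHandle, getAndAdd, compareAndSet) are part of the standard java.lang.invoke API available since Java 9.

```java
import java.lang.invoke.MethodHandles;
import java.lang.invoke.VarHandle;

class VarHandleSketch {

    // Hypothetical counter whose field is updated through a VarHandle.
    static final class Counter {
        private volatile long value;

        private static final VarHandle VALUE;

        static {
            try {
                // The lookup checks access rights and the field type up front.
                VALUE = MethodHandles.lookup()
                        .findVarHandle(Counter.class, "value", long.class);
            } catch (ReflectiveOperationException e) {
                throw new ExceptionInInitializerError(e);
            }
        }

        long incrementAndGet() {
            // getAndAdd returns the previous value, so add 1 for the new one.
            return (long) VALUE.getAndAdd(this, 1L) + 1L;
        }

        boolean compareAndSet(long expected, long updated) {
            return VALUE.compareAndSet(this, expected, updated);
        }

        long get() {
            return value;
        }
    }

    public static void main(String[] args) throws InterruptedException {
        Counter counter = new Counter();
        Runnable work = () -> {
            for (int i = 0; i < 1_000; i++) {
                counter.incrementAndGet();
            }
        };
        Thread a = new Thread(work);
        Thread b = new Thread(work);
        a.start();
        b.start();
        a.join();
        b.join();
        System.out.println(counter.get()); // 2000
    }
}
```

Unlike the raw offset used with Unsafe, the handle is bound to a specific field and type, so a mismatched access fails with an exception instead of corrupting memory.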

Java's "unsafety" is gradually fading into oblivion, leaving behind new, robust, and well-designed APIs that achieve the same functionality without the risk of a SIGSEGV error.

Why is the Tomb Filled?

Java is maturing, shedding outdated ideas, and becoming safer, cleaner, and simpler. Each new API is not just "adding another module"; it's a more sophisticated way of addressing previous shortcomings.

Simultaneously, this virtual "tomb" plays an important cultural role: it chronicles the evolution of ideas and decisions, reminding us of the journey taken and providing essential context for meaningful platform development.