Reverse Mathematics Illuminates Why Hard Problems Are Hard

Reverse mathematics reveals deep logical equivalences between disparate theorems in computational complexity, providing crucial insights into the inherent difficulty of proving hard problems.

Computational complexity researchers often face significant challenges when confronting problems like finding the shortest route through multiple cities, known as the 'traveling salesperson problem.' Despite decades of effort, a robust mathematical proof demonstrating why such problems are inherently difficult remains elusive.

This pursuit has led to an increased focus on metamathematics, a field that treats the process of mathematical proof as an object of analysis. Metamathematicians scrutinize the foundational assumptions, or axioms, of mathematical systems, altering them to explore their impact on provable theorems. When applied to complexity theory, this approach aims to map the capabilities and limitations of different axiom sets regarding computational difficulty, ultimately seeking to understand the obstacles in proving problem hardness.

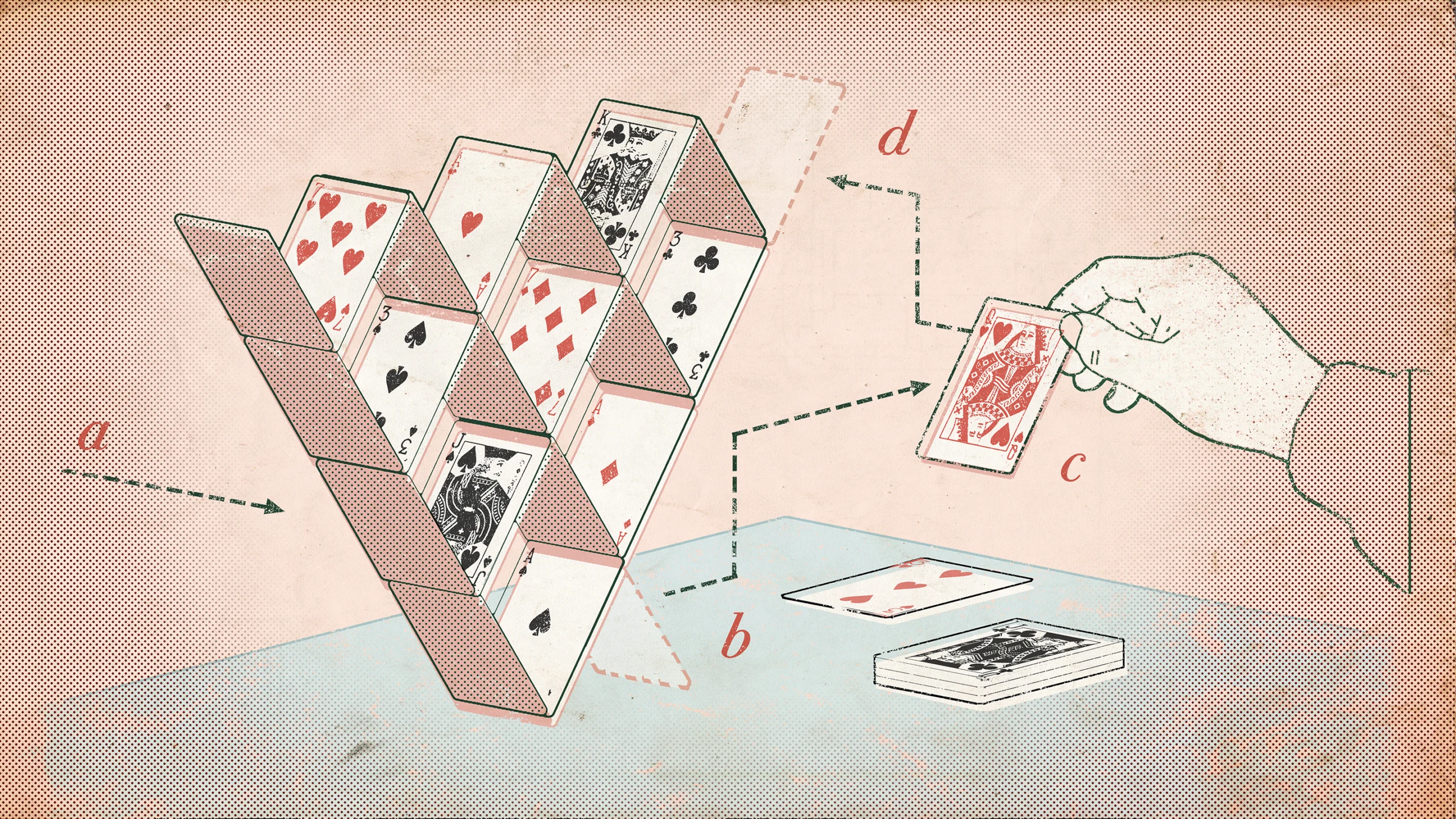

In a recent study, a new approach was introduced by three researchers: reverse mathematics. Instead of traditional axiomatic proofs, they inverted the formula, swapping a theorem for an axiom and then proving the original axiom. This method allowed them to demonstrate that numerous distinct theorems in complexity theory are, in fact, logically equivalent.

In reverse mathematics, researchers replace axioms, the foundations of mathematical systems, with the theorems they want to prove.

In reverse mathematics, researchers replace axioms, the foundations of mathematical systems, with the theorems they want to prove.

This groundbreaking work began with Lijie Chen, a complexity theorist, who dedicated time to studying metamathematics. His inquiry led him to communication complexity, a branch of the field exploring the information exchange needed for tasks. A core problem, the "equality problem," involves two players determining if their separate binary strings are identical with minimal communication. Decades ago, theorists proved that the minimum communication required equals the full string length, establishing a lower bound.

Chen's interest lay not in the lower bound itself, but in its proofs, which universally rely on the pigeonhole principle: if you place more pigeons than holes, at least one hole must contain multiple pigeons. This seemingly simple principle holds surprising power in complexity theory. Chen pondered if the relationship could be inverted: could the equality problem's lower bound be used to prove the pigeonhole principle?

This idea was developed in collaboration with Jiatu Li. To formalize the connection, they chose PV1, a set of weaker axioms preferred by metamathematics researchers for its ability to isolate precise theorem relationships. PV1 is strong enough to prove some complexity theorems, and when supplemented by a specific version of the pigeonhole principle, it can also prove the equality problem's lower bound. Li and Chen formally confirmed that the proof holds even when the two theorems are interchanged.

Lijie Chen conceived a method to invert the relationship between two mathematical theorems.

Lijie Chen conceived a method to invert the relationship between two mathematical theorems.

The mutual provability of the equality problem's lower bound and the pigeonhole principle within the PV1 framework signifies their exact logical equivalence. Expanding on this, the trio, joined by Igor Oliveira, realized their reverse mathematics method could apply to theorems across other areas of complexity theory. Over several months, they systematically established equivalences for many additional theorems, creating a comprehensive web of connections.

Igor Oliveira contributed to proving the equivalence of many distinct theorems.

Igor Oliveira contributed to proving the equivalence of many distinct theorems.

One of their most remarkable findings linked the same version of the pigeonhole principle to a foundational theorem in introductory complexity theory: the lower bound on the time required for a single-tape Turing machine to determine if a binary string is a palindrome. Li, Chen, and Oliveira used reverse mathematics to prove that, within PV1, this palindrome lower-bound theorem is equivalent to the pigeonhole principle. This equivalence is striking due to the theorems' superficial differences; the pigeonhole principle is a counting statement, while the palindrome lower bound describes a specific computational model. This suggests that these seemingly narrow computational theorems possess a more fundamental nature.

A visual representation of the interconnectedness of theorems found through reverse mathematics.

This new network of equivalences also sheds light on the limitations of the PV1 system. Given prior indications that the pigeonhole principle cannot be proven from PV1 axioms alone, these results imply that its equivalent theorems are also likely unprovable within PV1. While reverse mathematics excels at revealing connections between established theorems, its utility in proving currently unproven statements about complexity remains an open question.

Nevertheless, metamathematics is experiencing a resurgence of interest. Researchers, weary of stagnation in computational complexity, are increasingly turning to foundational analysis, bringing fresh perspectives to this historically esoteric field.