Scaling LinkedIn Profiles to 5 Million Reads/Second: Couchbase, Espresso, and Brooklin

Explore LinkedIn's innovative caching architecture handling 5M profile reads/sec with >99% cache hits, 60% latency reduction, and 10% cost savings using Couchbase, Espresso, and Brooklin.

LinkedIn reached a peak of 5 million profile reads per second by implementing a new caching strategy. It did so while keeping the cache hit rate above 99%, reducing tail latency by over 60%, and cutting costs by 10%. This article walks through how LinkedIn combined a Couchbase cache, its in-house Espresso data store, and Brooklin CDC (Change Data Capture) to reach that level of scalability and efficiency.

The Previous Architecture

Before the integration of the Couchbase cache, LinkedIn's profile read architecture operated as follows:

Upon a user's request to view a profile, the Profile frontend application would send a read request to the Profile backend. The backend would then retrieve the necessary data from Espresso, LinkedIn's proprietary NoSQL database, and return it to the frontend. Although this architecture included an off-heap cache (OHC) on the Espresso Router, its effectiveness was limited. The OHC was efficient for frequently accessed 'hot' keys but suffered from a low cache hit rate because it was local to each router instance, only serving requests seen by that specific instance.

This design served its purpose at first but eventually reached its scaling limits. Adding more Espresso nodes yielded diminishing returns, which reflects a common limit of horizontal scaling: at some point, adding capacity stops being cost-effective.

The New Integrated Cache Architecture

To overcome these scaling challenges, LinkedIn designed a new architecture for profile reads, featuring an integrated cache:

The core change was the introduction of a Couchbase cache, positioned external to the Espresso storage nodes. This new setup also incorporates a Cache Updater and a Cache Bootstrapper, alongside Brooklin, LinkedIn’s internal data streaming platform. A significant benefit of this 'Integrated Cache' approach is that Espresso now abstracts away the caching complexities, allowing developers to concentrate on business logic.

It's worth noting that LinkedIn initially explored Memcached but found it unsuitable for their specific environment, contrasting with Facebook's well-documented success with the same technology. This highlights how an organization's unique context can lead to vastly different outcomes with identical tools.

The Read Path Workflow

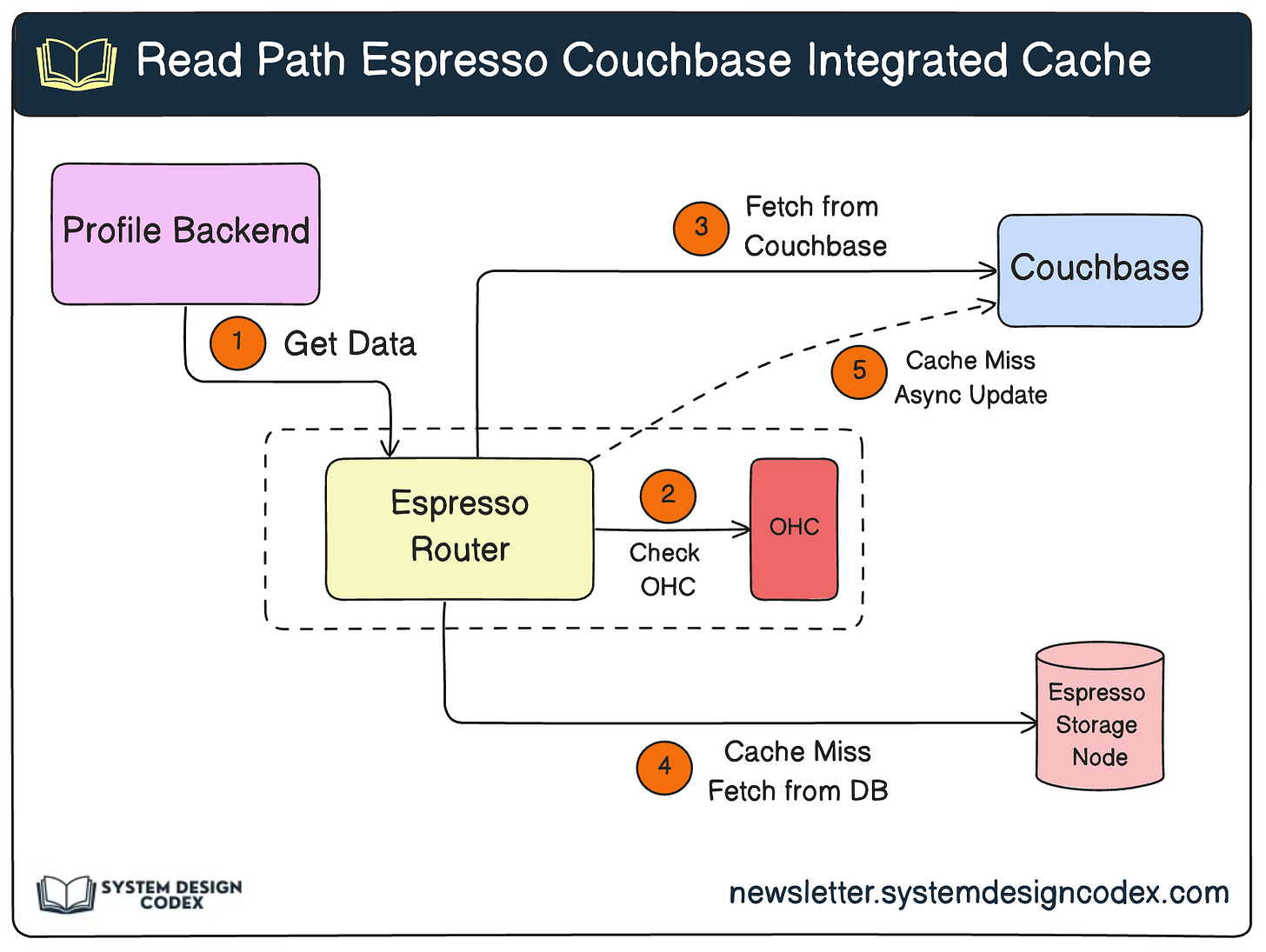

The new read path operates as follows:

- A read request arrives at the Profile backend application and is forwarded to an Espresso router instance.

- The router first checks its local Off-Heap Cache (OHC).

- If the key is not found in the OHC, the request is then sent to the Couchbase cache.

- On a Couchbase cache hit, the data is immediately returned.

- In the event of a Couchbase cache miss, the request falls back to the Espresso storage node, which serves the profile information. The router then returns this data to the backend.

- Finally, the router asynchronously upserts the retrieved data into the Couchbase cache, ensuring subsequent requests for the same data are served faster.
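The steps above can be sketched as a tiered lookup. This is a minimal illustration under stated assumptions, not LinkedIn's implementation: `TieredProfileReader`, `flush_upserts`, and the dict-backed caches are hypothetical stand-ins, and the asynchronous upsert is modeled as a queue that is flushed explicitly.

```python
from typing import Callable, Optional


class TieredProfileReader:
    """Hypothetical stand-in for the read path of one Espresso router."""

    def __init__(self, storage_read: Callable[[str], Optional[dict]]):
        self.ohc = {}            # router-local off-heap cache (simplified to a dict)
        self.couchbase = {}      # shared external cache (simplified to a dict)
        self.storage_read = storage_read  # fallback read from the storage node
        self.pending_upserts = []         # models the asynchronous upsert

    def read(self, key: str):
        """Return (value, tier) where tier names the layer that served the read."""
        if key in self.ohc:
            return self.ohc[key], "ohc"
        if key in self.couchbase:
            return self.couchbase[key], "couchbase"
        value = self.storage_read(key)
        if value is not None:
            # The response is returned first; the cache write happens later.
            self.pending_upserts.append((key, value))
        return value, "storage"

    def flush_upserts(self) -> None:
        """Apply queued upserts (assumption: stands in for the async writer)."""
        while self.pending_upserts:
            key, value = self.pending_upserts.pop(0)
            self.couchbase[key] = value


storage = {"member:1": {"name": "Ada"}}
reader = TieredProfileReader(storage.get)
first = reader.read("member:1")    # cache miss, served by storage
reader.flush_upserts()
second = reader.read("member:1")   # now served by the shared cache
```

Whether a Couchbase hit also populates the router's OHC is not stated in the source, so the sketch leaves the OHC untouched.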

The Write Path for Cache Synchronization

Maintaining cache effectiveness requires robust synchronization between the database and the cache. LinkedIn addresses this with a Cache Updater and a Cache Bootstrapper, both consuming Espresso change events from two distinct Brooklin streams:

- Brooklin Change Capture Stream: This stream is populated with individual database rows as they are committed to Espresso, enabling real-time updates to the cache.

- Brooklin Bootstrap Stream: This stream provides periodically generated database snapshots, used for initial cache population or full cache refreshes.

Cache Design Requirements for Scalability

For the new architecture to meet LinkedIn's stringent scalability demands, three critical requirements had to be fulfilled:

- Guaranteed Resilience in Case of Couchbase Failure: The cache must tolerate Couchbase failures so that traffic does not fall back directly to the database. LinkedIn implemented several measures:

  - Health Tracking: Each Espresso router instance monitors the health of all Couchbase buckets it can access by comparing request exceptions against a predefined threshold. Unhealthy buckets are temporarily taken out of rotation.

  - Data Replication: Profile data is stored with three replicas (one leader, two followers). Requests are normally served by the leader; if the leader becomes unavailable, a failover mechanism fetches the data from a follower replica.

  - Request Retries: Failed Couchbase requests are retried, because failures caused by transient router or network issues often resolve on their own.
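As a rough sketch of how health tracking and retries might fit together, the snippet below counts consecutive exceptions for one bucket, takes the bucket out of rotation for a cooldown period once a threshold is crossed, and retries transient failures. Everything here is an assumption for illustration: `BucketHealthTracker`, `get_with_retry`, the consecutive-exception rule, the cooldown, and the use of `ConnectionError` are hypothetical and are not taken from LinkedIn's code. Replica failover is not modeled.

```python
import time
from typing import Callable


class BucketHealthTracker:
    """Hypothetical per-router health tracker for a single cache bucket."""

    def __init__(self, max_exceptions: int, cooldown_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_exceptions = max_exceptions
        self.cooldown_seconds = cooldown_seconds
        self.clock = clock
        self.exception_count = 0
        self.unhealthy_until = 0.0

    def record_success(self) -> None:
        self.exception_count = 0

    def record_exception(self) -> None:
        self.exception_count += 1
        if self.exception_count > self.max_exceptions:
            # Threshold crossed: take the bucket out of rotation temporarily.
            self.unhealthy_until = self.clock() + self.cooldown_seconds
            self.exception_count = 0

    def is_healthy(self) -> bool:
        return self.clock() >= self.unhealthy_until


def get_with_retry(fetch, key, tracker, max_attempts=3):
    """Retry transient failures; return None if the bucket is out of rotation."""
    if not tracker.is_healthy():
        return None  # the caller decides the fallback
    for _ in range(max_attempts):
        try:
            value = fetch(key)
        except ConnectionError:
            tracker.record_exception()
            if not tracker.is_healthy():
                return None
            continue
        tracker.record_success()
        return value
    return None


# Usage with a fake clock and a fetch that fails once, then succeeds.
now = [0.0]
tracker = BucketHealthTracker(max_exceptions=2, cooldown_seconds=30.0,
                              clock=lambda: now[0])
calls = {"n": 0}


def flaky_fetch(key):
    calls["n"] += 1
    if calls["n"] == 1:
        raise ConnectionError("transient network issue")
    return {"key": key}


result = get_with_retry(flaky_fetch, "member:1", tracker)
```

Injecting the clock keeps the example deterministic; a real tracker would more likely use a sliding time window than a simple consecutive count.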

- High Availability of Cached Data: Cached data must remain continuously available, even during datacenter failovers. To achieve this, LinkedIn caches the entire profile dataset in every datacenter. This strategy is feasible given that a typical profile payload is only around 24KB. Data also has a finite Time-to-Live (TTL) to ensure expired records are eventually purged.

- Minimizing Data Divergence: With multiple systems (Espresso Router, Cache Bootstrapper, Cache Updater) writing to the Couchbase cache, race conditions could lead to inconsistencies between the cache and the database. LinkedIn engineered several solutions to prevent this:

  - Ordering of Updates: Cache updates for a given key are ordered using a System Change Number (SCN), a logical timestamp generated by Espresso for each committed database row. The system follows a Last-Writer-Wins (LWW) reconciliation strategy based on the SCN, where the record with the largest SCN supersedes older entries in Couchbase.

  - Periodic Bootstrapping: To prevent the cache from becoming 'cold,' LinkedIn periodically bootstraps the Couchbase cache via the Brooklin bootstrap stream. The bootstrapping period is set to be less than the data's TTL, so records are refreshed before they expire from the cache.

  - Handling Concurrent Modifications: To manage concurrent updates from routers and cache updaters, LinkedIn uses Couchbase's Compare-And-Swap (CAS) mechanism. The CAS value returned with the data on read must match the server's current CAS value at update time. A mismatch indicates a concurrent modification and triggers a retry or other appropriate handling.
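The SCN ordering and CAS check described above can be combined into a single write routine. The sketch below is illustrative only: `FakeCasCache` is an in-memory stand-in rather than the Couchbase SDK, and `upsert_if_newer`, `Entry`, `CasMismatch`, the retry count, and the choice to drop writes with an equal SCN are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional


class CasMismatch(Exception):
    """Raised when a key changed between the read and the write."""


@dataclass
class Entry:
    value: dict
    scn: int   # logical timestamp of the database row this entry reflects
    cas: int   # token that changes on every successful write


class FakeCasCache:
    """In-memory stand-in for a CAS-capable cache (not the Couchbase SDK)."""

    def __init__(self):
        self._entries = {}
        self._next_cas = 1

    def get(self, key: str) -> Optional[Entry]:
        entry = self._entries.get(key)
        if entry is None:
            return None
        return Entry(dict(entry.value), entry.scn, entry.cas)  # defensive copy

    def insert(self, key: str, value: dict, scn: int) -> None:
        if key in self._entries:
            raise CasMismatch(key)  # someone created the key first
        self._store(key, value, scn)

    def replace(self, key: str, value: dict, scn: int, cas: int) -> None:
        current = self._entries.get(key)
        if current is None or current.cas != cas:
            raise CasMismatch(key)  # concurrent modification detected
        self._store(key, value, scn)

    def _store(self, key: str, value: dict, scn: int) -> None:
        self._entries[key] = Entry(value, scn, self._next_cas)
        self._next_cas += 1


def upsert_if_newer(cache, key, value, scn, max_attempts=5) -> bool:
    """Last-writer-wins by SCN; returns True only if this write was applied."""
    for _ in range(max_attempts):
        current = cache.get(key)
        try:
            if current is None:
                cache.insert(key, value, scn)
            elif scn > current.scn:
                cache.replace(key, value, scn, current.cas)
            else:
                return False  # cached record is as new or newer; drop this write
            return True
        except CasMismatch:
            continue  # another writer got in first; re-read and compare again
    return False


cache = FakeCasCache()
applied_new = upsert_if_newer(cache, "member:1", {"headline": "v2"}, scn=20)
applied_stale = upsert_if_newer(cache, "member:1", {"headline": "v1"}, scn=10)
applied_newer = upsert_if_newer(cache, "member:1", {"headline": "v3"}, scn=30)
```

Because every writer, whether router, updater, or bootstrapper, would go through the same compare-then-swap loop, a stale write arriving late cannot overwrite a newer record in this model.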

Impact of the New Solution

The Espresso-integrated Couchbase cache enabled LinkedIn to support its rapidly growing member base while delivering substantial operational improvements:

- 90% reduction in the number of Espresso storage nodes.

- 10% annual cost savings on servicing member profile requests.

- 99th percentile latency dropped by 60.73%, from 31.6 ms to 12.41 ms.

- 99.9th percentile latency dropped by 63.66%, from 66.87 ms to 24.3 ms.

This solution shows how LinkedIn approached a large-scale data challenge with a design that is both high-performance and cost-effective. This article is based on the official LinkedIn engineering blog.