Streamline AI Development with Serverless MLflow on Amazon SageMaker

Amazon SageMaker AI with MLflow now offers a serverless capability, eliminating infrastructure management for ML experimentation. This enhances experiment tracking with automatic scaling and rapid instance creation, supporting generative AI development and seamless integration with SageMaker Pipelines for end-to-end MLOps.

Since its announcement in June 2024, Amazon SageMaker AI with MLflow has helped customers manage their machine learning (ML) and AI experimentation workflows using MLflow tracking servers. We are excited to announce that Amazon SageMaker AI with MLflow now features a serverless capability that removes the need to manage infrastructure. Experiment tracking becomes an on-demand experience that scales automatically, so there is no capacity planning to do. Teams can test ideas immediately, which encourages more iterative and exploratory development workflows.

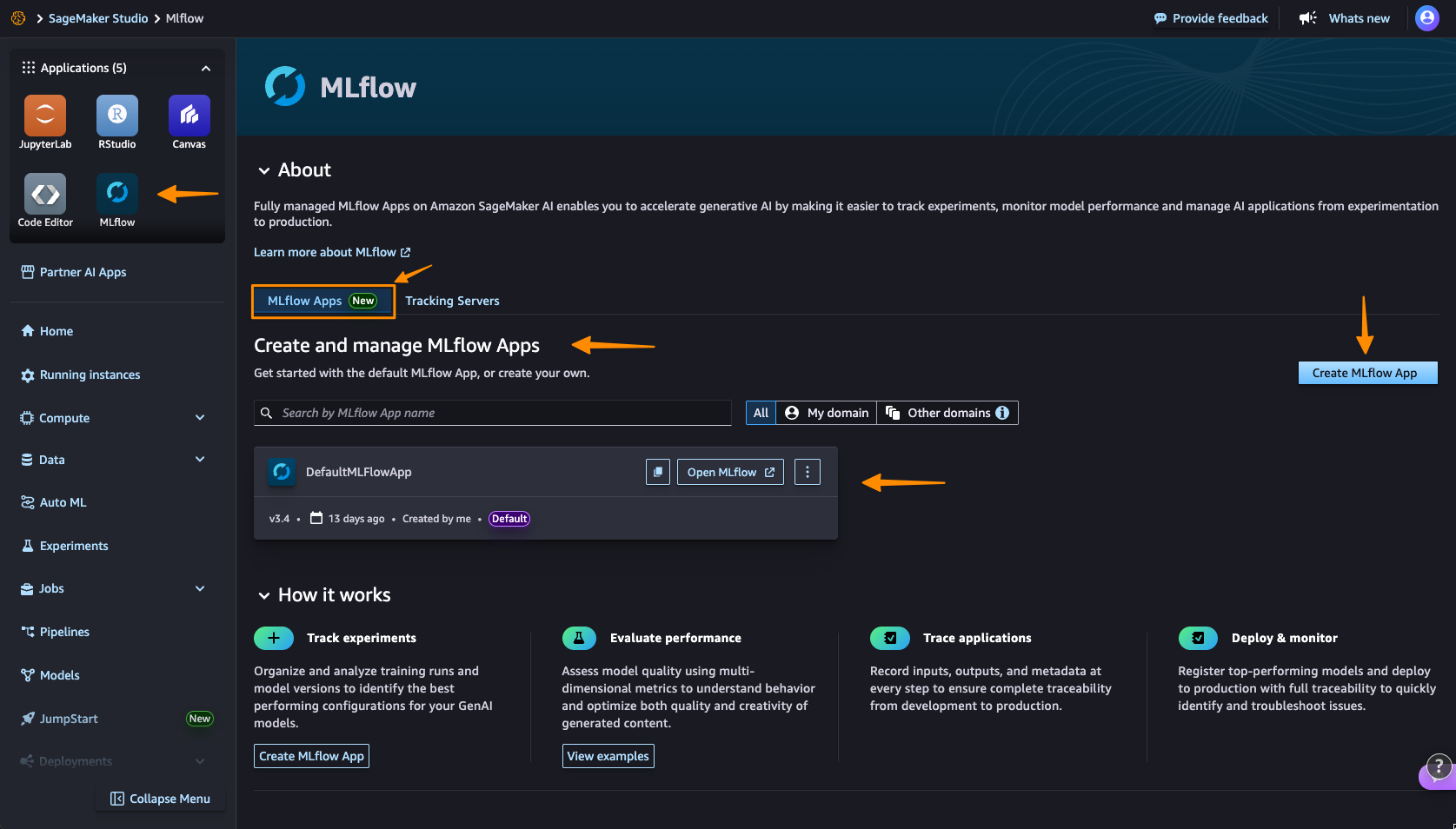

To create your first serverless MLflow instance, open Amazon SageMaker Studio and select the MLflow application. Note that the term 'MLflow Apps' replaces the earlier 'MLflow tracking servers' terminology, reflecting a streamlined, application-centric approach.

When the page opens, a default MLflow App is already available, so you can start experimenting right away. To create another app, select Create MLflow App and provide a name. An AWS Identity and Access Management (IAM) role and an Amazon Simple Storage Service (Amazon S3) bucket are preconfigured; change them under Advanced settings only if you need to.

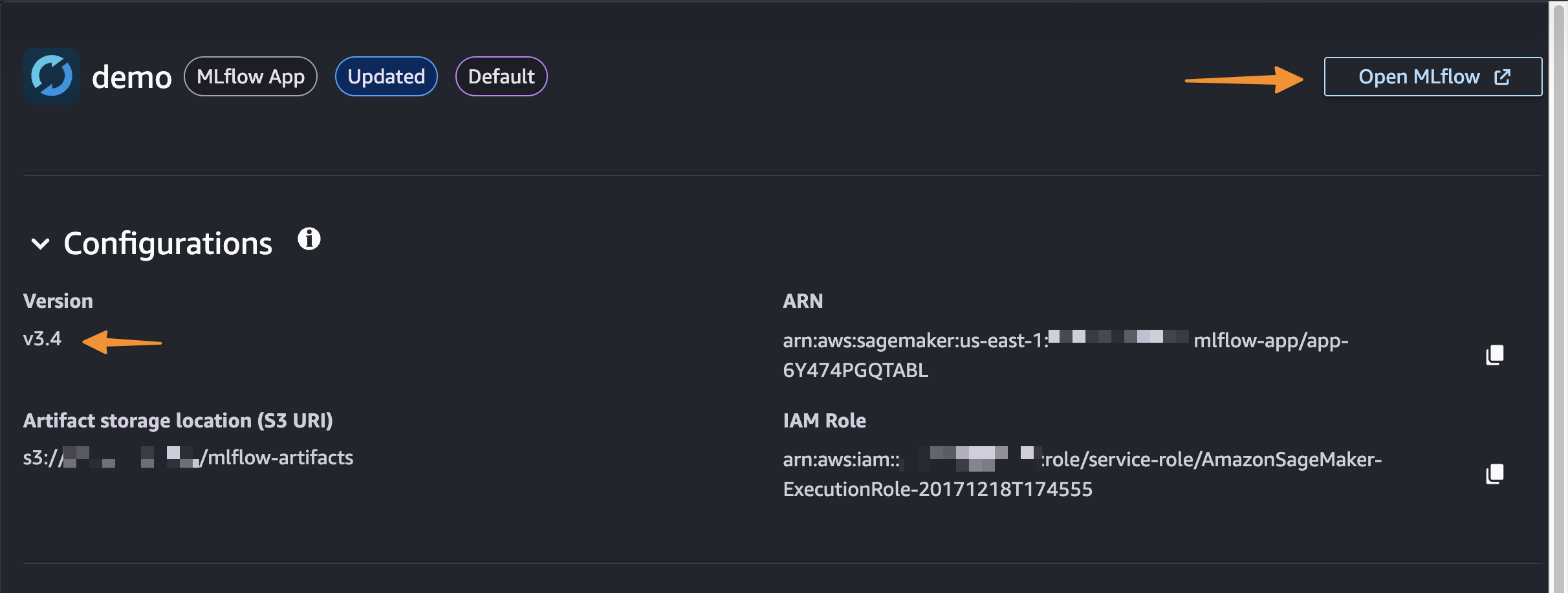

Creation is fast, completing in approximately 2 minutes. You can start experimenting almost immediately, without the infrastructure planning and wait times that traditionally slowed ML workflows.

After creation, you receive an MLflow Amazon Resource Name (ARN) that you use to connect from notebooks. There is no server size to choose and no capacity to plan, so you can focus on experimentation. For details on using the MLflow SDK, refer to 'Integrate MLflow with your environment' in the Amazon SageMaker Developer Guide.

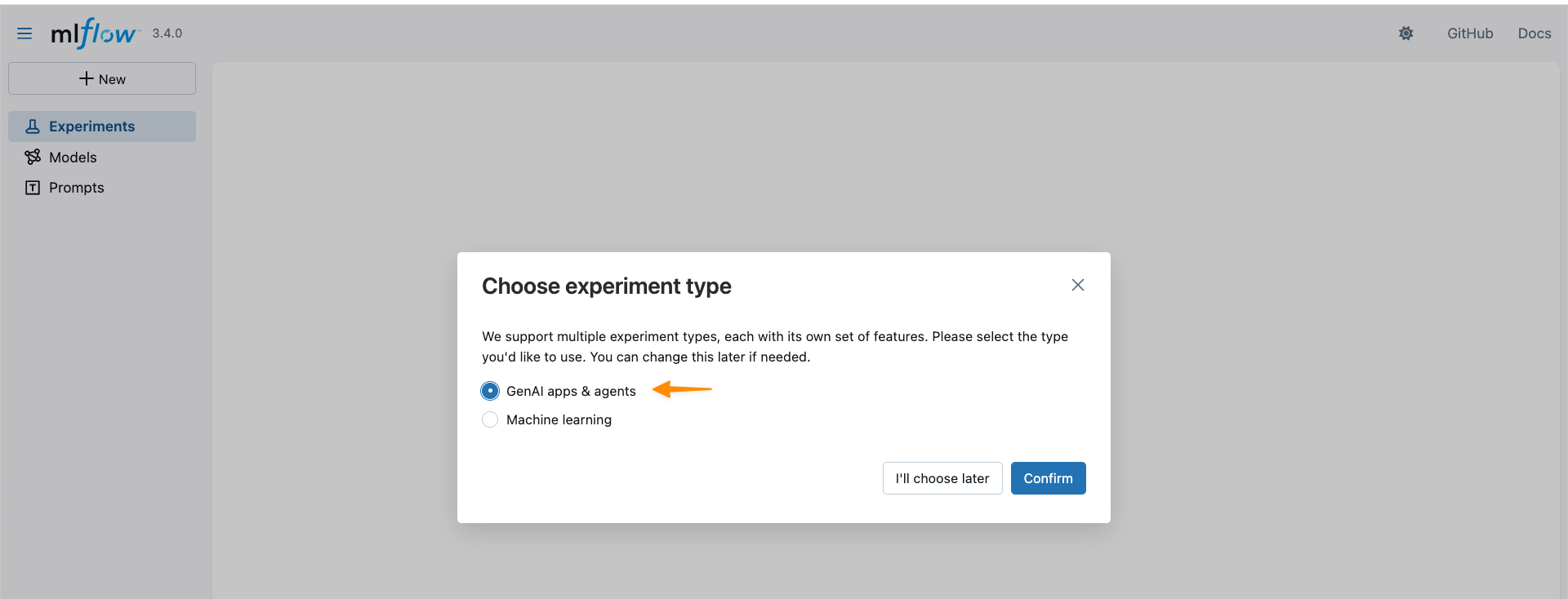
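As a rough illustration of connecting from a notebook, the sketch below passes the ARN to MLflow as the tracking URI and logs one small run. It assumes that `mlflow` and the `sagemaker-mlflow` plugin are installed and that the notebook's IAM role can access the app. The helper names, the experiment name, and the logged values are hypothetical and not taken from the SageMaker documentation.

```python
def parse_arn(arn: str) -> dict:
    """Split an ARN into its six standard colon-separated fields."""
    parts = arn.split(":", 5)
    if len(parts) != 6 or parts[0] != "arn":
        raise ValueError(f"not a valid ARN: {arn!r}")
    keys = ("prefix", "partition", "service", "region", "account_id", "resource")
    return dict(zip(keys, parts))


def log_demo_run(app_arn: str, experiment_name: str = "serverless-mlflow-demo") -> str:
    """Log one small run to the MLflow App identified by app_arn.

    Assumption: the ARN is accepted as the tracking URI when the
    sagemaker-mlflow plugin is installed.
    """
    # Imported lazily so parse_arn stays usable without MLflow installed.
    import mlflow

    parse_arn(app_arn)  # fail early on an obviously malformed value
    mlflow.set_tracking_uri(app_arn)
    mlflow.set_experiment(experiment_name)  # hypothetical experiment name
    with mlflow.start_run(run_name="first-run") as run:
        mlflow.log_param("learning_rate", 0.01)
        for step, loss in enumerate([0.9, 0.6, 0.4]):  # made-up values
            mlflow.log_metric("loss", loss, step=step)
    return run.info.run_id
```

In a notebook, calling `log_demo_run("<your MLflow App ARN>")` with the ARN shown in the console should create a run that appears in the MLflow UI.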

With support for MLflow 3.4, new capabilities are now available for generative AI development. MLflow Tracing, for instance, captures detailed execution paths, inputs, outputs, and metadata across the entire development lifecycle, facilitating efficient debugging in distributed AI systems.

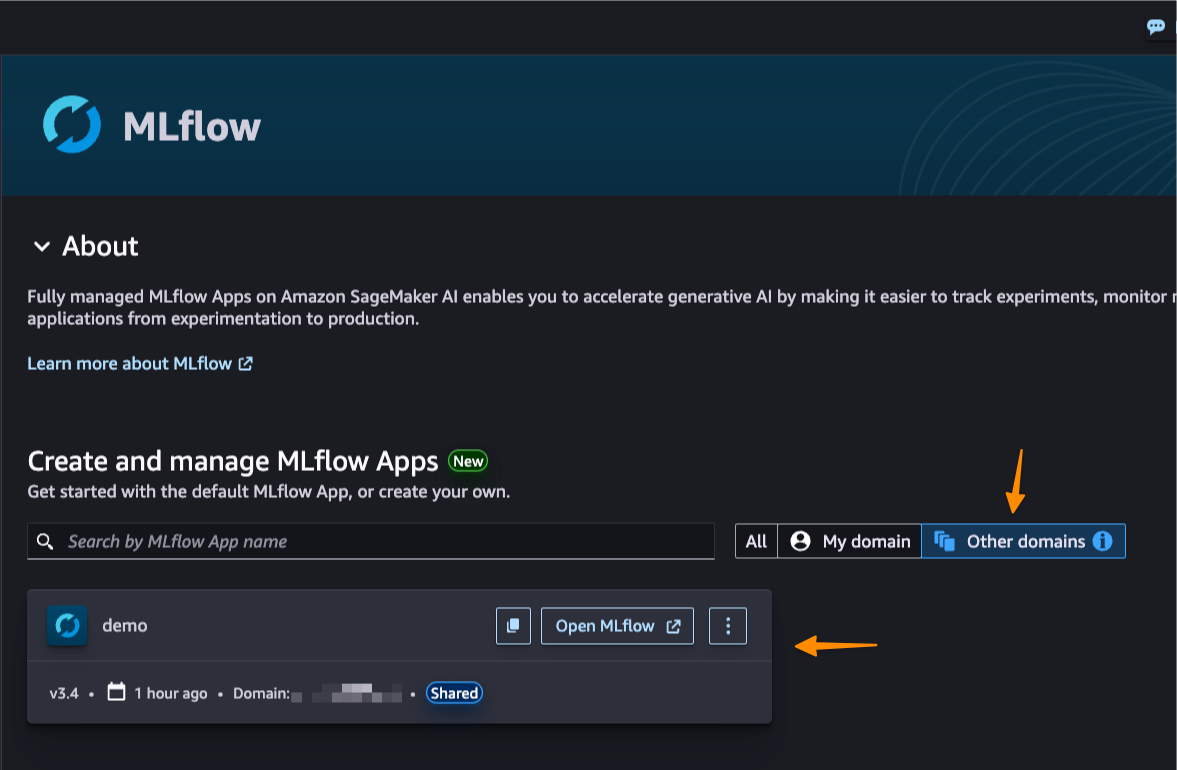
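To make the tracing idea concrete, here is a minimal sketch that records a parent span and a nested retrieval span with their inputs and outputs, using MLflow's `mlflow.start_span` context manager. The toy retriever, the corpus, and all function names are hypothetical stand-ins for a real generative AI application, and the sketch assumes a tracking URI has already been configured.

```python
def retrieve(query: str) -> list[str]:
    """Toy retriever: return documents that share a word with the query."""
    corpus = [
        "MLflow Apps are serverless",
        "Tracing records spans",
        "Pipelines orchestrate steps",
    ]
    words = set(query.lower().split())
    return [doc for doc in corpus if words & set(doc.lower().split())]


def summarize(docs: list[str]) -> str:
    """Toy 'generation' step: describe what was retrieved."""
    return f"{len(docs)} document(s) matched: " + "; ".join(docs)


def answer_with_tracing(query: str) -> str:
    """Run retrieve + summarize and record each stage as an MLflow span."""
    # Imported lazily so the toy functions above run without MLflow installed.
    import mlflow

    with mlflow.start_span(name="answer") as root:
        root.set_inputs({"query": query})
        # The nested context manager makes this span a child of "answer".
        with mlflow.start_span(name="retrieve", span_type="RETRIEVER") as span:
            span.set_inputs({"query": query})
            docs = retrieve(query)
            span.set_outputs({"docs": docs})
        result = summarize(docs)
        root.set_outputs({"answer": result})
    return result
```

Each call to `answer_with_tracing` should produce one trace whose span tree mirrors the nesting of the `with` blocks, which is what makes a slow or failing stage easier to locate.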

The serverless capability also enables cross-domain and cross-account access through AWS Resource Access Manager (AWS RAM) shares. Teams in different domains and AWS accounts can securely share MLflow instances, which makes collaboration across organizational boundaries easier.
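One way such a share might be created programmatically is with the AWS RAM `create_resource_share` API in boto3, sketched below. The share name, ARN, and account IDs are placeholders, and the sketch assumes the caller has the required AWS RAM permissions; check the SageMaker documentation for the exact sharing prerequisites.

```python
def build_share_request(
    share_name: str,
    app_arn: str,
    account_ids: list[str],
    allow_external: bool = False,
) -> dict:
    """Build keyword arguments for the AWS RAM create_resource_share call."""
    return {
        "name": share_name,
        "resourceArns": [app_arn],
        "principals": list(account_ids),
        # False restricts sharing to principals inside your AWS Organization.
        "allowExternalPrincipals": allow_external,
    }


def share_mlflow_app(share_name: str, app_arn: str, account_ids: list[str]) -> str:
    """Create the resource share and return its ARN."""
    # Imported lazily so the request builder can be used without boto3.
    import boto3

    ram = boto3.client("ram")
    response = ram.create_resource_share(
        **build_share_request(share_name, app_arn, account_ids)
    )
    return response["resourceShare"]["resourceShareArn"]
```

Keeping the request builder separate from the API call makes it easy to review exactly what would be shared before anything is created.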

Better Together: Pipelines Integration

Amazon SageMaker Pipelines integrates with MLflow. SageMaker Pipelines is a serverless workflow orchestration service built to automate machine learning operations (MLOps) and large language model operations (LLMOps), that is, the deployment, monitoring, and management of ML and LLM models in production. You can build, execute, and monitor repeatable, end-to-end AI workflows using either a drag-and-drop UI or the Python SDK.

When you run a pipeline, a default MLflow App is created automatically if one doesn't already exist. You define the experiment name, and the metrics, parameters, and artifacts that your code specifies are logged to the MLflow App. SageMaker AI with MLflow also integrates with established SageMaker AI model development features such as SageMaker AI JumpStart and Model Registry, supporting end-to-end workflow automation from data preparation to model fine-tuning.

Important Considerations

- Pricing: The new serverless MLflow capability is provided at no additional cost, though service limits apply.

- Availability: This feature is available across numerous AWS Regions, including US East (N. Virginia, Ohio), US West (N. California, Oregon), Asia Pacific (Mumbai, Seoul, Singapore, Sydney, Tokyo), Canada (Central), Europe (Frankfurt, Ireland, London, Paris, Stockholm), and South America (São Paulo).

- Automatic Upgrades: MLflow version upgrades happen automatically and in place, so you get the latest features without manual migration or compatibility work. The service currently supports MLflow 3.4, which includes capabilities such as enhanced tracing.

- Migration Support: For users looking to migrate from existing MLflow Tracking Servers (whether SageMaker AI-managed, self-hosted, or others) to serverless MLflow (MLflow Apps), the open-source mlflow-export-import tool is available.
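For the migration path, a minimal sketch of driving mlflow-export-import from Python follows. It assumes the tool's `export-all` and `import-all` console commands with `--output-dir` and `--input-dir` options, and that each command reads its server from the `MLFLOW_TRACKING_URI` environment variable; verify both against the tool's `--help` output for your installed version. The function names and the default directory are hypothetical.

```python
import os
import subprocess


def build_migration_commands(output_dir: str) -> tuple[list[str], list[str]]:
    """Return the export and import command lines for a full migration.

    Assumption: mlflow-export-import is installed and exposes these
    console commands and options.
    """
    export_cmd = ["export-all", "--output-dir", output_dir]
    import_cmd = ["import-all", "--input-dir", output_dir]
    return export_cmd, import_cmd


def migrate(source_uri: str, target_uri: str, output_dir: str = "mlflow-export") -> None:
    """Export everything from source_uri, then import it into target_uri."""
    export_cmd, import_cmd = build_migration_commands(output_dir)
    # Each command gets its own copy of the environment with the right server.
    subprocess.run(
        export_cmd,
        env={**os.environ, "MLFLOW_TRACKING_URI": source_uri},
        check=True,
    )
    subprocess.run(
        import_cmd,
        env={**os.environ, "MLFLOW_TRACKING_URI": target_uri},
        check=True,
    )
```

Here `source_uri` would be the existing tracking server's URI and `target_uri` the ARN of the new MLflow App. Running the export against a small test experiment first is a reasonable way to confirm the round trip before migrating everything.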

To get started with serverless MLflow, visit Amazon SageMaker Studio and create your first MLflow App. Serverless MLflow is also supported within SageMaker Unified Studio for even greater workflow flexibility.