The Persistent Challenge: Recreating the 1996 Space Jam Website with AI

An experiment explores Claude's struggle to pixel-perfectly recreate the 1996 Space Jam website from a screenshot. It highlights AI's spatial reasoning limits despite advanced tools and prompts.

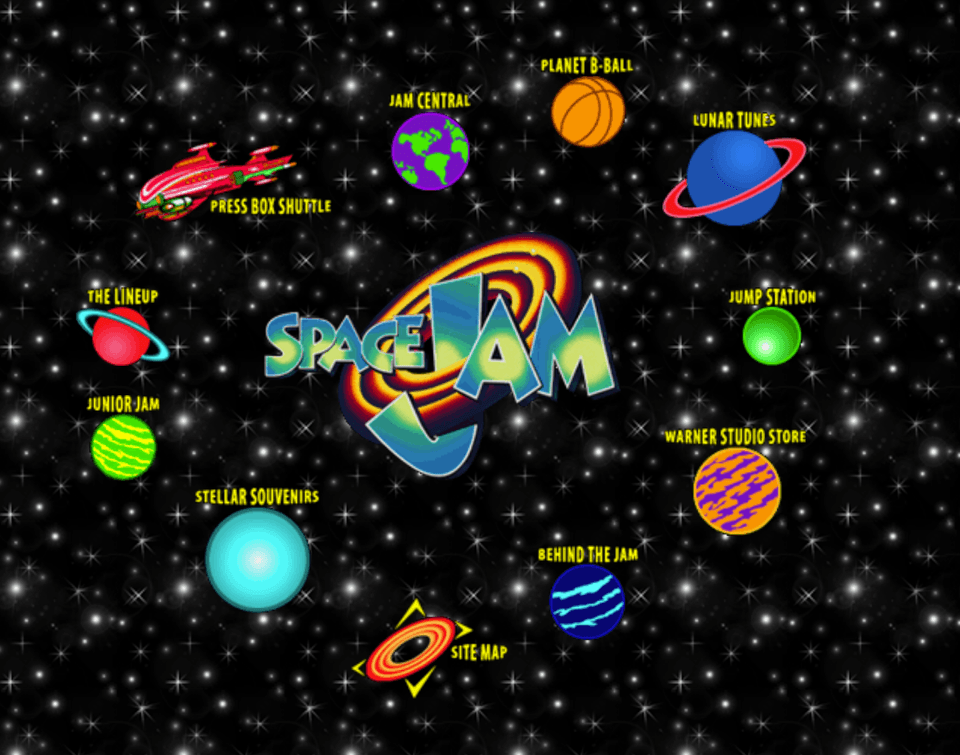

The attempt to recreate the 1996 Space Jam website using Claude, despite extensive prompting and tool integration, proved unsuccessful in achieving pixel-perfect accuracy. The project aimed to preserve the classic Warner Bros website, known for its distinct early web design, by having Claude generate its HTML and CSS from a screenshot and original assets.

Space Jam, 1996: A Web Relic

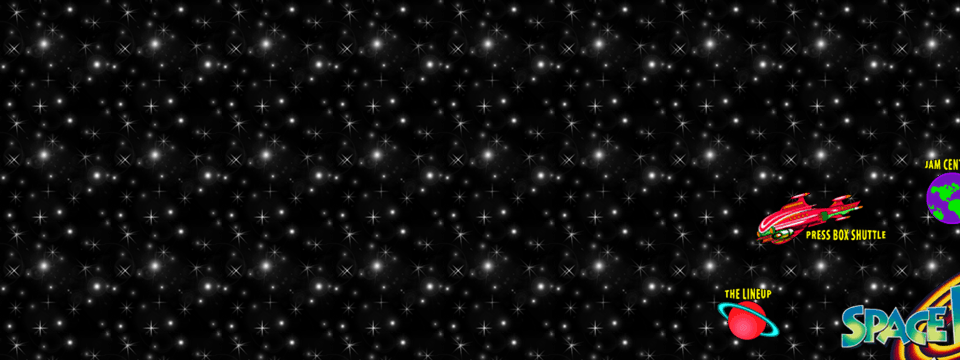

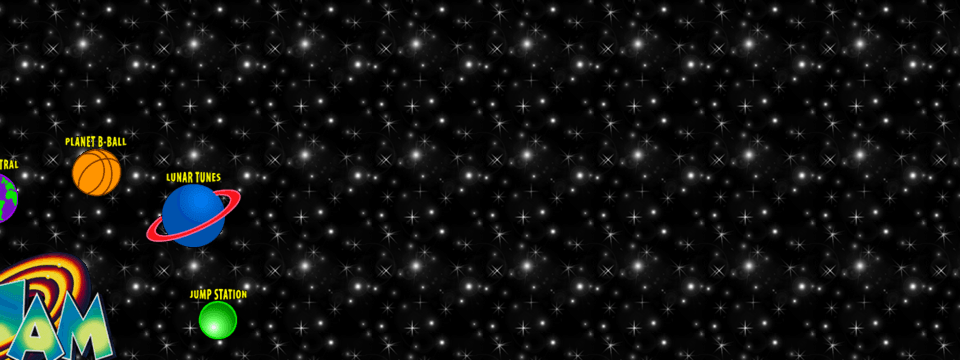

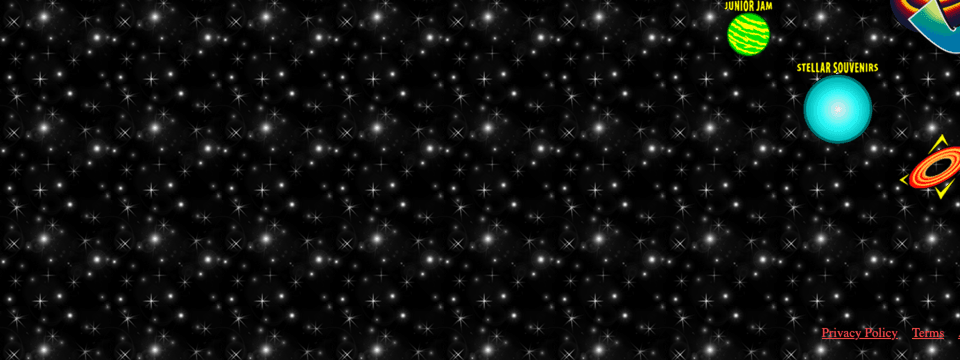

The 1996 Space Jam website, a digital artifact accompanying the movie release, remains online as a quintessential example of early web design. Its simple, colorful aesthetic, with planet icons arranged around a central logo over a tiling starfield GIF background, presents a unique challenge for modern AI. (This write-up originally described the page as using absolute positioning for every element; per reader feedback, the original site was actually built with tables.) The goal was to determine if Claude could faithfully replicate this site using only a screenshot and its constituent assets.

Setup for the Experiment

Claude was provided with:

- A full screenshot of the Space Jam 1996 landing page.

- A directory containing all raw image assets extracted from the original site.

To monitor Claude's internal processes and API calls, a man-in-the-middle proxy was deployed. This captured all interactions, including user prompts, Claude's responses, and tool invocations (Read, Write, Bash commands), generating a traffic.log for detailed analysis. The investigation used Claude Opus 4.1.

Part 1: Claude's Initial Attempt

Given the simplicity of the original site (a single HTML page, fixed element positions, under 200KB payload), initial expectations were high for Claude to accurately recreate it. The prompt read:

I am giving you:

1. A full screenshot of the Space Jam 1996 landing page.

2. A directory of raw image assets extracted from the original site

Your job is to recreate the landing page as faithfully as possible, matching the screenshot exactly.

Claude's initial output structurally resembled the original from a distance, with planets arranged around the logo and yellow button labels, but it failed to capture the precise orbital pattern: the arrangement came out more symmetrical and diamond-shaped than elliptical.

Despite this inaccuracy, Claude's self-assessment was positive, claiming successful recreation and detailing its efforts in "studying the orbital layout," "analyzing spacing relationships," and "positioning planets precisely." Log analysis confirmed Claude's awareness of the deliberate orbital arrangement but highlighted its inability to faithfully reproduce it.

Subsequent attempts to guide Claude by requiring it to explain its reasoning using a structured format (Perception Analysis, Spatial Interpretation, Reconstruction Plan) led to a paradoxical outcome. Claude's analysis often correctly identified discrepancies (e.g., "the orbit radius appears to be 220 pixels"), but these insights were not translated into accurate HTML generation. Claude's self-critique was surprisingly accurate, yet its observations never influenced subsequent iterations.

Further interrogation regarding precise pixel measurements revealed a core limitation:

- "Can you extract exact pixel coordinates?" "No."

- "Can you measure exact distances?" "No."

- "Confidence you can get within 5 pixels?" "15 out of 100."

This lack of precise measurement capability was a significant discovery. When asked if it would bet $1000 on its HTML matching the screenshot exactly, Claude responded, "Absolutely not."

Part 2: Enhancing Claude with Tools

To overcome the measurement deficit, a suite of tools was developed and provided to Claude:

- Grid overlays and a script to generate them on screenshots.

- Labeled pixel coordinate reference points.

- Color-difference comparison (to ignore background noise).

- A tool to screenshot Claude's index.html for iterative comparison.
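The color-difference comparison in the list above could look something like the following. This is a minimal sketch, not the author's actual tool: the function names, the nested-list pixel format, and the tolerance value of 30 are all assumptions chosen for illustration.

```python
def color_distance(a, b):
    """Euclidean distance between two (r, g, b) tuples."""
    return sum((ca - cb) ** 2 for ca, cb in zip(a, b)) ** 0.5


def diff_mask(reference, candidate, tolerance=30.0):
    """Return a 2D list of booleans, True where pixels differ noticeably.

    `reference` and `candidate` are rows of (r, g, b) tuples with the
    same dimensions. The tolerance (hypothetical value) lets small
    differences, such as starfield background noise, be ignored.
    """
    if len(reference) != len(candidate):
        raise ValueError("images must have the same height")
    mask = []
    for ref_row, cand_row in zip(reference, candidate):
        if len(ref_row) != len(cand_row):
            raise ValueError("images must have the same width")
        mask.append([color_distance(p, q) > tolerance
                     for p, q in zip(ref_row, cand_row)])
    return mask


def mismatch_ratio(mask):
    """Fraction of pixels flagged as different (0.0 for an empty mask)."""
    total = sum(len(row) for row in mask)
    flagged = sum(cell for row in mask for cell in row)
    return flagged / total if total else 0.0
```

A single mismatch ratio gives a numeric signal per iteration, which is harder to talk around than a visual side-by-side.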

Claude was given these tools and the grid screenshots, with instructions to stop guessing and use the provided coordinates directly. Claude did incorporate the grids into its workflow, but it treated them more as decoration than as a measuring instrument.
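A labeled grid of the kind described here can be sketched in a few lines. The version below emits an SVG overlay rather than drawing onto the screenshot itself, which is an assumption made to keep the example dependency-free; the function name, colors, and font size are hypothetical.

```python
def grid_overlay_svg(width, height, step=50):
    """Build an SVG string with grid lines every `step` pixels.

    Each line is labeled with its pixel coordinate so that positions
    can be read off directly instead of estimated.
    """
    parts = [
        f'<svg xmlns="http://www.w3.org/2000/svg" '
        f'width="{width}" height="{height}">'
    ]
    # Vertical lines, labeled with their x coordinate along the top edge.
    for x in range(0, width, step):
        parts.append(
            f'<line x1="{x}" y1="0" x2="{x}" y2="{height}" '
            f'stroke="red" stroke-width="1"/>'
        )
        parts.append(
            f'<text x="{x + 2}" y="10" font-size="9" fill="red">{x}</text>'
        )
    # Horizontal lines, labeled with their y coordinate along the left edge.
    for y in range(0, height, step):
        parts.append(
            f'<line x1="0" y1="{y}" x2="{width}" y2="{y}" '
            f'stroke="red" stroke-width="1"/>'
        )
        parts.append(
            f'<text x="2" y="{y + 10}" font-size="9" fill="red">{y}</text>'
        )
    parts.append("</svg>")
    return "\n".join(parts)
```

Calling it with `step=50`, `25`, or `5` would correspond to the progressively finer grids used in later iterations.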

Claude's subsequent attempt improved the orbit's shape, bringing it closer to the original, but the layout was still compressed around the Space Jam logo. Log analysis showed that Claude did read coordinates off the grids, identifying points like "Center at (961, 489)" and "Planet B-Ball at 'approximately (850, 165)'", yet those readings were approximate and did not carry through to the final layout. Claude even built a compare.html side-by-side viewer; despite Claude's confidence in it, the viewer did not improve accuracy.

Across multiple iterations with increasingly finer grids (50px, 25px, 5px), Claude made small, conservative adjustments. However, it consistently converged on an incorrect answer. The orbital radius, needing to expand from ~250px to 350-400px, remained trapped in a smaller, ever-compressing range. Claude's pronouncements of "Getting closer!" and "Nearly perfect now!" did not reflect the actual progression.
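To get a feel for the size of that error, the sketch below places items on an orbit around the center Claude reported, (961, 489), and measures how far a 250px orbit sits from a 350px one. The even angular spacing, the item count of 8, and the circular shape are illustrative assumptions only; the real page's orbit is elliptical and its planets are not necessarily evenly spaced.

```python
import math


def orbit_positions(cx, cy, rx, ry, count):
    """Return integer (x, y) centers for `count` items on an ellipse.

    Items are spaced at equal angles, starting from the top of the orbit.
    """
    points = []
    for i in range(count):
        angle = 2 * math.pi * i / count - math.pi / 2
        points.append((round(cx + rx * math.cos(angle)),
                       round(cy + ry * math.sin(angle))))
    return points


def max_offset(a, b):
    """Largest point-to-point distance between two position lists."""
    return max(math.dist(p, q) for p, q in zip(a, b))


CENTER = (961, 489)  # center coordinate taken from Claude's logs
compressed = orbit_positions(*CENTER, 250, 250, 8)  # roughly what Claude produced
target = orbit_positions(*CENTER, 350, 350, 8)      # lower bound of what was needed

print(f"largest per-planet error: {max_offset(compressed, target):.0f}px")
```

Under these assumptions every planet is off by roughly the full radius difference, an error far larger than the 5px grid spacing Claude was given.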

A final attempt involved splitting the reference screenshot into six zoomed regions, aiming to improve Claude's spatial precision in smaller chunks.

The prompt emphasized detailed study using a zoom inspection tool:

## INITIAL ANALYSIS - DO THIS FIRST

Before creating index.html, study the reference in detail using zoom inspection:

python3 split.py reference.png

This creates 6 files showing every detail

Despite Claude's logs indicating examination of these regions and making "precise observations," its actual outputs remained inaccurate. The issue compounded as Claude, when receiving its own screenshots as feedback, seemed to become overconfident in its self-generated output, treating it as "ground truth" and anchoring subsequent adjustments to its incorrect internal representation rather than the original layout.
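The contents of split.py are not shown, so the following is only a guess at the geometry involved: it computes crop boxes for six regions, assuming a 3-column by 2-row layout, and maps a coordinate observed inside one region back to the full screenshot. All names here are hypothetical.

```python
def split_boxes(width, height, cols=3, rows=2):
    """Return (left, top, right, bottom) crop boxes covering the image.

    Boxes are listed row by row. Integer division keeps the boxes
    contiguous even when the size is not evenly divisible.
    """
    boxes = []
    for r in range(rows):
        for c in range(cols):
            boxes.append((
                c * width // cols,
                r * height // rows,
                (c + 1) * width // cols,
                (r + 1) * height // rows,
            ))
    return boxes


def to_global(box, x, y):
    """Convert a coordinate inside a cropped region to full-image space."""
    left, top, _right, _bottom = box
    return (left + x, top + y)
```

The second function matters as much as the first: an observation made inside a cropped region is only useful if it is translated back into the coordinate system the HTML is written in.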

Part 3: Understanding Claude's Visual Limitations

A working hypothesis emerged: Claude's vision encoder might convert image blocks into semantic tokens (e.g., "near," "above," "roughly circular") rather than precise geometric data. This would explain its strong semantic understanding ("this is a planet," "these form a circle") alongside its consistent failure in precise execution. The Vision Transformer paper "An Image is Worth 16x16 Words" describes chopping an image into fixed-size patches and compressing the pixels within each patch into a single embedding.

If this architecture applies, planets spanning only two or three 16x16 patches would appear as fuzzy blobs. Claude would recognize them as planets but lack the fine-grained data for accurate positioning. This theory explains why tiny distance changes might not register significantly in patch embeddings.
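The arithmetic behind this hypothesis can be made concrete. The sketch below assumes a plain 16x16 patch grid aligned to the image origin, as in the Vision Transformer paper; whether Claude's encoder works this way is not confirmed, and the function names are illustrative.

```python
PATCH = 16  # patch edge length in pixels, per the ViT paper


def patch_index(x, y, patch=PATCH):
    """Return the (column, row) of the patch containing pixel (x, y)."""
    return (x // patch, y // patch)


def patches_covered(left, top, width, height, patch=PATCH):
    """Count the patches that a rectangle touches."""
    first_col, first_row = patch_index(left, top, patch)
    last_col, last_row = patch_index(left + width - 1,
                                     top + height - 1, patch)
    return (last_col - first_col + 1) * (last_row - first_row + 1)


# A 5px horizontal nudge from the logged "approximately (850, 165)"
# stays inside the same patch, so the patch grid alone cannot tell
# the two positions apart.
print(patch_index(850, 165), patch_index(855, 165))
```

Under this model, a position is only resolved to the nearest patch unless the embedding happens to preserve sub-patch detail, which is consistent with Claude's own estimate that it was unlikely to land within 5 pixels.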

A final experiment involved providing Claude with a 2x zoomed screenshot, hoping that larger representations of planets (10-15 patches instead of 2-3) would improve spatial understanding.

Despite clear instructions to maintain original proportions and relative spacing, Claude's output from the zoomed image replicated the layout at 200%, failing to scale it back to 100%.
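The missing step is small: every measurement taken from the zoomed image has to be divided by the zoom factor before it goes into the HTML. A minimal sketch follows, with a hypothetical function name and a made-up rectangle whose position corresponds to the logged "approximately (850, 165)" after doubling.

```python
def unzoom(rect, zoom=2.0):
    """Scale a (left, top, width, height) rectangle back to 100%.

    `rect` is measured on a screenshot enlarged by `zoom`; the result
    is rounded to whole CSS pixels.
    """
    return tuple(round(value / zoom) for value in rect)


# Measured on the 2x screenshot (size values are illustrative).
measured_at_2x = (1700, 330, 120, 120)
print(unzoom(measured_at_2x))
```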

The most plausible explanation for these results is that Claude was working with a very coarse version of the screenshot. The 16x16 patch idea helps explain what might be happening: Claude could describe the layout, but the fine-grained details were not present in its representation. The tension between describing the layout correctly and failing to reproduce it also looks different under this lens. Claude's explanations were always based on concepts it derived from the image ("this planet is above this one," "the cluster is to the left"), but the actual HTML had to be grounded in geometry it did not possess. The narration therefore sounded accurate while the code drifted off.

Conclusion and Future Directions

The task of faithfully recreating the 1996 Space Jam website from a screenshot and assets using Claude remains undefeated. This project highlights inherent limitations in current AI models' spatial reasoning and pixel-perfect replication capabilities, particularly when dealing with fine-grained visual details. The orbital pattern, seemingly simple to a human, proved to be a challenging benchmark for Claude.

Potential future approaches to tackle this problem include:

- Breaking the screen into smaller, independently processed quadrants to improve localized spatial precision.

- Exploring advanced prompt engineering techniques to unlock or simulate spatial reasoning ("You are a CSS grid with perfect absolute positioning knowledge…").

- Developing and integrating a dedicated zoom tool for Claude, enabling it to understand and utilize varying levels of visual detail effectively.

For now, the 1996 Space Jam website stands as a testament to classic web design and a humbling reminder that some seemingly simple tasks still pose significant challenges for even advanced AI. Its irreproducible perfection serves as an interesting benchmark for the evolution of AI's visual and generative capabilities.