Uber's Finch: A Conversational AI Agent for Real-time Financial Analysis

Explore Uber's Finch, a conversational AI agent transforming financial analysis by providing real-time data access in Slack. Learn how it eliminates manual data retrieval, automates SQL queries, and enhances decision-making for finance teams at Uber's scale.

At Uber's operational scale, fast and accurate access to financial data is essential for decision-making, and delays in report generation hold up decisions that touch millions of transactions worldwide. The Uber Engineering Team recognized that finance professionals were spending considerable time simply retrieving data before they could begin their analysis.

Historically, financial analysts gathered figures from multiple platforms, including Presto, IBM Planning Analytics, Oracle EPM, and Google Docs. This fragmented approach created significant bottlenecks: manual searches, a higher risk of outdated or inconsistent data, and the need to write complex SQL queries. Writing those queries required deep knowledge of data structures and constant reference to documentation, which made the process slow and error-prone. Analysts often relied on the data science team for data retrieval, which introduced delays of hours or even days, by which time the insights could already be stale. For a fast-moving company, such delays hinder informed, real-time financial decisions.

To address these challenges, the Uber Engineering Team embarked on creating a secure, real-time financial data access layer seamlessly integrated into the finance teams' daily workflows. Their vision was clear: empower analysts to ask questions in plain language and receive answers within seconds, bypassing the need to navigate multiple platforms or write SQL. This ambition led to the development of Finch, Uber’s conversational AI data agent. Finch integrates financial intelligence directly into Slack, the company's primary communication platform. This article explores the development and underlying mechanics of Finch.

What is Finch?

Finch, developed by the Uber Engineering Team, is a conversational AI data agent embedded within Slack, designed to resolve long-standing issues of slow and complex data access. Instead of logging into various systems or crafting lengthy SQL queries, finance team members can simply pose questions in natural language, and Finch handles the rest.

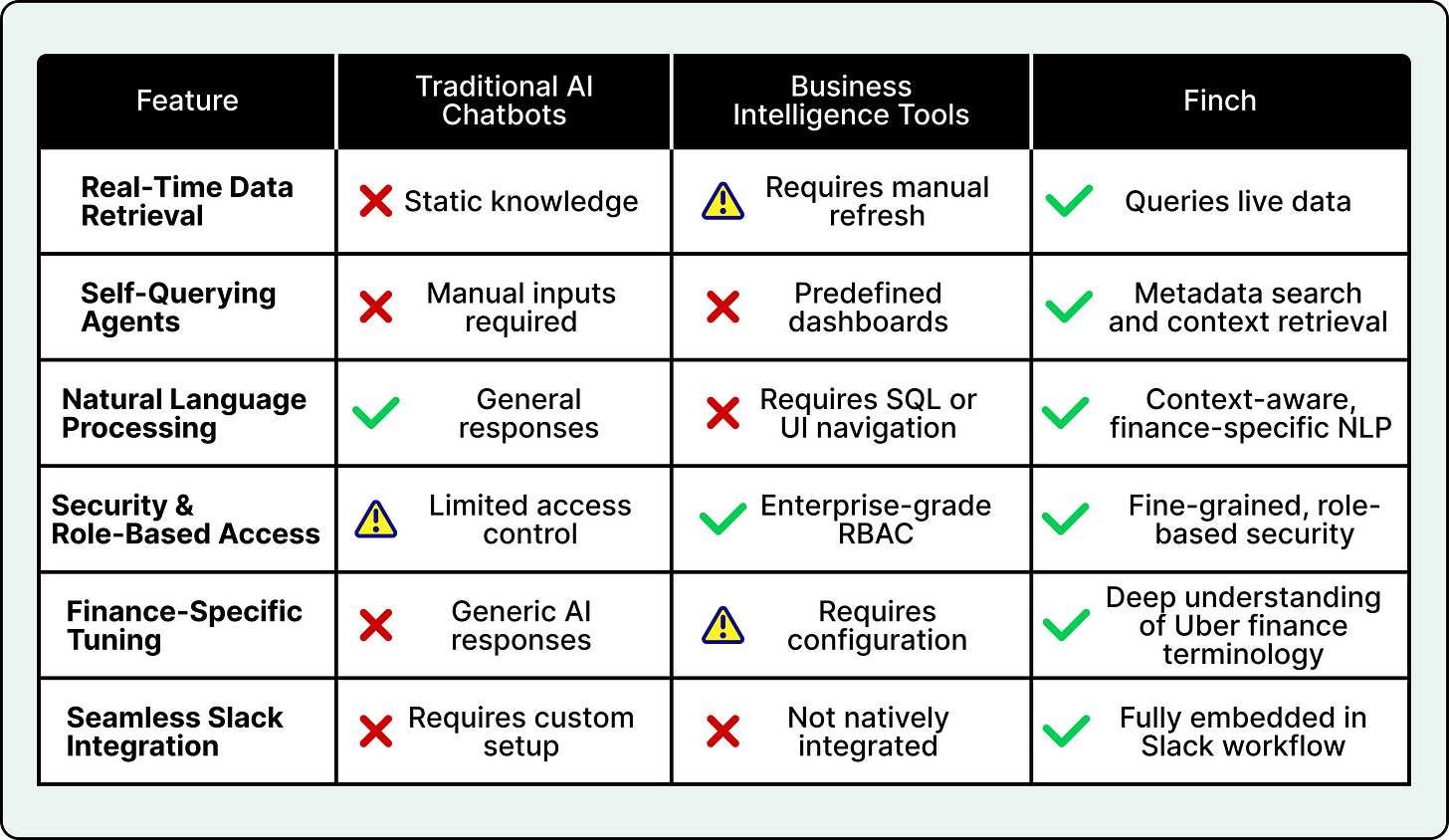

A comparison with other AI finance tools highlights Finch's unique capabilities:

At its core, Finch makes financial data retrieval as intuitive as messaging a colleague. When a user asks a question, Finch translates the natural language request into a structured SQL query: it identifies the correct data source, applies the necessary filters, verifies the user's permissions through role-based access control (RBAC) so that sensitive financial information stays protected, and retrieves the latest financial data in real time. The results are then presented back in Slack in a clear, readable format. For larger datasets, Finch can automatically export the information to Google Sheets, so users can continue their analysis without additional steps.

For instance, an analyst might ask: "What was the GB value in US&C in Q4 2024?" (here, GB stands for gross bookings). Finch locates the relevant table, constructs and executes the appropriate SQL query, and delivers the answer directly within Slack. The analyst gets a ready-to-use answer in seconds, rather than spending hours on manual searching, query writing, or waiting for other teams.
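The permission step can be pictured as a gate that runs before any generated SQL is executed. The following is a minimal sketch, assuming a hypothetical mapping from roles to the data marts they may read. The role names, table names, and the `authorize` and `run_query` helpers are illustrative and are not taken from Uber's implementation.

```python
# Hypothetical role -> readable data marts mapping (illustrative names only).
ROLE_GRANTS = {
    "finance_analyst": {"gross_bookings_mart"},
    "finance_lead": {"gross_bookings_mart", "payroll_mart"},
}


class AccessDenied(Exception):
    """Raised when none of the user's roles may read the requested table."""


def authorize(user_roles, table):
    """Return normally if any role grants read access to `table`, else raise."""
    for role in user_roles:
        if table in ROLE_GRANTS.get(role, set()):
            return
    raise AccessDenied(f"no role in {sorted(user_roles)} may read {table}")


def run_query(user_roles, table, execute):
    """Check permissions first, then hand off to the injected executor."""
    authorize(user_roles, table)
    return execute(table)
```

Running the check before execution means a denied request never reaches the database, whatever SQL the model produced.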

Finch Architecture Overview

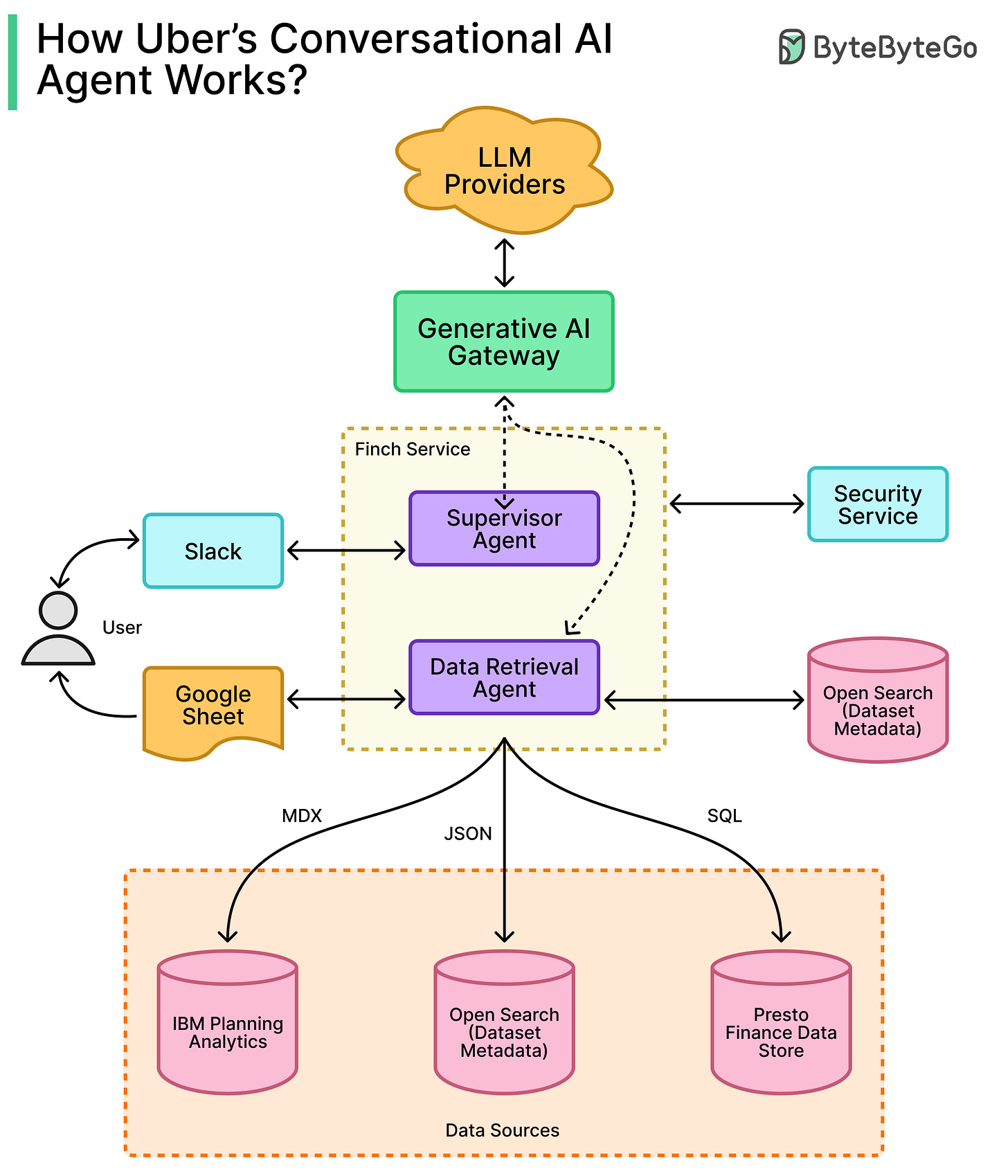

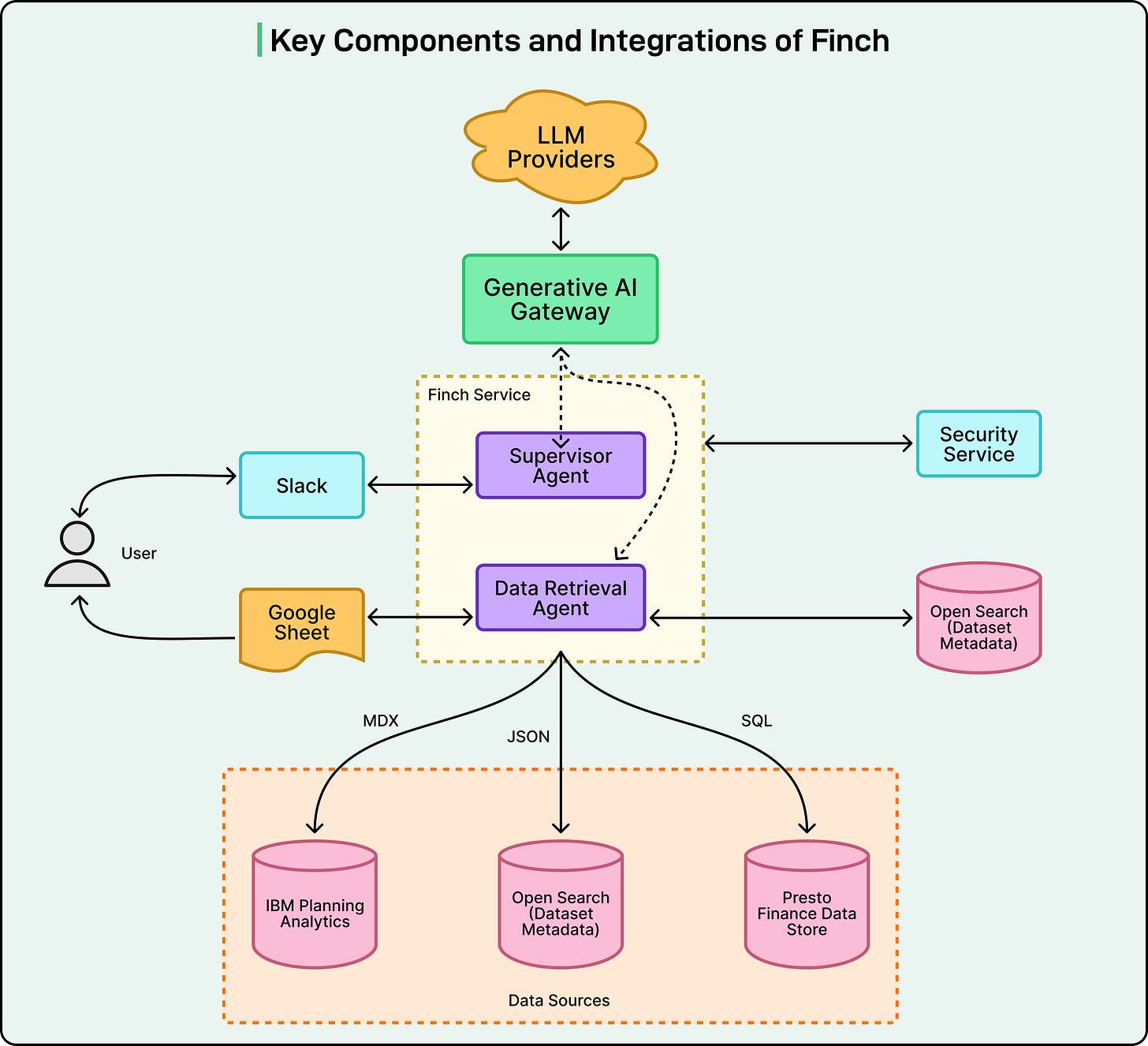

Finch's design prioritizes modularity, security, and accuracy in large language model (LLM) query generation and execution. The Uber Engineering Team engineered the system with independent yet smoothly integrated components, facilitating scalability, maintenance, and continuous improvement.

The key components of Finch's architecture are illustrated below:

At its foundation, Finch utilizes a data layer composed of curated, single-table data marts containing essential financial and operational metrics. This approach optimizes for speed and clarity by avoiding complex queries on large, multi-join databases.

To enhance natural language understanding, a semantic layer built on OpenSearch sits atop these data marts. This layer stores natural language aliases for column names and their values. For example, "US&C" can be mapped to the correct database column and value. The aliases enable fuzzy matching, which lets Finch interpret varied phrasings of the same question and improves the precision of WHERE clauses in generated SQL queries, a common weak point for LLM-based data agents.
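The alias idea can be illustrated without OpenSearch. The sketch below swaps in Python's standard-library `difflib` for the fuzzy-matching step, and the alias table, the column name `region`, and the stored values are all assumptions made for the example rather than Uber's actual schema.

```python
from difflib import get_close_matches

# Hypothetical alias index: phrase -> (column, stored value).
ALIASES = {
    "us&c": ("region", "US_AND_CANADA"),
    "us and canada": ("region", "US_AND_CANADA"),
    "latam": ("region", "LATAM"),
    "latin america": ("region", "LATAM"),
}


def resolve_alias(phrase, cutoff=0.8):
    """Map a user phrase to (column, value), tolerating small spelling variations."""
    key = phrase.strip().lower()
    if key in ALIASES:
        return ALIASES[key]
    # Fall back to the closest known alias, if any is similar enough.
    matches = get_close_matches(key, list(ALIASES), n=1, cutoff=cutoff)
    return ALIASES[matches[0]] if matches else None
```

Resolving the phrase to a concrete column and value before the SQL is written means the WHERE clause uses the stored value rather than whatever spelling the user typed.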

Finch’s architecture integrates several key technologies:

- Generative AI Gateway: Uber's internal infrastructure provides access to various large language models, both self-hosted and third-party. This modularity allows for model swapping or upgrades without extensive system changes.

- LangChain and LangGraph: These frameworks orchestrate specialized agents within Finch, such as the SQL Writer Agent and the Supervisor Agent. LangGraph coordinates the sequential interaction of these agents to understand a question, plan the query, and deliver the result.

- OpenSearch: This technology serves as the backbone for Finch's metadata indexing, mapping natural language terms to the database schema. This significantly boosts reliability in handling real-world language variations.

- Slack SDK and Slack AI Assistant APIs: These enable direct integration with Slack, facilitating real-time status updates, suggested prompts, and a seamless chat-like interface for analysts.

- Google Sheets Exporter: For large datasets, Finch automatically exports results to Google Sheets, streamlining analysis by eliminating manual data transfer.
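The model-swapping property of the gateway can be sketched as a thin registry that hides which backend serves a request. This is only an illustration under stated assumptions: the `ModelGateway` class, its methods, and the stub backends are hypothetical and do not describe the interface of Uber's Generative AI Gateway.

```python
from typing import Callable, Dict, Optional

# In this sketch a "model" is just a function from prompt text to completion text.
ModelFn = Callable[[str], str]


class ModelGateway:
    """Routes completion requests to a named backend behind one interface."""

    def __init__(self) -> None:
        self._backends: Dict[str, ModelFn] = {}
        self._default: Optional[str] = None

    def register(self, name: str, backend: ModelFn, default: bool = False) -> None:
        self._backends[name] = backend
        # The first registered backend becomes the default unless overridden.
        if default or self._default is None:
            self._default = name

    def complete(self, prompt: str, model: Optional[str] = None) -> str:
        name = model or self._default
        if name is None or name not in self._backends:
            raise KeyError(f"unknown model: {name!r}")
        return self._backends[name](prompt)


# Stub backends standing in for a self-hosted and a third-party model.
gateway = ModelGateway()
gateway.register("self_hosted_stub", lambda p: f"[self-hosted] {p}")
gateway.register("third_party_stub", lambda p: f"[third-party] {p}", default=True)
```

Callers depend only on `complete`, so changing the default backend is a one-line registration change rather than an edit to every agent.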

Finch Agentic Workflow

Finch's sophisticated orchestration pipeline defines how its components collaborate to process user queries, with each agent assigned a distinct role.

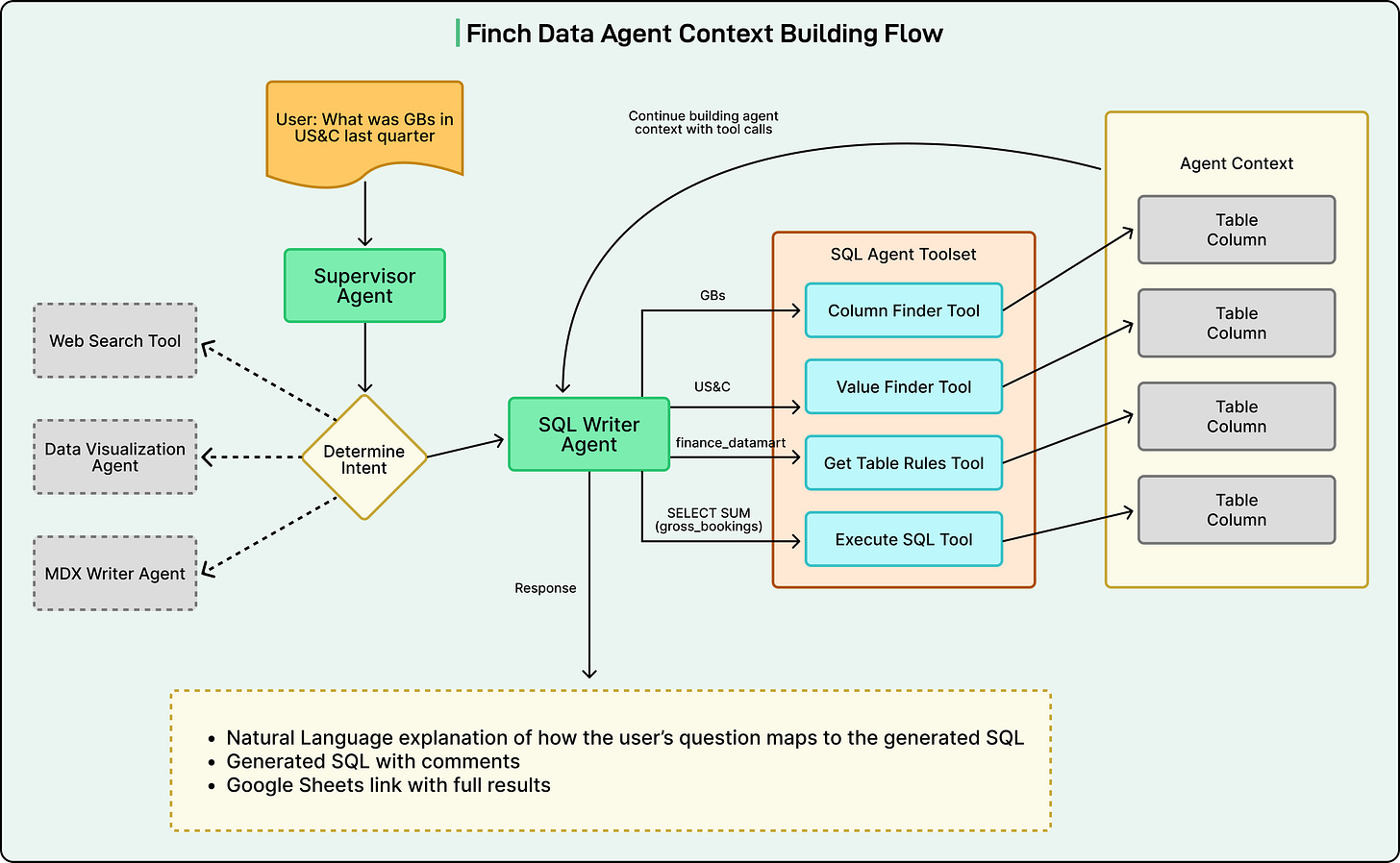

The workflow begins when a user submits a question in Slack, such as "What were the GB values for US&C in Q4 2024?" The Supervisor Agent receives this input, identifies the request type, and routes it to the appropriate sub-agent (e.g., the SQL Writer Agent for data retrieval).
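The routing step can be sketched in plain Python. The keyword-based classifier below is a deliberately simple stand-in, since a real supervisor would most likely ask an LLM to identify the request type. The function names and intent labels are assumptions for illustration, and the sketch does not use LangGraph.

```python
def classify_intent(question):
    """Toy intent classifier; a stand-in for an LLM-based decision."""
    text = question.lower()
    if any(word in text for word in ("policy", "document", "memo")):
        return "document_lookup"
    return "data_retrieval"


def sql_writer_agent(question):
    return f"sql_writer handled: {question}"


def document_reader_agent(question):
    return f"document_reader handled: {question}"


# Routing table from intent label to the sub-agent that handles it.
SUB_AGENTS = {
    "data_retrieval": sql_writer_agent,
    "document_lookup": document_reader_agent,
}


def supervisor(question):
    """Identify the request type, then delegate to the matching sub-agent."""
    intent = classify_intent(question)
    return SUB_AGENTS[intent](question)
```

Keeping the routing table separate from the classifier means a new sub-agent can be added by registering one more entry.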

The SQL Writer Agent then retrieves metadata from OpenSearch, which provides mappings between natural language terms and database elements. This critical step enables Finch to accurately interpret terms like "US&C" or "gross bookings" without requiring the user to know exact column names or data structures.

Next, Finch constructs the SQL query. The SQL Writer Agent leverages the metadata to build the correct query against the curated single-table data marts, ensuring proper filters and data sources are applied. Once formulated, the query is executed.
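As a rough illustration of building a query from metadata against a single-table mart, the sketch below uses an in-memory SQLite database in place of Uber's query engine. The table name, column names, quarter format, and all numbers are made up for the example. Column names are taken from an allowlist and values are bound as parameters, which is one common way to keep generated SQL safe.

```python
import sqlite3

# Hypothetical metadata for one curated, single-table data mart.
MART = {
    "table": "finance_mart",
    "metrics": {"gross bookings": "gross_bookings"},
    "filters": {"region", "quarter"},
}


def build_query(metric_phrase, filters):
    """Return (sql, params) for a SUM over one metric with equality filters."""
    column = MART["metrics"][metric_phrase]  # KeyError for unknown metrics
    unknown = set(filters) - MART["filters"]
    if unknown:
        raise ValueError(f"unsupported filter columns: {sorted(unknown)}")
    # Identifiers come from the allowlist above; values are bound as parameters.
    names = sorted(filters)
    sql = f"SELECT SUM({column}) FROM {MART['table']}"
    if names:
        sql += " WHERE " + " AND ".join(f"{name} = ?" for name in names)
    return sql, [filters[name] for name in names]


# Toy in-memory table with made-up numbers, only to show that the query runs.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE finance_mart (region TEXT, quarter TEXT, gross_bookings REAL)")
conn.executemany(
    "INSERT INTO finance_mart VALUES (?, ?, ?)",
    [
        ("US_AND_CANADA", "2024-Q4", 10.0),
        ("US_AND_CANADA", "2024-Q4", 5.0),
        ("LATAM", "2024-Q4", 7.0),
    ],
)
sql, params = build_query(
    "gross bookings", {"region": "US_AND_CANADA", "quarter": "2024-Q4"}
)
total = conn.execute(sql, params).fetchone()[0]
```

Because the mart is a single table, the generated statement needs no joins, which keeps both the generation step and the review of its output simple.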

During this background process, Finch provides live feedback via a Slack callback handler. Users receive real-time status messages like "identifying data source," "building SQL," or "executing query," offering transparency into Finch's operations.
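A callback of this kind can be sketched as an object that forwards stage names to a message sink. In the hypothetical sketch below, a plain Python list stands in for the Slack thread, and the `StatusReporter` class, the message wording, and the placeholder stages are assumptions rather than Finch's actual handler.

```python
from typing import Callable, List


class StatusReporter:
    """Forwards pipeline stage names to a message sink as each stage starts."""

    def __init__(self, send: Callable[[str], None]) -> None:
        self._send = send

    def on_stage_start(self, stage: str) -> None:
        self._send(f"Finch is {stage}...")


def answer_question(question: str, reporter: StatusReporter) -> str:
    """Run placeholder stages, reporting each one before doing its work."""
    reporter.on_stage_start("identifying data source")
    table = "finance_mart"  # placeholder result
    reporter.on_stage_start("building SQL")
    sql = f"SELECT 1 FROM {table}"  # placeholder query
    reporter.on_stage_start("executing query")
    return f"ran: {sql}"


# A list stands in for the Slack thread so the sketch runs anywhere.
posted: List[str] = []
result = answer_question("What was GB in US&C?", StatusReporter(posted.append))
```

Injecting the sink as a callable keeps the pipeline independent of the messaging client, so the same stages can be exercised in tests without a Slack connection.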

Finally, upon query execution, the results are delivered directly to Slack in a structured, readable format. If the dataset is too large, Finch automatically exports it to Google Sheets and shares the link. Users can also ask follow-up questions, such as "Compare to Q4 2023," and Finch will refine the conversational context to provide updated results.
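The choice between an inline reply and an export can be sketched as a simple size check. The row threshold below is an assumption, since the source does not say what counts as too large, and the `export` argument is a hypothetical injected function standing in for the Google Sheets exporter.

```python
# Hypothetical cutoff; the source does not state the real threshold.
MAX_INLINE_ROWS = 20


def format_inline(columns, rows):
    """Render rows as a simple pipe-separated text table."""
    lines = [" | ".join(columns)]
    lines += [" | ".join(str(cell) for cell in row) for row in rows]
    return "\n".join(lines)


def deliver(columns, rows, export):
    """Reply inline for small results; otherwise export and reply with a link."""
    if len(rows) <= MAX_INLINE_ROWS:
        return format_inline(columns, rows)
    link = export(columns, rows)  # injected exporter, e.g. a spreadsheet writer
    return f"Result has {len(rows)} rows; exported to {link}"
```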

The data agent’s context building flow is shown below:

Finch’s Accuracy and Performance Evaluation

For Finch to be effective at Uber's scale, it must be both accurate and fast. A conversational data agent that returns incorrect or slow answers would quickly erode trust among financial analysts. The Uber Engineering Team therefore built multiple layers of testing and optimization into Finch so that performance holds up as the system grows in complexity. The work falls into two main areas: continuous evaluation and performance optimization.

Continuous Evaluation

Uber employs continuous evaluation to ensure every part of Finch functions as expected:

- Sub-agent evaluation: Agents like the SQL Writer and Document Reader are tested against "golden queries"—trusted outputs for common use cases. This comparison identifies any drop in accuracy.

- Supervisor Agent routing accuracy: The Supervisor Agent's decision-making process for routing user requests to sub-agents is rigorously tested to prevent incorrect routing (e.g., confusing data retrieval with document lookup tasks).

- End-to-end validation: Simulating real-world queries ensures the entire pipeline operates correctly from input to output, catching issues not apparent during isolated component testing.

- Regression testing: Historical queries are re-run to confirm Finch consistently returns correct results, detecting accuracy drift before model or prompt updates are deployed.
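A golden-query check of the kind listed above might look like the following sketch. It assumes the golden set pairs each question with a trusted SQL string and compares after normalizing case and whitespace. That comparison rule, the toy golden set, and the stub agent are assumptions for illustration; a production harness could just as well compare query results instead of query text.

```python
def normalize(sql):
    """Collapse whitespace and case so formatting differences are ignored.

    Note: lowercasing also affects string literals, which is acceptable
    for this sketch but too coarse for a real harness.
    """
    return " ".join(sql.lower().split()).rstrip("; ")


def evaluate(agent, golden_set):
    """Return (accuracy, failures) over (question, expected_sql) pairs."""
    failures = []
    for question, expected in golden_set:
        actual = agent(question)
        if normalize(actual) != normalize(expected):
            failures.append((question, expected, actual))
    accuracy = 1 - len(failures) / len(golden_set)
    return accuracy, failures


# Toy golden set and a stub agent that gets one of the two cases wrong.
GOLDEN = [
    ("total gb", "SELECT SUM(gross_bookings) FROM finance_mart"),
    ("row count", "SELECT COUNT(*) FROM finance_mart"),
]
STUB_ANSWERS = {
    "total gb": "select sum(gross_bookings)\n  from finance_mart;",
    "row count": "SELECT COUNT(1) FROM finance_mart",
}
accuracy, failures = evaluate(STUB_ANSWERS.get, GOLDEN)
```

Re-running the same harness on historical questions before a model or prompt change ships is what turns it into a regression test: a drop in `accuracy` blocks the rollout.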

Performance Optimization

Finch is engineered for rapid response times, even under high query volumes. The Uber Engineering Team optimized the system by:

- Minimizing database load: Efficient SQL queries reduce the burden on databases.

- Parallel processing: Utilizing multiple sub-agents that work in parallel, rather than a single blocking process, significantly reduces latency.

- Pre-fetching metrics: Frequently used metrics are pre-fetched and cached, leading to near-instant responses for common queries.
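Two of these ideas, concurrent sub-agents and cached metrics, can be sketched with the standard library. The sub-agent labels, delays, and metric names below are invented for the example, and `functools.lru_cache` stands in for whatever caching layer Finch actually uses.

```python
import asyncio
from functools import lru_cache

FETCH_CALLS = []  # records cache misses so the caching effect is observable


@lru_cache(maxsize=128)
def fetch_metric(name):
    """Stand-in for an expensive lookup; the body runs once per distinct name."""
    FETCH_CALLS.append(name)
    return f"value-of-{name}"


def prefetch(names):
    """Warm the cache for metrics expected to be requested often."""
    for name in names:
        fetch_metric(name)


async def sub_agent(label, delay):
    """Simulated sub-agent whose work is dominated by waiting on I/O."""
    await asyncio.sleep(delay)
    return f"{label} done"


async def run_parallel():
    # gather() runs the coroutines concurrently and preserves argument order.
    return await asyncio.gather(
        sub_agent("metadata lookup", 0.02),
        sub_agent("permission check", 0.02),
    )


prefetch(["gross_bookings"])
results = asyncio.run(run_parallel())
```

With concurrent execution the total wait is roughly that of the slowest sub-agent rather than the sum of all of them, and a later call to `fetch_metric("gross_bookings")` is served from the cache without running the lookup again.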

Conclusion

Finch represents a pivotal advancement in how Uber's financial teams access and interact with data. By eliminating the need to navigate multiple platforms, write complex SQL queries, or await data requests, analysts now receive real-time answers within Slack using natural language. The Uber Engineering Team has engineered a solution that removes significant friction from financial reporting and analysis by integrating curated financial data marts, large language models, metadata enrichment, and a secure system design.

Finch's architecture exemplifies a thoughtful balance of innovation and practicality. It employs a modular agentic workflow, ensures accuracy through continuous evaluation and testing, and achieves low latency via intelligent performance optimizations. The result is a system that reliably operates at scale and seamlessly integrates into the daily operations of Uber's finance teams.

Looking forward, Uber plans to expand Finch's capabilities, with a roadmap including deeper FinTech integration to support more financial systems and workflows. For executive users (e.g., CEO, CFO), a human-in-the-loop validation system featuring a "Request Validation" button will allow subject matter experts to review critical answers before final approval, bolstering trust for high-stakes decisions. The team is also working to support additional user intents and specialized agents, extending Finch beyond simple data retrieval to richer financial use cases such as forecasting, reporting, and automated analysis. As these capabilities evolve, Finch will transition from a helpful assistant into a central intelligence layer for Uber's financial operations.

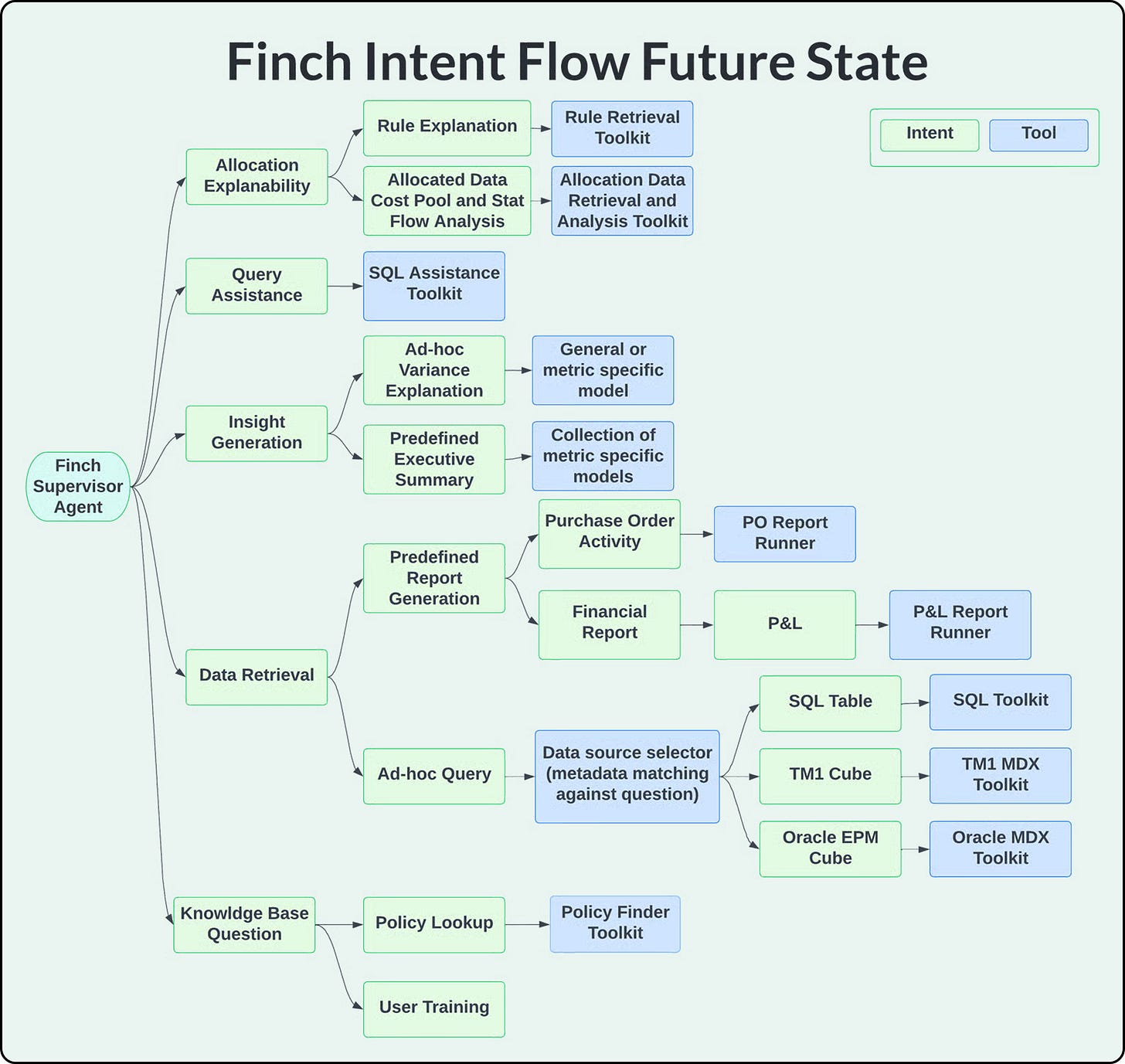

A glimpse into Finch’s Intent Flow Future State is shown below: