WebRTC vs. MoQ: A Deep Dive into Media Transport Protocols by Use Case

An expert analysis comparing WebRTC and Media over QUIC (MoQ) across various use cases like video calling, AI, and live streaming, predicting their future adoption.

This post marks my first editorial contribution here, following the discussions sparked by Fippo’s article, "Is everyone switching to MoQ from WebRTC?". My initial responses grew too long, prompting this deeper exploration. Fippo’s data suggests Media over QUIC (MoQ) isn’t yet widespread and won't dominate overnight, but its technical advantages as a protocol built on the newest Internet transports (QUIC, and WebTransport over HTTP/3) hint at significant future potential. The core question remains: will MoQ eventually replace WebRTC?

I find the framing of that question problematic. Internet media transmission encompasses diverse use cases. MoQ is often presented as a universal solution, but its true applicability warrants scrutiny, particularly in identifying where it’s most likely to emerge first.

This article will not delve into the deep technical specifics of MoQ. For background and the latest developments, I recommend two talks from last month’s RTC.on: Will Law’s "A QUIC Update on MoQ and WebTransport" and Ali Begen’s "Streaming Bad: Breaking Latency with MoQ". The latter also features demos you can try at https://moqtail.dev/demo/.

Special thanks to Renan Dincer and Sergio Garcia Murillo for their valuable feedback and review of this post.

Contents

- MoQ Replacement Plausibility by Use Case

- Video Calling

- Meetings

- Video Streaming

- In-between Use Cases

- Live Streaming

- Webinars / Townhalls

- Conclusions

MoQ Replacement Plausibility by Use Case

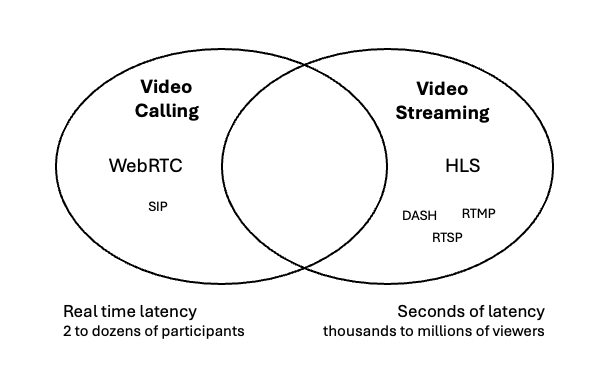

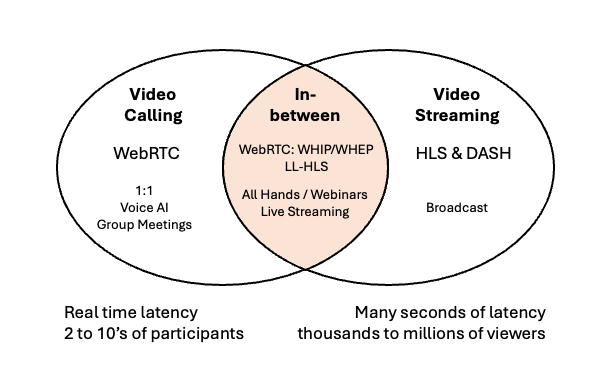

Media over the Internet has evolved from two distinct domains: video calling and video streaming. Video calling has largely standardized on WebRTC. Video streaming, on the other hand, involves various technologies, with HLS predominating for delivery to viewers today. While alternatives exist for both (e.g., SIP for video calling, DASH/RTSP for streaming), I will focus primarily on WebRTC and HLS, as they are the main protocols MoQ could potentially replace.

MoQ targets use cases and protocols


The overlap between these domains is particularly intriguing, but I will first cover the major video calling and video streaming use cases. For each, I'll also offer my prediction for WebRTC vs. MoQ by 2030.

Video Calling

1:1 (human:human)

One-to-one calls are extremely common. In his post, Fippo makes a strong case that "probably 40-50% of the time WebRTC is talking to a server." Our numerous black-box evaluations reveal that no major service uses "full mesh," so the remaining 50 to 60% of calls, the ones not talking to a server, are essentially all 1:1.

When there's no need for recording or server-side analysis, 1:1 calls are typically implemented as pure peer-to-peer (P2P). This lightweight architecture only requires a signaling server to connect the two clients (and possibly a TURN server for unusual network scenarios). WebRTC was designed as a P2P protocol at its core, and 1:1 implementations are optimized to avoid server usage, which adds costs and privacy concerns.
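To make the "only a signaling server" point concrete, here is a minimal sketch of what that server does for a 1:1 call, assuming a simple in-memory room. `SignalingRoom` and its methods are hypothetical names, not any product's API. It only passes opaque offers, answers, and ICE candidates between the two peers; media never touches it.

```python
from collections import defaultdict
from typing import Dict, List


class SignalingRoom:
    """Hypothetical in-memory signaling relay for one 1:1 call.

    It forwards opaque messages (SDP offers/answers, ICE candidates)
    between two peers. Media never passes through it.
    """

    MAX_PEERS = 2  # a 1:1 call has exactly two participants

    def __init__(self) -> None:
        self.peers: List[str] = []
        # Messages queued for each peer until it polls for them.
        self.inbox: Dict[str, List[dict]] = defaultdict(list)

    def join(self, peer_id: str) -> None:
        if len(self.peers) >= self.MAX_PEERS:
            raise RuntimeError("room is full: a 1:1 call allows two peers")
        self.peers.append(peer_id)

    def send(self, sender: str, message: dict) -> None:
        # Forward the message unchanged to the other peer; never inspect it.
        for peer in self.peers:
            if peer != sender:
                self.inbox[peer].append(message)

    def receive(self, peer_id: str) -> List[dict]:
        # Hand over everything queued for this peer and clear its inbox.
        messages = self.inbox[peer_id]
        self.inbox[peer_id] = []
        return messages
```

In use, Alice joins and sends an offer message, Bob receives it and replies with an answer the same way; after that exchange, media flows directly between them (or via TURN when the network requires it).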

MoQ is a client-server protocol, making it unlikely to be ideal here unless MoQ vendors can convincingly argue that interposing more infrastructure between two users is beneficial. I've worked on many projects that attempted to make this case, but I've never seen the actual results justify the additional cost.

My bet in 2030: WebRTC will still be used for 1:1 calls.

Voice AI (1:1 but one party is AI)

Voice AI is a relatively new WebRTC use case where a user sends audio and video to a real-time Large Language Model (LLM), which responds with a media stream. As Cloudflare wrote in late August:

"WebSockets are perfect for server-to-server communication and work fine when you don’t need super-fast responses, making them great for testing and experimenting. However, if you’re building an app where users need real-time conversations with low delay, WebRTC is the better choice."

However, unlike 1:1 human calls, this use case is client-server, not peer-to-peer. WebRTC's comprehensive but relatively slow Interactive Connectivity Establishment (ICE) process is designed to create connections between peers that may be behind NATs or firewalls. Voice AI systems reside on public servers, making much of this process overkill.

Do real-time LLM makers care about better media?

I question how significant the real-time media problem is to solve here, given that WebRTC doesn't appear to be a high priority for any of the AI hyperscalers. OpenAI largely uses WebRTC to connect to and from browsers, but it's implemented as an add-on gateway to its WebSocket system, not a native part of the platform (see my interview with Sean DuBois at OpenAI for more). OpenAI is actually the furthest ahead on WebRTC.

Ironically, Google—the primary driving force behind WebRTC—doesn't seem to use it at all in Gemini. You won't find any WebRTC activity if you check chrome://webrtc-internals on https://aistudio.google.com/, https://gemini.google.com/app, Gemini in Chrome, etc., and I haven't seen evidence it's different on native mobile.

WebRTC proponents often highlight its low-latency benefits for Voice AI. However, if latency is truly a major issue in Voice AI, why isn't WebRTC a truly native component of speech-to-speech Voice AI systems?

Media for Humans vs. LLMs

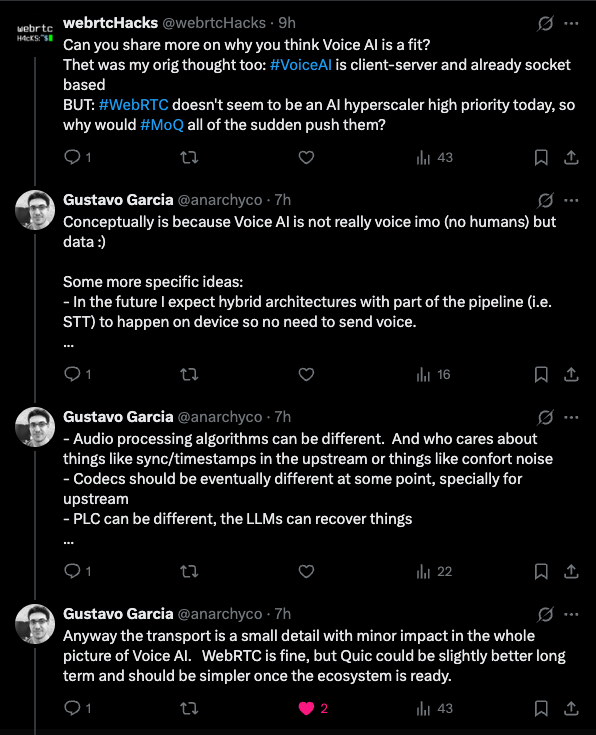

webrtcHacks regular, Gustavo Garcia, commented that Voice AI could be a good fit for MoQ, so I inquired why.

To summarize his argument: WebRTC excels at transmitting human voice, but this is inherently complex, leading to a complex solution. LLMs, however, don't require all the extra processing humans do and thus don't need such a complex solution, making MoQ potentially a better fit.

Gustavo also raises a point about local speech recognition—extendable to speech synthesis. Browsers already offer JavaScript APIs for this. However, to act more human, voice LLMs also interpret and adjust to the user’s tone and accent. Gemini’s Affective Dialog is one example. Current commodity speech engines on devices don't convey or interpret this extra information. I do anticipate on-device tokenization of media with newer neural chips in our phones and laptops, but this is not yet common.

Encoding tokens

Standardization efforts for encoding media streams for ML models are already underway. For instance, MPEG—the group behind the H.26X series of codecs—has a Video Coding for Machines (VCM) specification under MPEG-AI. Instead of optimizing for human viewing, this codec codes for machine detector needs. The standard, ISO/IEC 23888-2, is currently in the Committee Draft phase. It also has a sibling spec, FCM (Feature Coding for Machines), which goes further by compressing intermediate features/tensors rather than pixels (see the demo video). On the audio side, ACoM (Audio Coding for Machines) is an official MPEG Exploration with a Call for Proposals (Apr/Jul 2025) to define formats for machine listening and audio-feature transport.

Splitting inference between the client and server seems to be the future. While standardization efforts are still nascent, LLM vendors might develop proprietary mechanisms. For coordinating the transmission of these codecs, I could see developers using MoQ due to its novelty and potential ease of use. However, direct evidence for this outside of some academic papers (e.g., here) is scarce.

My bet in 2030: Hopefully something other than raw media over WebSocket, but it might not be WebRTC or MoQ.

Meetings

As Fippo noted, while many video conferencing vendors existed before WebRTC, almost all have transitioned to using WebRTC (Zoom being the last significant holdout). Multi-party video meetings present the most challenging use case discussed here—maximizing video quality for numerous users with constantly fluctuating bandwidth and minimal latency tolerance is inherently difficult. Sophisticated bandwidth control mechanisms like GCC (Google Congestion Control) took years to develop and fine-tune (see Kaustav’s excellent webrtcHacks post on GCC probing for an appreciation of just one aspect).

MoQ currently lacks such capabilities; they would need to be built. However, libwebrtc's work in this area is effectively complete and open source for reference. With modern coding agents like Codex/Claude adept at adapting existing code, it's not impossible for MoQ to develop a WebTransport-tuned GCC-like mechanism in the foreseeable future, provided there's sufficient motivation.
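For a sense of what "GCC-like" involves, here is a minimal sketch of just the loss-based half of GCC, following the update rule in the IETF draft (draft-ietf-rmcat-gcc-02): back off in proportion to loss when it exceeds 10%, probe up 5% when it is under 2%, and hold otherwise. The function name is my own, and the delay-based estimator (the part that reacts to queuing delay before loss occurs) is deliberately omitted, so this is far from what libwebrtc actually ships.

```python
def loss_based_update(rate_bps: float, loss_fraction: float) -> float:
    """One update step of GCC's loss-based controller (illustrative sketch).

    loss_fraction is the fraction of packets reported lost since the
    last feedback report, in [0, 1].
    """
    if not 0.0 <= loss_fraction <= 1.0:
        raise ValueError("loss_fraction must be between 0 and 1")
    if loss_fraction > 0.10:
        # Heavy loss: cut the rate in proportion to the loss observed.
        return rate_bps * (1.0 - 0.5 * loss_fraction)
    if loss_fraction < 0.02:
        # Negligible loss: probe for more bandwidth by 5%.
        return rate_bps * 1.05
    # Moderate loss (2% to 10%): keep the current rate.
    return rate_bps
```

Feeding it a sequence of loss reports such as 0%, 0%, 20% raises the rate twice and then cuts it by 10%. The hard, years-long part is everything this leaves out: delay-gradient estimation, probing, and tuning against real networks.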

What would be that motivation? I believe WebRTC app developers would consider switching to MoQ if it offered a measurable quality improvement. The greater the improvement, the stronger the motivation. Would I expend significant effort to switch stacks for a 1-2% improvement? No. More than 10%? Likely, depending on the effort and risk involved.

Do we need better video quality?

Currently, MoQ has a long way to go to even be a contender. If MoQ’s primary adoption driver is superior video quality, there must first be user demand for quality exceeding what's available today. If users begin demanding 4K or AR for their video calls, then any marginal gains MoQ provides might become much more significant.

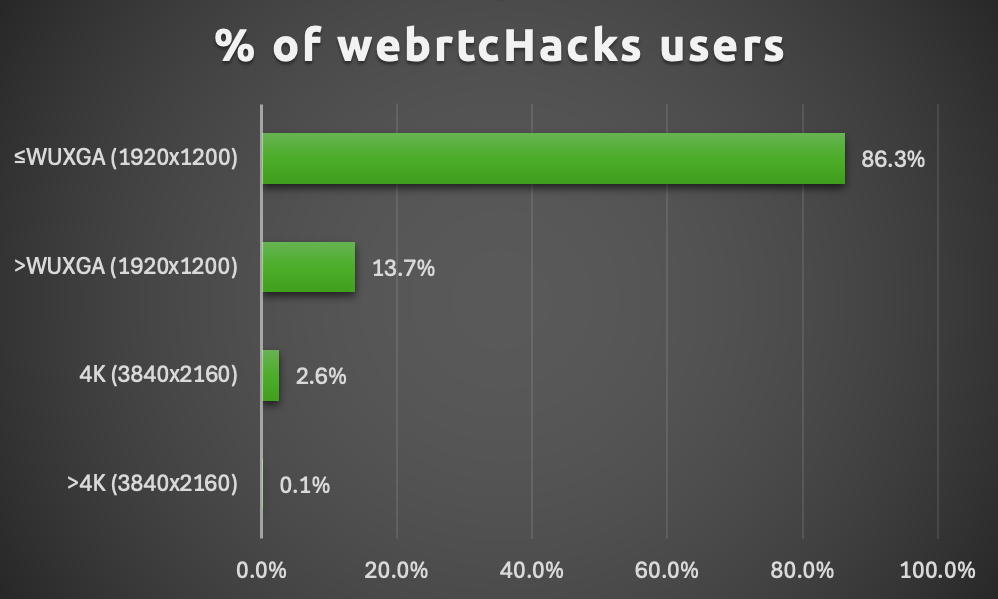

Screen resolution is a prerequisite for benefiting from higher camera resolutions. To fit four 1080p callers in a 2x2 grid in full screen, your display needs to be at least 3840x2160 (4K) to show everyone at full resolution. Unfortunately, robust public data on display resolutions is limited, so you must consider your own user data. Every time I've examined the adoption of screens larger than Full HD (1920x1080, i.e., 1080p), I've been disappointed.
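The grid arithmetic generalizes simply. This sketch assumes tiles sit edge to edge with no borders, gaps, or toolbars, so real layouts need even more pixels; `grid_canvas` and `fits_on` are illustrative helpers, not from any library.

```python
from typing import Tuple


def grid_canvas(cols: int, rows: int, tile_w: int = 1920,
                tile_h: int = 1080) -> Tuple[int, int]:
    """Smallest display (width, height) that shows cols x rows tiles at
    full resolution, assuming no borders, gaps, or UI chrome."""
    return cols * tile_w, rows * tile_h


def fits_on(display_w: int, display_h: int, cols: int, rows: int) -> bool:
    """True if a display can show a grid of 1080p tiles without downscaling."""
    need_w, need_h = grid_canvas(cols, rows)
    return display_w >= need_w and display_h >= need_h
```

By this measure, a common 2560x1440 monitor already falls short of the 2x2 case, and a 3x3 grid of 1080p tiles would need 5760x3240.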

Statcounter shows that less than 8% of desktop users had a resolution larger than 1920x1080, though a significant “other” category could include higher resolutions. I also checked Google Analytics for webrtcHacks in October: while our audience generally has better hardware, still less than 3% view on screens with 4K resolution or better.

Caption: Few web users have screens large enough to view content above Full HD. Percentage of active webrtcHacks.com viewers by screen resolution in October 2025, as measured in Google Analytics. The 1600x1600 resolution is excluded (a fingerprinting-blocker placeholder value).


The reality is, the hardware required to display numerous users in ultra-high resolutions during video meetings isn't widely adopted today, and this percentage hasn't shifted rapidly. Certainly, niche applications exist that require very high resolutions in meeting-style environments, but will it be worthwhile for them to heavily invest in optimizing MoQ when WebRTC already functions effectively?

My bet in 2030: WebRTC stays.

Video Streaming

I will only briefly cover "traditional video streaming" here—the variety that has existed since the Flash era and is predominantly HTTP Live Streaming (HLS) today, alongside other formats like DASH. This is a vast market with many vendors and an established ecosystem. It's also not WebRTC, and since this is a WebRTC blog focusing on the left side of my Venn diagram above, I'll only touch upon it.

In the next section, I will also review Low-Latency HLS (LL-HLS) and DASH’s low-latency variant for live streaming, and how they compete with WebRTC and now MoQ.

Traditional video streaming

Most video streaming inherently has latencies of several seconds—typically anywhere from 6 to 30 seconds. Video is converted into smaller chunks and distributed via a CDN. WebRTC has no place here; it's designed for different uses, and no one recommends it for traditional streaming.
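Where do those seconds come from? Here is a rough sketch, assuming the player follows the HLS spec's (RFC 8216) guidance not to start playback less than three target durations from the end of a live playlist. Everything else (encoding, ingest, CDN propagation) is lumped into a single assumed term, and the function name is hypothetical.

```python
def hls_live_latency(target_duration_s: float, other_delays_s: float = 0.0,
                     holdback_segments: int = 3) -> float:
    """Rough live latency for classic HLS, in seconds (sketch).

    The player starts holdback_segments target durations behind the end of
    the live playlist (RFC 8216 says at least three). other_delays_s is an
    assumed lump sum for encoding, ingest, and CDN propagation.
    """
    if target_duration_s <= 0:
        raise ValueError("target duration must be positive")
    return holdback_segments * target_duration_s + other_delays_s
```

With 6-second segments, the hold-back alone is 18 seconds; with 10-second segments it is 30, which is why chunked delivery sits in the range above before any other delay is counted.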

Unlike WebRTC, MoQ does offer some advantages in traditional longer-latency streaming, particularly noticeable on unreliable networks. However, if latency is disregarded, I'm not convinced MoQ is compelling in this domain. This category is distinct from "live streaming" mainly because low latencies weren't technically feasible when HLS was initially developed. Lower-latency options have since evolved into a new market (or submarket).

What if you could achieve lower latencies out-of-the-box without all the added complexity? That’s MoQ’s proposition. With that in mind, let’s examine the “in-between” architectures.

My bet in 2030: Consider MoQ only if lower latency is important.

In-between Use Cases

As just covered, WebRTC originated on the left side of the spectrum for real-time interactivity with multiple streams for video calls and meetings. Video streaming, on the right, involves one input stream with many seconds of latency for thousands to tens of millions of viewers.

Then, meetings began to grow in size, and as they expanded, most attendees became viewers—resembling a streaming scenario more closely. WebRTC systems have scaled up, now allowing up to 1000 viewers. On the streaming side, services like Clubhouse, Periscope, Twitch, and Mixer demonstrated the value of enabling hosts to interact with audiences. Lower latency directly improves interactivity. Low latencies also allow internet streaming to emulate traditional over-the-air broadcast TV, an expectation for sports and other live events.

This created a gap between what WebRTC and HLS could offer. Both sides started pushing towards the center from their respective positions. As I'll explain below, this is likely where MoQ’s sweet spot will be.

MoQ best targets the parts in-between


Live Streaming

No one ever wishes for a longer delay between themselves and a live event they are watching. The essence of "live" is immediacy—being as close to physically present as possible. The TV broadcast industry has established the expectation for live-event latency at mere seconds.

By optimizing HLS and reducing chunk sizes, LL-HLS can achieve latencies of 2 to 4 seconds, similar to low-latency DASH. This appears to be the practical limit for this technology.
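The same back-of-the-envelope math applies to LL-HLS, where the hold-back is expressed in partial segments. As I read the LL-HLS additions to the HLS spec, PART-HOLD-BACK must be at least twice the part target duration and should be at least three times it. The helper below is a hypothetical sketch, with other delays passed in as an assumption.

```python
def ll_hls_latency(part_target_s: float, other_delays_s: float = 0.0,
                   holdback_parts: float = 3.0) -> float:
    """Rough LL-HLS live latency, in seconds (sketch).

    PART-HOLD-BACK must be at least 2x the part target duration and should
    be at least 3x; other_delays_s is an assumed lump sum for encoding,
    ingest, and delivery.
    """
    if holdback_parts < 2.0:
        raise ValueError("PART-HOLD-BACK must be at least twice the part target")
    return holdback_parts * part_target_s + other_delays_s
```

One-second parts with a 3x hold-back give 3 seconds before any encoding or ingest delay, consistent with the 2 to 4 second range and with why shrinking chunks further runs out of room.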

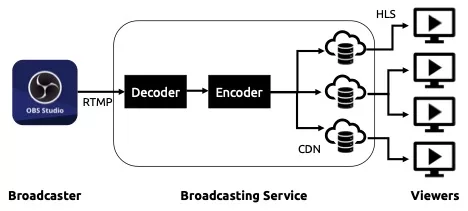

Live streaming services typically use RTMP (or RTMPS) for ingest, which adds several more seconds of latency. Several seconds can feel like a long time to a live stream host who expects instant audience feedback, as they are accustomed to in video meeting software.

High-level diagram of a typical broadcast service using RTMP and HLS. Decoding, encoding, and file distribution each add inherent delays


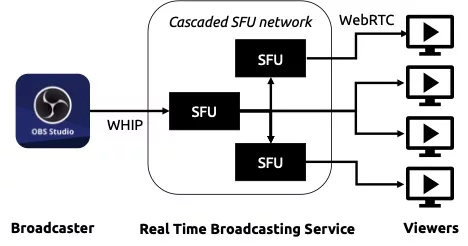

The solution for even faster performance is to use WebRTC, much like meetings do. Instead of transferring chunks, a series of SFUs (Selective Forwarding Units) replicate and relay live streams to users. Latencies are typically less than a second. The WebRTC-HTTP Ingestion Protocol (WHIP) and WebRTC-HTTP Egress Protocol (WHEP) facilitate connecting low-latency streams into and out of real-time streaming networks.
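WHIP itself is deliberately simple: the publisher POSTs its SDP offer over HTTP and gets the SDP answer back. Below is a minimal sketch using Python's standard `urllib.request` that only builds the requests without sending them; the function names, endpoint URLs, and token are placeholders. Per the WHIP spec, a successful POST returns 201 Created with the answer and a Location header for the session, and an HTTP DELETE on that URL ends the session.

```python
import urllib.request
from typing import Optional


def build_whip_publish(endpoint: str, sdp_offer: str,
                       token: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) the HTTP POST that starts a WHIP session."""
    headers = {"Content-Type": "application/sdp"}  # the body is a raw SDP offer
    if token is not None:
        # WHIP endpoints can authenticate publishers with an HTTP bearer token.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(
        endpoint, data=sdp_offer.encode("utf-8"), headers=headers, method="POST"
    )


def build_whip_teardown(session_url: str) -> urllib.request.Request:
    """Build the HTTP DELETE that ends a session, sent to the URL the
    server returned in the Location header of its 201 Created response."""
    return urllib.request.Request(session_url, method="DELETE")
```

To actually publish, pass the first request to `urllib.request.urlopen`, read the SDP answer from the response body, and keep the Location header for teardown. WHEP follows the same offer/answer-over-HTTP pattern for playback.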

High-level diagram of a real-time broadcasting service using a cascaded SFU network. SFUs quickly duplicate and relay media with minimal latency


WebRTC vs. LL-HLS

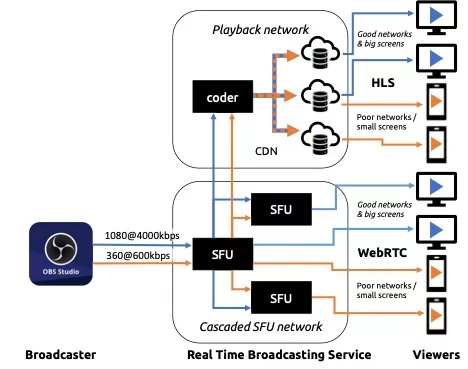

LL-HLS holds an advantage for replay; once chunks are on the CDN, they are permanently available for anytime viewing. WebRTC streams are ephemeral, lacking a built-in replay mechanism without an additional recording system. In practice, most streamers encode multiple renditions: LL-HLS for live viewing and multiple HLS streams for varied network conditions and high-quality replay. WebRTC-based systems often also utilize HLS for replay.
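Those HLS renditions are advertised to players through a multivariant (master) playlist. Here is a minimal sketch that renders one; the function name is hypothetical, and real playlists usually add attributes such as CODECS that are omitted here.

```python
from typing import List, Tuple

# (bandwidth in bits per second, width, height, media playlist URI)
Rendition = Tuple[int, int, int, str]


def multivariant_playlist(renditions: List[Rendition]) -> str:
    """Render a minimal HLS multivariant playlist listing each rendition."""
    lines = ["#EXTM3U"]
    for bandwidth, width, height, uri in renditions:
        # Each variant is an EXT-X-STREAM-INF tag followed by its URI line.
        lines.append(
            f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth},RESOLUTION={width}x{height}"
        )
        lines.append(uri)
    return "\n".join(lines) + "\n"
```

A made-up ladder such as 1080p at 5 Mbps, 720p at 2.5 Mbps, and 360p at 1 Mbps would produce three variant entries, and the player switches among them as network conditions change.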

Which is cheaper? I’ve analyzed this several times, and the answer has never been definitive. It boils down to the cost of a CDN versus the cost of SFU processing. However, both these costs are dwarfed by egress bandwidth charges once you reach scale. At the protocol level, WebRTC’s superior bandwidth adaptation usually means it transmits less information, but these overhead differences are typically only a few percentage points. Major differences in bandwidth usually stem from the choice of codec, and comparable codec options exist for both systems, largely depending on the implementor.
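Since egress dominates at scale, it helps to run the numbers in volume terms. This sketch computes total egress in gigabytes for a given bitrate, audience, and duration, with protocol overhead as an assumed fractional input. The helper name is hypothetical, and I'm not assuming any price: multiply by your own per-GB rate.

```python
def egress_gb(bitrate_mbps: float, viewers: int, hours: float,
              overhead: float = 0.0) -> float:
    """Total egress in gigabytes (10^9 bytes) for a one-to-many stream.

    overhead is an assumed fractional protocol overhead, e.g. 0.03 for 3%.
    """
    bits_per_viewer = bitrate_mbps * 1_000_000 * hours * 3600 * (1 + overhead)
    return bits_per_viewer * viewers / 8 / 1e9
```

For example, a 3 Mbps stream to 10,000 viewers for one hour is 13,500 GB, and a 3% overhead difference adds about 405 GB. That is real money at scale, but it is why the few-percent protocol differences matter less than codec choice.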

How broadcasting with Simulcast could look for both real-time, low-latency viewers and for playback network offloading. Diagram simplified to only show 2 layers.


How is MoQ different?

MoQ aims to offer a "best of both worlds" approach, bridging WebRTC and HLS/DASH.

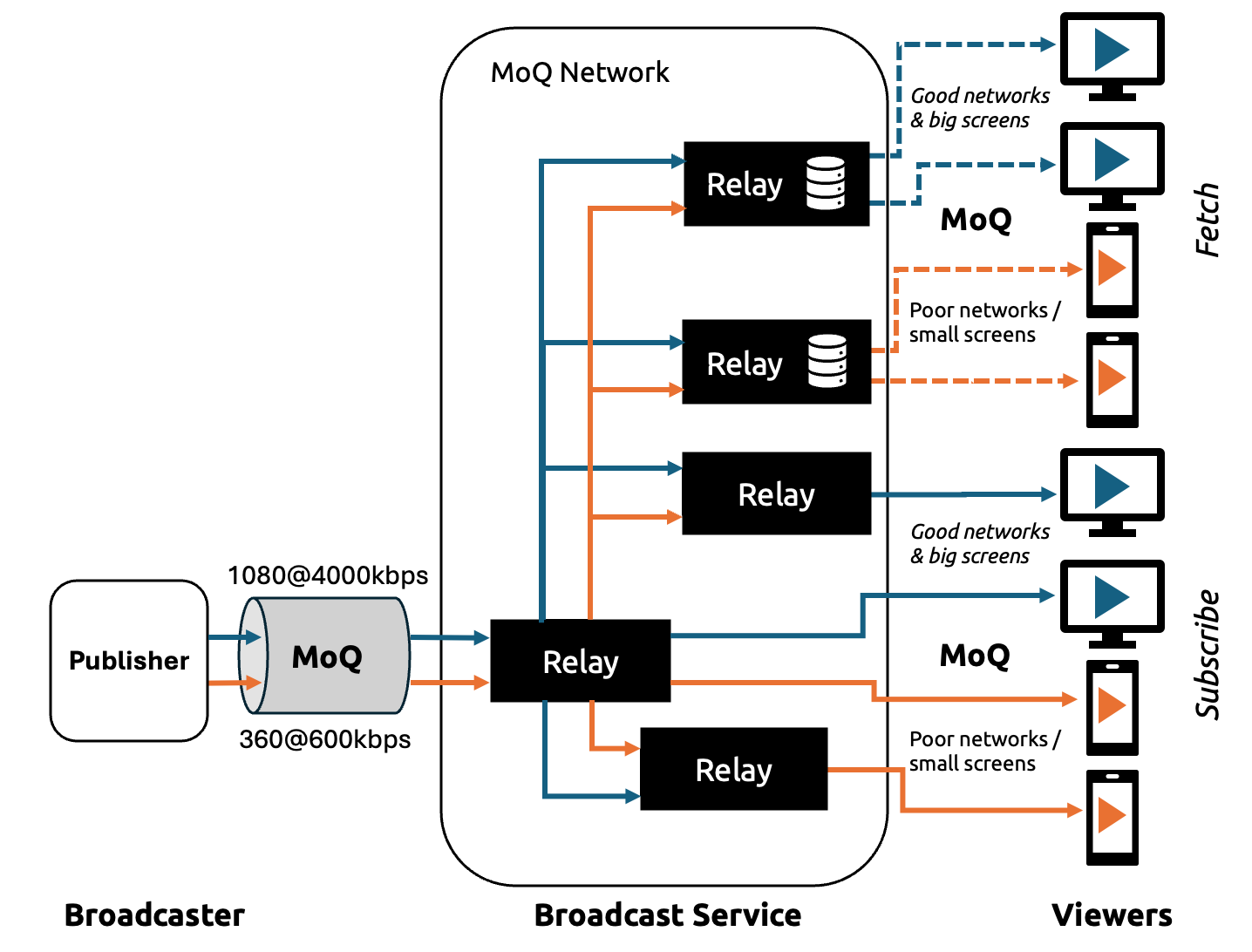

Unlike LL-HLS, which relies on playlists and (partial) segments optimized for CDN delivery, MoQ publishes a continuous live feed that CDNs/relays can cache and fan-out immediately at the live edge. This minimizes playlist churn and catch-up delays, essentially adopting the same approach a WebRTC SFU takes but more formally specified through a bespoke publish/subscribe model in its architecture.
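To illustrate the publish/subscribe idea, here is a toy relay for a single track, loosely modeled on MoQ's track, group, and object hierarchy. It is a hypothetical sketch, not the MoQ Transport wire protocol: each published object is cached and then fanned out immediately to every live subscriber, much like an SFU relaying packets.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# Callback invoked per delivered object: (group_id, object_id, payload).
Deliver = Callable[[int, int, bytes], None]


class Relay:
    """Toy pub/sub relay for one track (hypothetical, not MoQT)."""

    def __init__(self) -> None:
        # group_id -> objects in arrival order
        self.cache: Dict[int, List[bytes]] = defaultdict(list)
        self.subscribers: List[Deliver] = []

    def subscribe(self, deliver: Deliver) -> None:
        self.subscribers.append(deliver)

    def publish(self, group_id: int, payload: bytes) -> None:
        # Cache first so later joiners or fetches can be served locally...
        objects = self.cache[group_id]
        objects.append(payload)
        object_id = len(objects) - 1
        # ...then fan out immediately to every live subscriber.
        for deliver in self.subscribers:
            deliver(group_id, object_id, payload)
```

Because the relay caches as it forwards, the same node can serve live subscribers and later requests without a separate chunking and packaging step.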

Furthermore, by leveraging QUIC, MoQ benefits from independent streams and rapid connection setup. This helps reduce startup/join times and prevents stalls during congestion bursts. Relays can also prioritize the newest live data, allowing late joiners to synchronize closer to "now."
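Late joining and newest-first delivery can be sketched in a few lines. This assumes each group begins with an independently decodable object (such as a keyframe), so a new subscriber can start at the newest cached group instead of replaying old data. Both helper names are hypothetical, and the actual MoQ Transport drafts define their own priority and ordering rules, which this does not reproduce.

```python
from typing import Dict, List, Tuple


def join_point(cache: Dict[int, List[bytes]]) -> List[Tuple[int, int, bytes]]:
    """Objects a late joiner receives first: the newest cached group only.

    Assumes every group starts with an independently decodable object
    (e.g. a keyframe), so playback can begin at the start of that group.
    """
    if not cache:
        return []
    newest = max(cache)
    return [(newest, i, obj) for i, obj in enumerate(cache[newest])]


def send_order(pending_groups: List[int]) -> List[int]:
    """Under congestion, serve the newest groups first so viewers stay near 'now'."""
    return sorted(pending_groups, reverse=True)
```

The design choice is to sacrifice completeness for freshness: older groups may be skipped entirely rather than delivered late.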

MoQ aims to combine WebRTC-like interactivity and latency with HLS/DASH-like distribution and caching. This makes it particularly appealing for one-to-many live events that require sub-second join times and Internet-scale fan-out without maintaining thousands of individual real-time sessions.

Much of what MoQ brings to live streaming, WebRTC already provides using different mechanisms over UDP. However, unlike WebRTC, MoQ also features a Fetch mode, where it operates like a traditional HLS CDN. This is an advantage when video also needs to be available for playback, as it theoretically allows for a single MoQ architecture versus two separate architectures (WebRTC & HLS).
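The contrast with Fetch can be shown against the same kind of group cache: instead of joining at the live edge, the client asks for a bounded range of past groups and gets them in order. `fetch` here is a hypothetical helper, not the MoQT FETCH message format.

```python
from typing import Dict, List, Tuple


def fetch(cache: Dict[int, List[bytes]], start_group: int,
          end_group: int) -> List[Tuple[int, int, bytes]]:
    """Return cached objects for groups start_group..end_group (inclusive)
    in ascending order, as an on-demand (non-live) request would need."""
    if start_group > end_group:
        raise ValueError("start_group must not exceed end_group")
    result = []
    for group_id in range(start_group, end_group + 1):
        # Missing groups are simply skipped in this sketch.
        for object_id, payload in enumerate(cache.get(group_id, [])):
            result.append((group_id, object_id, payload))
    return result
```

Unlike the live path, ordering and completeness matter more than freshness here, which is exactly the HLS-style replay behavior a single MoQ architecture would need to cover.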

Finally, remember that RTMP is most commonly used as the ingestion protocol. WHIP replaces this with WebRTC. MoQ could be used for both ingest and egress, further simplifying the architecture with a single protocol.

A potential end-to-end MoQ network mixing live relay subscribers with non-realtime, fetch playback


My bet in 2030:

- New services will utilize MoQ.

- Existing LL-HLS services may transition to MoQ (assuming it proves more reliable for the same price).

- Existing WebRTC Live Streaming services will likely not change (it’s harder to modify a WebRTC stack than an HLS stack).

Webinars / Townhalls

Meeting software is typically designed for universal, anytime interactivity. In webinar and town hall scenarios, there are usually one or a few presenters, with most of the audience watching, and only sporadic interaction from a small number of attendees.

Various technology choices exist here, depending on the required level of interactivity and the process desired for audience participation. This usually boils down to two approaches:

- Massive Meeting: Put everyone on WebRTC and treat it like a large meeting, but place most of the audience in a view-only mode to conserve resources.

- Broadcast with Interaction: Broadcast over HLS and have audience members join a live session (usually WebRTC-based) when they wish to participate. This isn't instant, as they might be many seconds behind on the HLS stream.

Some platforms allow users to pause or watch out of sync with the livestream, while others enforce a live-only experience.

Can MoQ help here? Possibly, but this use case is much more fragmented. For example, if breakout rooms are a crucial feature, services are likely to stick with WebRTC. Conversely, if the webinar app more closely resembles a live streaming app without audience members coming "on stage," then MoQ could be a good fit.

My bet in 2030:

- New services will seriously consider MoQ.

- Existing services will likely maintain their current approaches.

Conclusions

Here's my summarized take:

| Use Case | Major Tech Today | MoQ Fit |
|---|---|---|
| 1:1 calls | WebRTC | 👎 |
| Meetings | WebRTC | 👎 |
| Voice AI | WebSocket | 🤷 |
| Streaming | HLS | 🤏 |
| Live Streaming | RTMP/LL-HLS & WebRTC | 👍 |
| Webinars | LL-HLS & WebRTC | 👍👎 |

"Fit" doesn’t equate to commercial viability, but it is a prerequisite.

Many other use cases exist beyond meetings and streaming, which are the major ones. Undoubtedly, MoQ will be a good fit for many of these, though perhaps relatively smaller in scale. My aim here was to examine the largest potential MoQ use cases today.

Will MoQ significantly impact WebRTC usage? Probably not, but the Internet is vast, offering ample space for both. I anticipate many more MoQ posts here. In fact, I just bought moqHacks.com 😀

Author: Chad Hart