Why Engineering Leadership Is More Critical Than Ever in the AI Era

Explore how AI is reshaping engineering leadership, from the federal push for AI consolidation and the rise of context engineering to the end of the 'Scale' era and the critical need for impact measurement and clear project naming conventions. Learn why strategic oversight and judgment are paramount.

A common narrative holds that advances in artificial intelligence will make engineering leadership obsolete. Historical precedents such as the Industrial Revolution suggest the opposite. As the cost of generating code falls, demand for high-level judgment and strategic oversight is set to rise sharply. Leaders must start from customer pain points rather than from models, and use frameworks to manage risk and ensure value delivery.

1. The Federal Imperative for AI Consolidation

The U.S. government has moved to establish a unified national regulatory framework for artificial intelligence through an executive order. The directive uses substantial broadband program funding as leverage against state-level regulations, mirroring the consolidation seen in the early days of the internet. With AI data centers, engineers, and users distributed across state and national borders, a federal approach via the Interstate Commerce Clause provides a logical mechanism to prevent a fragmented landscape of state restrictions. The overarching goal is not merely regulation but a cohesive environment for AI development nationwide.

2. From Prompt Engineering to Context Engineering

A pivotal shift is underway in how we work with AI agents, from 'prompt engineering' to 'context engineering.' The shift is underscored by the Linux Foundation's establishment of the Agentic AI Foundation (AAIF), an initiative supported by tech giants such as Amazon, Google, and Microsoft. The AAIF brings together key projects such as Anthropic’s Model Context Protocol (MCP) and OpenAI’s AGENTS.md. The vision is a future in which prompts stay simple while the underlying knowledge corpus supplies the rich context agents need to work autonomously with repositories. A single prompt addresses one specific problem; comprehensive context can help solve many.

3. The Demise of the 'Scale Is All You Need' Paradigm

At events such as NeurIPS 2025, leading experts have acknowledged that simply increasing model size is no longer sufficient to achieve general intelligence. This aligns with industry data from MIT and McKinsey indicating that 95% of companies are not achieving significant return on investment (ROI) from their generative AI initiatives. The era of exponential gains derived solely from raw computational power is drawing to a close. Future value in AI will come not from ever-larger training runs but from the practical, efficient application of existing technology: fine-tuning, optimization, and solving specific business problems.

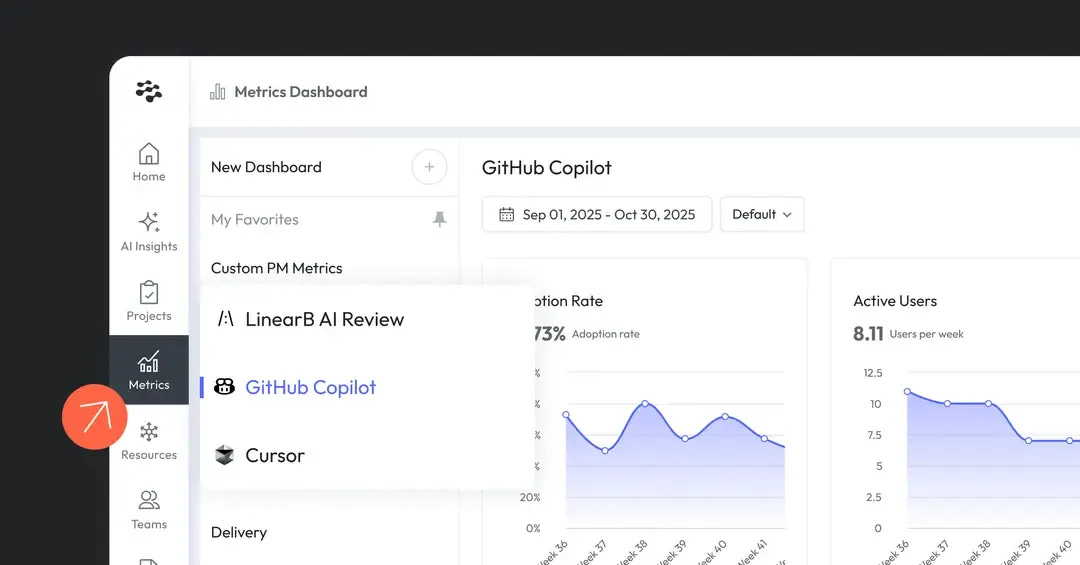

4. Bridging the Measurement Gap for AI Assistants

Engineering leaders are investing more and more in AI tools like Copilot and Cursor, yet a critical challenge persists: measuring their impact. New dashboards address this by offering a consolidated view of AI assistant metrics, including adoption, acceptance rates, and engagement, and linking them directly to delivery outcomes. This lets organizations move beyond simply purchasing tools to understanding how deeply those tools are integrated into the Software Development Life Cycle (SDLC) and where trust is building. By turning AI usage data into actionable engineering insights, teams can optimize their investments.
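As a rough illustration of two of the metrics named above, the following minimal sketch computes an adoption rate and an acceptance rate from per-developer usage counts. The `AssistantUsage` record, its field names, and the sample numbers are hypothetical and do not reflect the export format of Copilot, Cursor, or any specific dashboard.

```python
from dataclasses import dataclass


@dataclass
class AssistantUsage:
    """Hypothetical per-developer usage record for one reporting period."""
    developer: str
    suggestions_shown: int
    suggestions_accepted: int


def adoption_rate(usage, team_size):
    """Share of the team that was shown at least one suggestion."""
    active = {u.developer for u in usage if u.suggestions_shown > 0}
    return len(active) / team_size if team_size else 0.0


def acceptance_rate(usage):
    """Accepted suggestions divided by shown suggestions, across the team."""
    shown = sum(u.suggestions_shown for u in usage)
    accepted = sum(u.suggestions_accepted for u in usage)
    return accepted / shown if shown else 0.0


# Illustrative data only: a team of four, one of whom has no usage record.
usage = [
    AssistantUsage("dev-a", 200, 60),
    AssistantUsage("dev-b", 100, 30),
    AssistantUsage("dev-c", 0, 0),
]

print(f"adoption:   {adoption_rate(usage, team_size=4):.0%}")
print(f"acceptance: {acceptance_rate(usage):.0%}")
```

These ratios only describe usage. Connecting them to delivery outcomes would require joining them with delivery data such as cycle time, which this sketch leaves out.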

5. Prioritizing Clarity Over Cleverness in Project Naming

The industry's inclination towards 'clever' or abstract project names imposes a high cognitive cost on developers, turning dependency lists into deciphering exercises. A more effective strategy is to use namespaces that cluster projects semantically (e.g., agent-marketing, agent-copy). This makes repositories easier for human teams to scan and also helps Large Language Models (LLMs) comprehend the system architecture. Sometimes a straightforward, 'boring' name is far more useful than a creative one.
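To show why namespaced names are easier to scan, here is a small sketch that clusters project names by their prefix. It assumes a convention in which the text before the first hyphen is the namespace. The function name, the separator, and the `billing-invoices` and `phoenix` entries are hypothetical additions for illustration.

```python
from collections import defaultdict


def cluster_by_namespace(names, sep="-"):
    """Group project names by the prefix before the first separator.

    Names without a separator land in a catch-all bucket, which is
    where 'clever' one-word names tend to pile up.
    """
    clusters = defaultdict(list)
    for name in sorted(names):
        prefix, found, _rest = name.partition(sep)
        key = prefix if found else "(no namespace)"
        clusters[key].append(name)
    return dict(clusters)


# "agent-marketing" and "agent-copy" come from the text; the rest are made up.
repos = ["agent-marketing", "agent-copy", "billing-invoices", "phoenix"]

for namespace, members in cluster_by_namespace(repos).items():
    print(f"{namespace}: {', '.join(members)}")
```

With a prefix convention, the grouping falls out of the names themselves. A name like `phoenix` reveals nothing about where it belongs without extra documentation.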