YouTube's Inconsistent Moderation: Tech Tutorials Flagged as Dangerous

A popular tech YouTuber faced severe repercussions when YouTube flagged two Windows 11 installation tutorials as dangerous and issued community guidelines strikes against them. Although the videos were eventually restored, the incident highlights critical flaws in automated moderation and raises questions about human oversight when legitimate content is misidentified as life-threatening.

Big Tech platforms are often criticized for their content moderation practices, particularly when legitimate technical content, such as Linux or homelab tutorials, is flagged or removed with little explanation or recourse for creators. Recently, a popular tech YouTuber experienced similar issues, with their entire channel at risk due to YouTube's actions.

YouTube's Moderation Inconsistencies

Two weeks prior, YouTube removed a video demonstrating how to install Windows 11 25H2 with a local account, claiming that it was "encouraging dangerous or illegal activities that risk serious physical harm or death." It subsequently took down a second video showing how to bypass Windows 11's hardware requirements to install the operating system on unsupported systems.

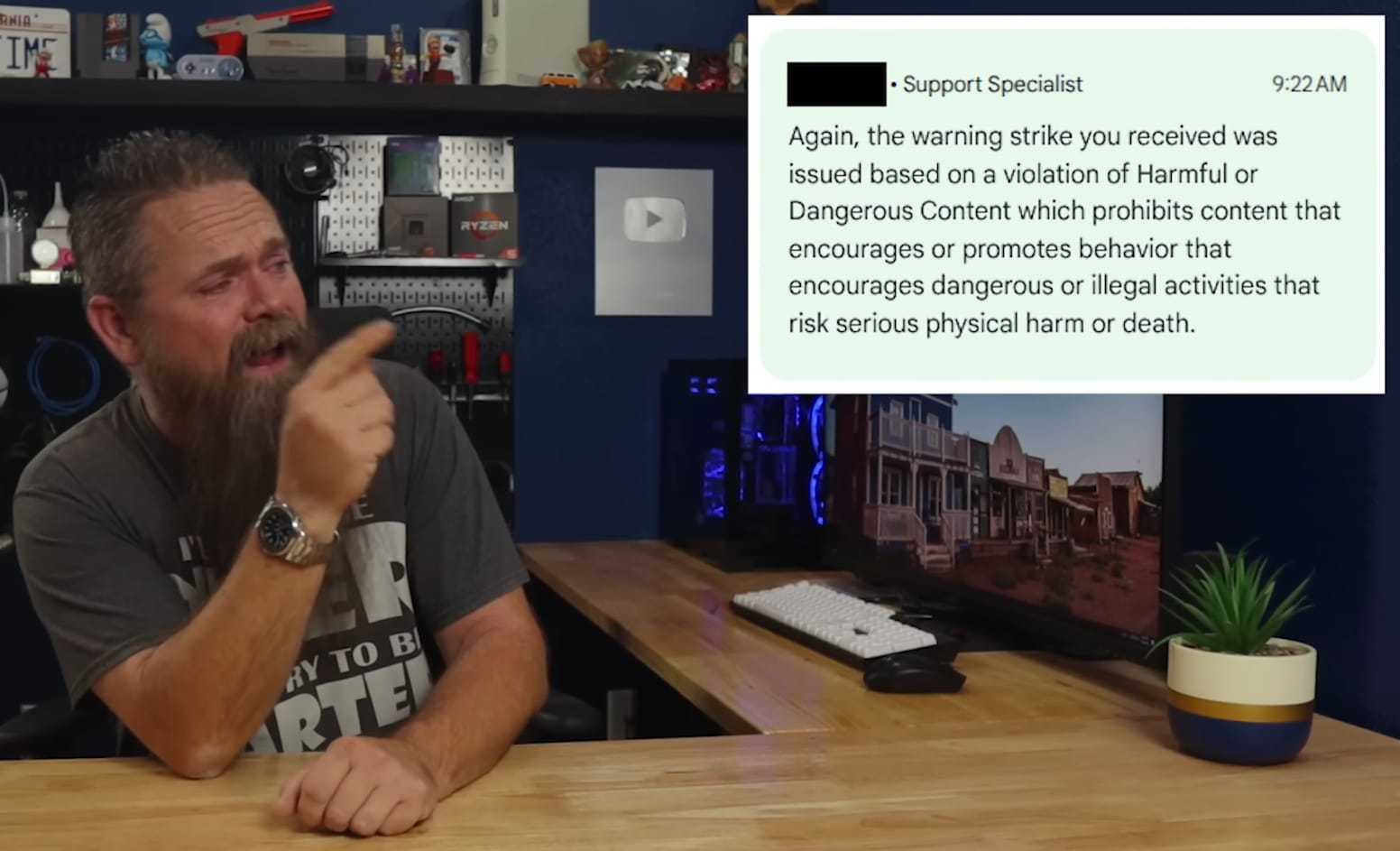

Both videos received community guidelines strikes. Appeals for both were promptly denied: one rejection arrived within 45 minutes and the other in just five minutes. Initial speculation pointed to overzealous AI moderation, and some also questioned whether Microsoft was involved.

A Reversal and Lingering Questions

The situation took a turn when YouTube eventually restored both videos. The platform claimed its "initial actions" were not a result of automated processes. This raises significant questions: if human reviewers were involved, how could Windows installation tutorials be deemed to pose a "risk of death"?

This incident highlights how automated moderation systems frequently struggle to distinguish legitimate content from harmful material, primarily because they lack contextual understanding. Despite billions invested in AI, Big Tech's moderation tools often misclassify harmless tutorials as dangerous, as both this case and the recent removal of Enderman's personal channel show.

Meanwhile, actual spam often slips through unnoticed. These platforms critically need robust human oversight: automation can assist in content review, but it cannot adequately replace human judgment, especially in complex or nuanced cases.